This episode explains that AI only delivers real ROI when organizations invest in upskilling their people—not just deploying tools. Many companies expect immediate productivity gains from AI, but without the skills to use it effectively, the impact stays low. The real “profit engine” comes from enabling employees to work differently with AI: improving decision-making, accelerating execution, and redesigning workflows. Upskilling turns AI from a passive assistant into an active driver of business value, helping organizations unlock measurable outcomes instead of just surface-level adoption.

You invest in AI, but you see little change in your business. Many organizations share your frustration. Studies show that up to 95% of AI pilots never deliver measurable ROI. You might notice your team completes AI training, but you still wait for real results. The problem is clear: traditional metrics miss the mark. You need to focus on time saved, fewer mistakes, and smooth AI adoption in daily work. With The AI Profit Engine, you can finally link training to true business impact.

Key Takeaways

- Align AI training objectives with your business goals to avoid wasted resources.

- Encourage collaboration across departments to ensure AI projects deliver collective value.

- Establish clear ownership for AI initiatives to maintain accountability and momentum.

- Integrate AI training into daily workflows for immediate and measurable impact.

- Set specific, measurable objectives before launching AI training to track success.

- Gather feedback regularly to adapt and improve AI solutions over time.

- Ensure high-quality data governance to support reliable AI outputs and maintain trust.

- Use a self-assessment checklist to identify gaps and prioritize improvements in AI training.

Why AI Training Fails ROI

You want to see real business impact from your AI investment, but too often, the results fall short. Many organizations face the same problem. The reasons go deeper than just technology. You need to look at how your teams set objectives, work together, and measure success.

Common Causes of Zero ROI

Misaligned Objectives

When your AI training does not connect to your business outcome, you risk wasting time and money. Many companies start AI initiatives with good intentions, but they focus on technology instead of solving real problems. This leads to inconsistent results and missed opportunities for measurable business outcomes.

| Statistic | Percentage |
|---|---|
| Companies reporting minimal revenue or cost improvements | 60% |
| Companies seeing no measurable returns | 74% |
| Companies reporting EBIT gains from AI | 39% |

You can see that most companies do not achieve measurable returns because their objectives do not match their business goals. If you want to unlock the value of AI, you must align your strategy with the needs of your business.

Siloed Implementation

AI projects fail when teams work in isolation. Different departments often chase their own key performance indicators, which leads to misaligned incentives. You might see individual teams succeed, but these wins do not add up to real business value. Organizational alignment is key. Without it, your AI adoption will not deliver the impact you expect.

- Teams optimize for their own KPIs, not the company’s goals.

- Murky attribution makes it hard to see which actions drive business value.

- Misaligned incentives mean individual wins do not help the whole business.

Ownership Gaps

Ownership gaps create confusion and slow progress. When no one takes clear responsibility for AI projects, you lose momentum. Managers may become skeptical, and employees feel frustrated with tools that do not fit their needs. This lack of accountability can stop your AI success before it starts.

The Cost of Failure

The cost of failed AI projects is high. Companies often invest millions, but see little measurable ROI. The financial loss is only part of the problem. You also face organizational friction, employee frustration, and leadership hesitance to fund future AI initiatives.

| Financial Investment Range | Measurable Value Realized | Value Destruction Rate |
|---|---|---|
| $50-100 million | Less than $5 million | 90% |

When you do not see measurable impact, your teams lose trust in new technology. Leaders expect quick results, but real business value can take months or even years to appear. If you focus only on participation rates or completion metrics, you miss the true value of AI. You need to measure time saved, errors reduced, and workflow improvements to see the real impact.

Tip: Shift your focus from how many people complete AI training to how much time and value your teams gain in their daily work. This approach helps you track measurable ROI and build a stronger case for future AI investment.

AI-driven training works best when you connect it to real business problems and track measurable business outcomes. When you align your initiatives with business goals and measure the right metrics, you unlock the true value of AI and set your organization up for long-term success.
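The episode transcript states the measurement model in words: time saved multiplied by hourly cost and adoption rate, plus the value of fewer errors and faster decisions, minus the upskilling investment. A minimal Python sketch of that arithmetic follows. The function name, the parameter names, and the `headcount` multiplier are illustrative assumptions. The 9 hours echoes the monthly figure mentioned in the transcript, and every other number in the example is hypothetical. The result is a net dollar value for one measurement period, not a percentage.

```python
def net_ai_value(
    hours_saved_per_person: float,
    hourly_cost: float,
    headcount: int,
    adoption_rate: float,
    error_reduction_value: float,
    faster_decision_value: float,
    upskilling_investment: float,
) -> float:
    """Net value of an AI upskilling effort over one measurement period.

    All inputs are values the reader supplies from their own baseline
    and post-training measurements (assumed, not from the episode).
    """
    if not 0.0 <= adoption_rate <= 1.0:
        raise ValueError("adoption_rate must be between 0 and 1")
    # Value of reclaimed time, scaled by the share of people actually using AI.
    time_value = hours_saved_per_person * hourly_cost * headcount * adoption_rate
    return (
        time_value
        + error_reduction_value
        + faster_decision_value
        - upskilling_investment
    )


# Hypothetical example: 100 people, 9 hours saved each, $60/hour, 50% adoption.
example = net_ai_value(
    hours_saved_per_person=9,
    hourly_cost=60,
    headcount=100,
    adoption_rate=0.5,
    error_reduction_value=5_000,
    faster_decision_value=3_000,
    upskilling_investment=20_000,
)
print(example)
```

Keeping each term as a separate input makes it easy to see which part of the value depends on measured time savings and which part depends on softer estimates.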

No Business Outcome Link in AI Training

Why Alignment Drives ROI

You want your investment in AI to deliver real business value. The key is to connect your AI training directly to business outcomes. When you align your training with the problems your teams face every day, you see a clear impact on productivity and efficiency. Many organizations miss this step. They focus on generic skills or technology features, not on the actual business impact.

A strong link between training and business goals helps you measure the true value of your efforts. You can track how much time your team saves, how many errors they avoid, and how quickly they complete important tasks. The AI Profit Engine uses this approach to show the real impact of AI adoption.

| Value Pillar | KPIs | Conversion to Business Impact |
|---|---|---|
| Time | Time-to-skill, time-to-productivity | Translate days saved into dollar values |
| Capacity | Throughput per FTE | Measure efficiency gains |
| Capability | Knowledge-check gains | Assess skill improvements |
| Risk | On-time compliance | Evaluate adherence to standards |

You can also use a simple process to connect learning to business results:

- Track learning activity versus business outcomes.

- Use controlled pilots to establish causality.

- Implement a baseline for comparison.

When you measure these factors, you see how your solution creates value for your business.
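One way to combine the three steps above is a difference-in-differences comparison: measure a task for a pilot group and a control group before and after training, then subtract the control group's change from the pilot group's change. The sketch below assumes task durations in minutes. The function name and the sample numbers are hypothetical, and with groups this small the result is only a rough signal.

```python
from statistics import mean


def diff_in_diff(pilot_before, pilot_after, control_before, control_after):
    """Estimated training effect on a metric, net of background change.

    Each argument is a list of measurements (here: minutes per task).
    A negative result means the pilot group got faster than the control
    group's trend alone would predict.
    """
    pilot_change = mean(pilot_after) - mean(pilot_before)
    control_change = mean(control_after) - mean(control_before)
    return pilot_change - control_change


# Hypothetical minutes per monthly report, before and after training.
effect = diff_in_diff(
    pilot_before=[60, 62, 58],
    pilot_after=[40, 42, 38],
    control_before=[61, 59, 60],
    control_after=[58, 56, 57],
)
print(effect)
```

The baseline is what makes the comparison possible: without the "before" measurements, neither group's change can be computed.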

Fix: Start with High-Value Problems

You need to start with the right problem. Focus your AI training on high-value business challenges. This approach leads to faster and more visible impact. For example, finance teams using The AI Profit Engine have reported a 70% to 80% reduction in manual data entry time. Error rates in reconciliation dropped by 90%. Operations teams saw faster time-to-diagnosis and fewer workflow bottlenecks.

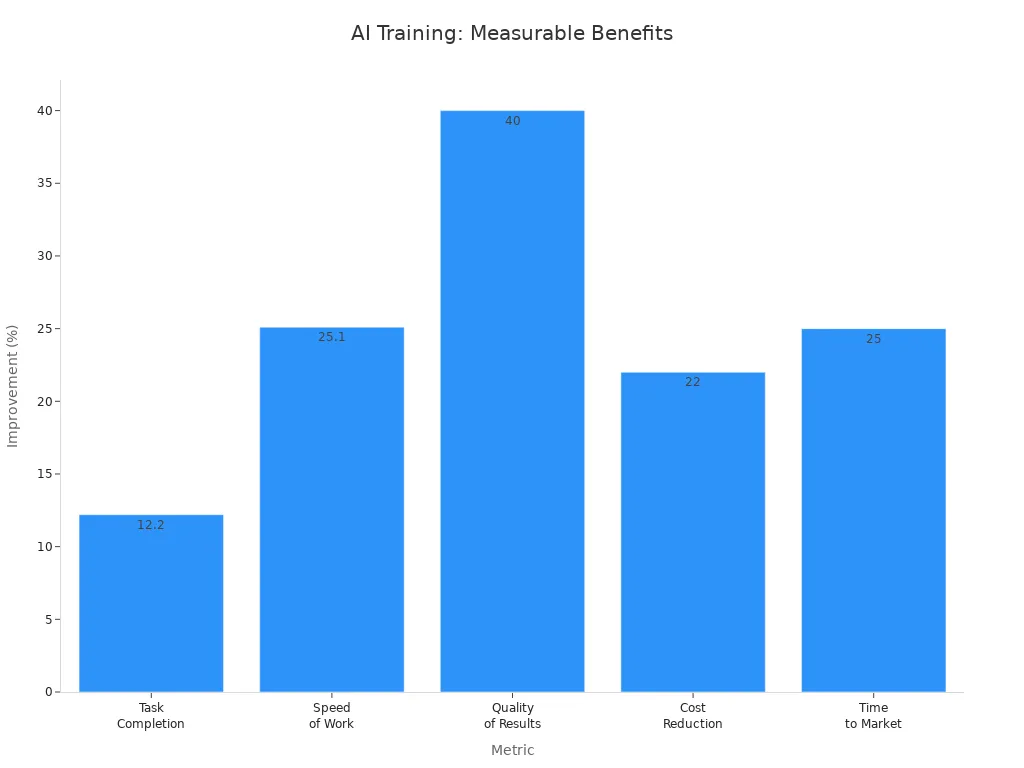

| Metric | Improvement |
|---|---|
| Task Completion | 12.2% more tasks completed |
| Speed of Work | 25.1% faster |
| Quality of Results | 40% higher quality |
| Cost Reduction | 22% reduction in cost per asset |
| Time to Market | 25% faster time to market |

“The return on investment for data and AI training programs is ultimately measured via productivity. You typically need a full year of data to determine effectiveness, and the real ROI can be measured over 12 to 24 months.” – Dmitri Adler, Co-Founder of Data Society

Set Measurable Objectives

Set clear, measurable objectives before you launch your training. Decide which business metrics matter most. These could include time saved, error reduction, or workflow acceleration. Establish a baseline so you can compare results after the training.

Engage Stakeholders Early

Bring key stakeholders into the process from the start. When leaders and team members help define the goals, you get better buy-in and faster results. Stakeholders can help identify the most valuable problems to solve and ensure the solution fits real business needs.

By linking your AI training to business outcomes, you create a direct path to value. You see the impact in time saved, fewer errors, and smoother workflows. This approach turns your investment into measurable business results.

Poor Integration with Workflows

You want your AI investment to drive real business value, but poor integration with daily workflows often blocks your progress. Many organizations treat AI training as a one-time event, separate from the actual work employees do. This approach creates gaps between learning and doing, which reduces the impact of your efforts.

Siloed AI Training Symptoms

You may notice several warning signs when AI training happens in isolation from core business processes:

- Inconsistent customer experiences can result when departments send mixed messages.

- Misaligned goals often appear as teams focus on their own targets instead of the company’s overall success.

- Low employee morale grows when staff feel disconnected and communication breaks down.

- Inability to react quickly to changes becomes a problem when data and insights stay trapped in silos.

These symptoms show that your solution is not reaching its full potential. You need to break down these barriers to unlock the true value of AI adoption.

Fix: Embed AI in Daily Work

You can achieve maximum impact by weaving AI into the fabric of everyday tasks. Companies that embed AI in daily work see faster returns and stronger business outcomes. For example, Volkswagen and other leading organizations have reported that this approach drives ROI much more effectively. Metrics like deflection rate and time returned show that AI can deliver value in weeks, not months. McKinsey found that embedding generative AI in service operations reduced agents’ knowledge-lookup handle time by 65%. Most companies reinvest productivity gains into upskilling and expanding AI capabilities, rather than cutting headcount.

Role-Based Training

You get better results when you tailor AI training to specific job roles. Research shows that role-based training leads to a 50% improvement in outcomes. Employees engage more and adopt new tools faster when the learning matches their daily responsibilities. Companies that customize AI learning paths see greater impact on workflow efficiency and business results. The AI Profit Engine uses this approach to build role-based capabilities that fit your unique needs.

Adoption Over Awareness

You should focus on adoption, not just awareness. Teaching employees about AI is not enough. You need to help them use AI tools in real scenarios. Companies that measure innovation ROI with clear metrics see better results. In fact, 68% of organizations with strong tracking report higher returns. Responsible AI initiatives also enhance returns and efficiency, with 58% of organizations seeing positive impact. Growth-focused companies are more likely to track AI value rigorously.

Tip: Make AI part of your team’s daily routine. Track how it changes the way people work, not just how many complete the training.

When you embed AI in daily workflows, you turn your investment into measurable business value. You see faster impact, higher engagement, and a stronger case for future AI adoption.

Unclear Ownership in AI Investment

Why Ownership Matters for ROI

You want your AI training to deliver real business value. Clear ownership is essential for this goal. When no one takes responsibility for AI projects, you see confusion and slow progress. Teams may not know who should make decisions or track results. This lack of clarity can block your AI adoption and reduce the impact of your investment.

Cross-functional teams help solve this problem. They bring together people from different parts of your business. Each member has a clear role and responsibility. This structure improves accountability and ensures that everyone works toward the same strategy. You see faster problem-solving and better results when teams share goals and metrics.

| Role | Responsibility |
|---|---|
| AI leaders | Responsible for AI/ML strategy and roadmap within the organization. |
| AI builders | Implement AI strategy to address business problems using machine learning. |
| Business executives | Solve business problems and drive revenue or reduce costs with AI. |
| IT leaders | Focus on the technology infrastructure, including data and analytics. |

When you align these roles, you create a strong foundation for AI projects. Shared metrics drive collective outcomes. This approach encourages innovation and efficient use of resources. You see a greater impact on your business and a clear path to value.

- Cross-functional teams enhance collaboration among diverse roles.

- They align goals across departments, fostering a sense of shared responsibility.

- Shared metrics drive collective outcomes, improving accountability.

- This structure encourages innovation and efficient resource use, leading to faster problem-solving.

Fix: Build Cross-Functional Teams

You can boost the impact of your AI investment by building cross-functional teams. Start by bringing together leaders from business, IT, and data science. Make sure each person understands their role and how it connects to the overall strategy. This team should meet regularly to review progress and adjust plans as needed.

Leadership Buy-In

Leadership buy-in is critical. When leaders support AI projects, teams feel empowered to act. Leaders set the tone for collaboration and help remove barriers. They also ensure that incentives match the goals of the project. Well-structured incentives improve decision outcomes and performance. Keep your incentive structures simple and easy to understand. Offer different levels of rewards to engage all partners. Communicate clearly and often to keep everyone informed.

Accountability Structures

Accountability structures keep your team focused. Use shared metrics to track progress and measure value. Regularly evaluate and adjust your incentive programs based on performance data. You can use AI to analyze historical data and optimize your incentive structures. Partner management technology can help streamline these processes and improve efficiency.

Tip: Align incentives with partner goals to ensure mutual benefit. This approach builds trust and drives better results for your business.

When you build cross-functional teams with clear ownership, you unlock the full value of your AI training. You see faster impact, stronger collaboration, and measurable business results.

Lack of Iteration in AI Projects

One-and-Done Training Fails

You may think that a single round of training will set your team up for success. In reality, most AI projects fail when you treat training as a one-time event. AI changes quickly. Your business needs and workflows shift over time. If you do not update your approach, your solution will lose its value. Teams often see early gains, but these fade without ongoing attention. You need to keep learning and adapting to maintain your impact.

Many organizations launch AI projects with excitement, but they do not plan for what comes next. They miss out on valuable feedback from users. They do not track how well the solution fits real business needs. As a result, the impact drops, and the project stalls. You must avoid this trap by building iteration into your strategy from the start.

Fix: Iterate with Feedback

You can boost your results by making iteration a core part of your AI approach. This means you gather feedback, monitor performance, and make changes as needed. Ongoing monitoring helps you spot problems early and keep your solution aligned with your business goals.

- Monitor key performance indicators during deployment.

- Reassess your solution regularly to catch performance drift.

- Integrate user feedback into your evaluation process.

You should also set up regular check-ins. At each interval, confirm that your deployment matches the approved use case. Make sure your data and workflows have not changed. Check that your AI solution still delivers the expected benefits. Watch for new risks or compliance needs.
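A regular check-in can be as simple as comparing the latest KPI reading with the baseline recorded at approval time and flagging any slide beyond a tolerance. The sketch below is one hypothetical way to do that. The `KpiCheck` name, the 10% default tolerance, and the sample values are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class KpiCheck:
    name: str
    baseline: float          # value recorded when the use case was approved
    current: float           # latest measured value
    higher_is_better: bool = True
    tolerance: float = 0.10  # assumed: flag a relative worsening above 10%

    def has_drifted(self) -> bool:
        """Return True when the KPI is worse than baseline by more than tolerance."""
        if self.baseline == 0:
            raise ValueError("baseline must be non-zero")
        change = (self.current - self.baseline) / abs(self.baseline)
        # For "lower is better" metrics, an increase counts as worsening.
        worsening = -change if self.higher_is_better else change
        return worsening > self.tolerance


# Hypothetical readings at one check-in.
checks = [
    KpiCheck("answer accuracy", baseline=0.95, current=0.82),
    KpiCheck("minutes per ticket", baseline=12.0, current=12.5, higher_is_better=False),
]
flagged = [c.name for c in checks if c.has_drifted()]
print(flagged)
```

A flagged KPI is a prompt for review, not a verdict: the cause may be changed data, a changed workflow, or a new risk.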

Human-in-the-Loop

Adding human expertise at key points in your process makes your AI more reliable. Human-in-the-loop methods balance efficiency with expert quality control. They help you ensure that outputs match your business priorities and meet regulatory standards. Experts can spot issues that automated systems may miss. This approach leads to better accuracy, faster processing, and higher savings.

| Metric | Result |
|---|---|
| Accuracy | Increased to 99.9% |
| Processing Speed | Increased by up to 5x |
| Annual Savings | Thousands saved |
| Compliance | Enhanced compliance |
| Productivity | Focus on complex tasks |
| Competitive Edge | Clear advantage gained |

You gain a clear advantage when you combine AI with human judgment. Your team can focus on complex tasks while the system handles routine work.

Continuous Improvement

Continuous improvement keeps your AI projects on track. You need to review results, gather feedback, and make small changes often. This process helps you adapt to new business needs and changing conditions. It also ensures your strategy stays effective over time.

- Track performance indicators.

- Collect feedback from users.

- Adjust your solution based on what you learn.

Tip: Make iteration part of your culture. Encourage your team to share feedback and suggest improvements. This habit will help you get the most out of your AI investment and keep your business moving forward.

Data Issues Hurt Return on Investment

Data Quality and Governance

You want your AI projects to deliver strong business results. Data quality and governance play a critical role in this process. When you overlook these areas, you risk silent failures that can damage trust, security, or compliance without warning. Many organizations struggle with inaccurate, incomplete, or poorly labeled data. You may also find data trapped in silos or see inconsistencies across systems. These problems often go unnoticed until they cause real harm.

- Silent failures can erode trust and compliance.

- Inaccurate or incomplete data leads to unreliable AI outputs.

- Data silos and inconsistencies slow down your business.

Nearly half of chief operations officers say data quality is a top concern. Poor data quality can cost organizations over five million dollars each year. Stale, duplicated, or incorrect information not only reduces the impact of your AI but also undermines user trust. You need to address these issues early to protect your investment.

Note: Poor data quality can lead to ineffective AI outputs and lost business opportunities.

Fix: Data Readiness Checklist

You can boost your AI ROI by following a data readiness checklist. Start by investing in clean, accessible, and well-governed data. Make sure your data sets are accurate and up to date. Align your workflows with compliance standards to protect privacy and security. Integrate security measures like zero-trust principles and data loss prevention tools. These steps help you avoid costly mistakes and keep your business safe.

At the heart of every successful AI project lies high-quality data. You need strong data governance frameworks that enforce standards, automate cleansing, and ensure smooth integration across your systems. This foundation supports accurate predictions and reliable insights.

Infrastructure for Scale

Your business needs infrastructure that can handle growth. Build systems that scale with your data needs. Automate data cleansing and validation to keep your information accurate as you expand. Seamless integration across platforms ensures your AI continues to deliver value as your business grows.

Shadow AI Risks

Shadow AI introduces hidden risks that can hurt your ROI. These unsanctioned tools often bypass security and governance controls. The cost of a data breach from shadow AI can reach $4.63 million per incident, compared to $3.96 million for standard breaches. Shadow AI accounts for 20% of all data breaches, with detection taking up to 247 days and remediation lasting 94 days.

| Aspect | Standard Data Breach Cost | Shadow AI Data Breach Cost | Cost Premium |
|---|---|---|---|
| Average Cost per Incident | $3.96 million | $4.63 million | $670,000 |
| Percentage of Total Data Breaches | 13% | 20% | N/A |
| Average Detection Time | N/A | 247 days | N/A |
| Median Remediation Time | N/A | 94 days | N/A |

You can reduce these risks by enforcing strict governance and monitoring for unauthorized AI use. Protect your business by making data readiness a priority.
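The cost premium in the table is the difference between the two average costs per incident. The relative premium below is derived from those same two figures and is not stated in the source:

```latex
\[
\$4.63\,\text{million} - \$3.96\,\text{million} = \$0.67\,\text{million} = \$670{,}000,
\qquad
\frac{0.67}{3.96} \approx 0.169 \approx 17\%.
\]
```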

Tip: Regularly review your data processes and security measures to maintain trust and maximize the impact of your AI investments.

Diagnose Your AI Training ROI

You want to know if your AI training delivers real value. A self-assessment helps you spot gaps and measure progress. Many AI projects fail because teams do not check their starting point or track changes over time. You can use a simple checklist to see where your business stands.

Self-Assessment Checklist

Start by reviewing your current approach. Use these tools to get a clear picture of your strengths and weaknesses:

| Diagnostic Tool | Purpose |
|---|---|
| Self-Assessment | Establishes an awareness baseline of current AI skills within the organization. |
| Performance-Based Testing | Measures actual capabilities through practical tasks. |
| Manager Assessment | Evaluates applied performance from a managerial perspective. |
| Work Product Analysis | Validates production quality and effectiveness of AI skills in real work outputs. |

Ask yourself:

- Do your teams use AI in their daily work?

- Can you measure the quality of their output?

- Does your data support accurate and reliable results?

- Are managers confident in the impact of AI on business outcomes?

If you answer "no" to any question, you have found an area for improvement.
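The checklist can be kept as a small script so the gaps are recorded the same way at every review. This is a minimal sketch: the question wording is shortened from the list above, and the answers are hypothetical.

```python
# The four questions from the checklist above; answers are hypothetical.
answers = {
    "Teams use AI in their daily work": True,
    "Output quality can be measured": False,
    "Data supports accurate and reliable results": True,
    "Managers are confident in AI's business impact": False,
}

# Any "no" answer marks an area for improvement.
gaps = [question for question, yes in answers.items() if not yes]

for gap in gaps:
    print(f"Needs improvement: {gap}")
```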

Using Results for Improvement

You can turn your findings into action. Focus on the areas that matter most for your business. Use your results to guide your next steps and boost your ROI.

Prioritize Fixes

Not all problems need fixing at once. Start with the biggest gaps that block your progress. Research shows that people who set clear priorities based on self-assessment reach their goals faster. They use their resources wisely and plan for the best time to act.

| Evidence Description | Findings |
|---|---|
| Effective self-regulators prioritize difficult goals based on self-assessment of resources. | They believe their resources are greater earlier in the day, influencing their planning behavior. |
| Study 1 shows that plans to exercise are influenced by self-regulatory effectiveness and perceived difficulty. | Higher self-regulatory effectiveness leads to earlier exercise plans for those who find it difficult. |
| Positive correlation between self-regulatory effectiveness and beliefs about resource availability. | Effectiveness is associated with the belief that resources are greater at the beginning of the day (r = .21, p = .04). |

You can use this approach for your AI projects. Fix the most important issues first. This strategy helps you see faster impact and keeps your team motivated.

Track ROI Gains

You need to measure your progress. Use these methods to track gains after you make improvements:

- Adoption & Usage Metrics: Count how many people use AI tools and how often.

- Productivity & Efficiency Metrics: Measure how fast tasks get done and how many errors you avoid.

- Business Outcome Metrics: Check if you save money, grow revenue, or improve customer satisfaction.

You can also look at:

- Adoption rate: How many users rely on AI for decisions.

- Decision quality: How well AI improves choices.

- Time to decision: How quickly teams act with AI insights.

- User confidence: How much trust users have in AI answers.

- Business outcomes: The overall effect on your business.
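As a rough illustration, the adoption, time-to-decision, and confidence measures can be computed from a simple decision log. The record layout below (`used_ai`, `hours_to_decision`, `confidence_1_to_5`) is a hypothetical schema, and the sample rows are made up. The with/without comparison is descriptive only and does not by itself show that AI caused the difference.

```python
from statistics import mean

# Hypothetical log: one row per decision made by a team member.
decisions = [
    {"user": "ana", "used_ai": True, "hours_to_decision": 4.0, "confidence_1_to_5": 4},
    {"user": "ben", "used_ai": False, "hours_to_decision": 10.0, "confidence_1_to_5": 3},
    {"user": "cai", "used_ai": True, "hours_to_decision": 6.0, "confidence_1_to_5": 5},
    {"user": "ana", "used_ai": True, "hours_to_decision": 2.0, "confidence_1_to_5": 4},
]

# Adoption rate: share of distinct users who relied on AI at least once.
users = {d["user"] for d in decisions}
ai_users = {d["user"] for d in decisions if d["used_ai"]}
adoption_rate = len(ai_users) / len(users)

with_ai = [d for d in decisions if d["used_ai"]]
without_ai = [d for d in decisions if not d["used_ai"]]

# Time to decision and user confidence, averaged per group.
time_with_ai = mean(d["hours_to_decision"] for d in with_ai)
time_without_ai = mean(d["hours_to_decision"] for d in without_ai)
avg_confidence = mean(d["confidence_1_to_5"] for d in with_ai)

print(f"Adoption rate: {adoption_rate:.0%}")
print(f"Time to decision: {time_with_ai:.1f} h with AI, {time_without_ai:.1f} h without")
print(f"Average confidence in AI-assisted decisions: {avg_confidence:.1f} / 5")
```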

Productivity is the main way to judge success. Real ROI takes time to measure. You may need 12 to 24 months to see the full impact. Stay patient and keep tracking your results. This approach helps you avoid poor data quality and ensures your data supports your goals.

Tip: Review your data and results often. Adjust your strategy as you learn. This habit will help your AI projects deliver lasting value for your business.

You have seen why many AI training programs miss the mark. When you start with a solution instead of a clear problem, you lose impact. You need to embed AI into your business workflows and focus on real outcomes.

- Integrate AI into daily decisions for lasting impact.

- Validate results by solving real business problems.

| Benefit Type | Description |
|---|---|
| Growth Enablement | AI unlocks new markets and capabilities for your business. |
| Productivity Boost | Human talent and AI together raise accuracy and profitability. |

Use the self-assessment tool to find gaps and take action. Start now to transform your business and achieve measurable impact.


1

00:00:00,000 --> 00:00:04,620

Companies buy AI licenses, roll out training, celebrate completion rates, and then watch

2

00:00:04,620 --> 00:00:09,260

the same teams copy data by hand, chase status updates in email and rebuild reports every

3

00:00:09,260 --> 00:00:10,260

month.

4

00:00:10,260 --> 00:00:11,260

That's the waste pattern.

5

00:00:11,260 --> 00:00:12,260

The tool is there.

6

00:00:12,260 --> 00:00:15,860

The spend is real, but the work barely moves because the old model tracks exposure, not

7

00:00:15,860 --> 00:00:16,860

change.

8

00:00:16,860 --> 00:00:19,660

A badge doesn't tell you if a finance analyst closed faster.

9

00:00:19,660 --> 00:00:23,100

Attendance doesn't tell you if a project manager pushed a blocked decision through in half

10

00:00:23,100 --> 00:00:24,100

the time.

11

00:00:24,100 --> 00:00:29,060

And if you can't show reclaimed minutes, fewer errors or faster action, AI stays a cost

12

00:00:29,060 --> 00:00:29,980

line.

13

00:00:29,980 --> 00:00:32,540

In this video, I want to make the measurement model simple.

14

00:00:32,540 --> 00:00:36,700

Time saved, speed gained, errors reduced. Not in theory, inside real tasks.

15

00:00:36,700 --> 00:00:39,300

That's where ROI starts to show up.

16

00:00:39,300 --> 00:00:40,660

The old model breaks.

17

00:00:40,660 --> 00:00:43,700

Why certification metrics don't show ROI.

18

00:00:43,700 --> 00:00:47,140

Most AI training programs still follow the compliance model.

19

00:00:47,140 --> 00:00:50,580

People attend a session, finish a module, maybe pass a quiz, and then leadership gets

20

00:00:50,580 --> 00:00:52,660

a dashboard full of neat numbers.

21

00:00:52,660 --> 00:00:56,460

Completion rate, participation, self-reported confidence. It looks tidy, and that's exactly

22

00:00:56,460 --> 00:01:01,540

the problem, because tidy metrics can hide messy reality, especially when the work itself

23

00:01:01,540 --> 00:01:04,620

hasn't changed at all after the training ends.

24

00:01:04,620 --> 00:01:09,120

What typically happens is simple: a team learns what a prompt is, sees a few generic demos,

25

00:01:09,120 --> 00:01:12,740

and then goes back to Outlook, Excel, Teams, and reporting workflows that still run the

26

00:01:12,740 --> 00:01:13,740

old way.

27

00:01:13,740 --> 00:01:17,700

The person may know more about AI than before, but the monthly report still takes too long.

28

00:01:17,700 --> 00:01:18,820

The handoff still breaks.

29

00:01:18,820 --> 00:01:22,340

The follow-up still depends on someone remembering to send it, so the gap isn't knowledge

30

00:01:22,340 --> 00:01:24,300

alone, it's task behavior.

31

00:01:24,300 --> 00:01:27,240

And one level deeper, this is where most firms lose the plot.

32

00:01:27,240 --> 00:01:30,100

They measure usage noise instead of work compression.

33

00:01:30,100 --> 00:01:34,540

Research in your source set points to a huge spread between typical users and power users,

34

00:01:34,540 --> 00:01:36,380

around 4-5 times in productivity.

35

00:01:36,380 --> 00:01:38,860

That means average adoption numbers tell you almost nothing.

36

00:01:38,860 --> 00:01:42,820

A company can say, "People are using AI," and still miss the fact that only a small group

37

00:01:42,820 --> 00:01:45,220

is turning that usage into faster output.

38

00:01:45,220 --> 00:01:48,020

That's why completion rates feel safe, but don't prove value.

39

00:01:48,020 --> 00:01:50,060

They tell you somebody finished training.

40

00:01:50,060 --> 00:01:54,180

They do not tell you whether a reporting pack moved from 20 minutes to 45 seconds for part

41

00:01:54,180 --> 00:01:57,140

of the process, which is one of the finance benchmarks in the research.

42

00:01:57,140 --> 00:02:01,980

They don't tell you whether employees are reclaiming around 9 hours per month on average,

43

00:02:01,980 --> 00:02:04,500

which shows up across multiple Copilot studies.

44

00:02:04,500 --> 00:02:08,580

And they definitely don't tell you whether a decision now moves 8 times faster, or whether

45

00:02:08,580 --> 00:02:11,700

time to insight dropped by 88% in a measured implementation.

46

00:02:11,700 --> 00:02:12,940

Those are outcome signals.

47

00:02:12,940 --> 00:02:13,940

Everything else is setup.

48

00:02:13,940 --> 00:02:15,180

There's another break in the model.

49

00:02:15,180 --> 00:02:19,540

A lot of pilots never establish a real baseline, so they can't prove change later.

50

00:02:19,540 --> 00:02:25,300

Research in your material shows 95% of enterprise AI pilots delivered no measurable P&L impact,

51

00:02:25,300 --> 00:02:30,100

not because the tools failed on their own, but because organizations skipped the hard parts.

52

00:02:30,100 --> 00:02:33,300

Workflow redesign, ownership, and pre/post measurement.

53

00:02:33,300 --> 00:02:37,180

If you don't know how long the task took before upskilling, you can't defend any claim

54

00:02:37,180 --> 00:02:38,500

after rollout.

55

00:02:38,500 --> 00:02:41,180

This clicked for me when I looked at how leaders talk about adoption.

56

00:02:41,180 --> 00:02:44,300

They often ask, "How many people used Copilot this month?"

57

00:02:44,300 --> 00:02:46,660

That sounds reasonable, but it's the wrong question.

58

00:02:46,660 --> 00:02:50,740

The better question is, which task got shorter, cleaner, or faster to decide?

59

00:02:50,740 --> 00:02:53,340

Because using AI more often is not the same as compressing work.

60

00:02:53,340 --> 00:02:57,540

A person can prompt all day and still create rework, delays, and weak outputs that someone

61

00:02:57,540 --> 00:02:58,540

else has to fix.

62

00:02:58,540 --> 00:03:02,660

So training has to move out of the generic literacy bucket and into role-based capability.

63

00:03:02,660 --> 00:03:04,260

Not "learn AI."

64

00:03:04,260 --> 00:03:06,740

Learn how to build a variance explanation faster.

65

00:03:06,740 --> 00:03:09,420

Learn how to turn meeting noise into action-ready follow-up.

66

00:03:09,420 --> 00:03:12,220

Learn how to cut ticket handling time without hurting quality.

67

00:03:12,220 --> 00:03:16,180

Once you drop the badge logic and move into workflow logic, ROI gets a lot easier to

68

00:03:16,180 --> 00:03:17,180

see.

69

00:03:17,180 --> 00:03:21,180

The measurement model: the reclaimed-minute framework. So once you stop measuring training

70

00:03:21,180 --> 00:03:24,300

as attendance, you need a model that ties behavior to money.

71

00:03:24,300 --> 00:03:25,300

Keep it plain.

72

00:03:25,300 --> 00:03:30,260

ROI equals time saved, multiplied by hourly cost and adoption rate. Then add the value of

73

00:03:30,260 --> 00:03:34,460

fewer errors and faster decisions, and subtract the upskilling investment.

74

00:03:34,460 --> 00:03:37,420

That's the whole structure. Not perfect, but usable.
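
As a rough sketch, that structure can be written in a few lines of Python. The function name `training_roi`, its parameters, and every figure in the usage lines are illustrative assumptions, not numbers from the episode. Adoption rate is treated as a multiplier on reclaimed hours, the estimated value of fewer errors and faster decisions is added, and the upskilling investment is subtracted.

```python
def training_roi(hours_saved_per_month, hourly_cost, adoption_rate,
                 error_value=0.0, decision_value=0.0, upskilling_cost=0.0):
    """Net monthly value of upskilling for one workflow (illustrative only)."""
    if not 0.0 <= adoption_rate <= 1.0:
        raise ValueError("adoption_rate must be between 0 and 1")
    # Reclaimed labor value, scaled by the share of people who actually
    # use the trained behavior in the target task.
    time_value = hours_saved_per_month * hourly_cost * adoption_rate
    # Add the softer gains, then subtract what the upskilling cost.
    return time_value + error_value + decision_value - upskilling_cost


# Hypothetical inputs: 40 hours saved per month, an hourly cost of 50,
# and half the team showing the trained behavior.
example = training_roi(40, 50, 0.5, error_value=300, decision_value=200,
                       upskilling_cost=1000)
print(example)
```

Keeping error value and decision value as separate inputs makes it obvious which part of the claim rests on measured time and which part rests on an estimate.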

75

00:03:37,420 --> 00:03:41,620

And that matters more because most AI ROI discussions collapse when the math gets too abstract

76

00:03:41,620 --> 00:03:43,100

for managers to trust.

77

00:03:43,100 --> 00:03:46,740

Put even simpler: you're not buying AI, you're buying back work hours, and you're trying

78

00:03:46,740 --> 00:03:49,820

to move those hours into work that matters more.

79

00:03:49,820 --> 00:03:54,060

Analysis, planning, escalation, customer response, better judgment.

80

00:03:54,060 --> 00:03:57,300

If the hours disappear into slack time, the value weakens.

81

00:03:57,300 --> 00:04:01,220

If those hours return to the business as faster output or better decisions, the value shows

82

00:04:01,220 --> 00:04:02,220

up.

83

00:04:02,220 --> 00:04:04,900

Start with reclaimed time because it's the cleanest signal.

84

00:04:04,900 --> 00:04:09,220

Measure hours saved per full-time employee per month, but only inside named workflows.

85

00:04:09,220 --> 00:04:10,820

Not "AI helped a bit."

86

00:04:10,820 --> 00:04:11,820

That's useless.

87

00:04:11,820 --> 00:04:12,820

Track one task.

88

00:04:12,820 --> 00:04:15,860

Cleaning data in Excel. Drafting a monthly variance note.

89

00:04:15,860 --> 00:04:17,860

Building a meeting summary and action list.

90

00:04:17,860 --> 00:04:21,860

Then compare manual time against AI-assisted time under the same conditions.

91

00:04:21,860 --> 00:04:23,980

Same role, same task, same level of complexity.

92

00:04:23,980 --> 00:04:25,700

That's how you avoid fantasy math.

93

00:04:25,700 --> 00:04:29,300

The thing most people miss is that averages can distort the picture fast.

94

00:04:29,300 --> 00:04:32,500

One person might save 15 minutes, another might save 2 hours.

95

00:04:32,500 --> 00:04:35,660

So don't begin with broad assumptions across the whole company.

96

00:04:35,660 --> 00:04:38,020

Begin at workflow level, then roll upward.

97

00:04:38,020 --> 00:04:42,340

If a finance analyst saves even a small amount on a task repeated many times each month,

98

00:04:42,340 --> 00:04:44,020

the reclaimed hours compound.
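
One way to sketch the workflow-level roll-up in Python: take paired timings for a single named task, use the median saving so one outlier does not distort the picture, and scale by how often the task repeats. The function name `monthly_hours_reclaimed`, the workflow names, and all timings below are hypothetical, chosen only to make the arithmetic visible.

```python
from statistics import median


def monthly_hours_reclaimed(manual_minutes, assisted_minutes, runs_per_month):
    """Median per-run saving for one workflow, scaled to hours per month."""
    # Timings are paired: same role, same task, same level of complexity.
    savings = [m - a for m, a in zip(manual_minutes, assisted_minutes)]
    return median(savings) * runs_per_month / 60


# Hypothetical timings in minutes for two named workflows.
workflows = {
    "variance_note": monthly_hours_reclaimed([60, 90, 75], [30, 45, 60], 20),
    "meeting_summary": monthly_hours_reclaimed([30, 30, 40], [10, 15, 10], 12),
}

# Roll upward only after each workflow has its own measured number.
total_hours = sum(workflows.values())
print(workflows, total_hours)
```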

99

00:04:44,020 --> 00:04:47,700

Research in your source set shows that even modest monthly savings can justify the license

100

00:04:47,700 --> 00:04:50,780

fast when the employee's hourly value is high enough.

101

00:04:50,780 --> 00:04:52,220

Then add decision velocity.

102

00:04:52,220 --> 00:04:55,900

This one matters because a lot of AI value doesn't show up as simple labor reduction.

103

00:04:55,900 --> 00:04:58,900

It shows up as less waiting between issue and action.

104

00:04:58,900 --> 00:05:02,460

Measure the cycle time from problem identified to next decision taken.

105

00:05:02,460 --> 00:05:04,860

In some teams, that means from meeting to follow-up.

106

00:05:04,860 --> 00:05:07,860

In others, from variance flagged to management response.

107

00:05:07,860 --> 00:05:12,820

Because this is easy to fake with vague stories, use pre-training and post-training timings.

108

00:05:12,820 --> 00:05:15,660

Same team, same type of decision, same approval path.
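
A minimal sketch of that pre-training versus post-training comparison, assuming you log two timestamps per decision: when the problem was identified and when the next decision was taken. The helper `median_cycle_hours` and the sample dates are hypothetical.

```python
from datetime import datetime
from statistics import median


def median_cycle_hours(events):
    """Median hours from 'problem identified' to 'next decision taken'."""
    return median((decided - identified).total_seconds() / 3600
                  for identified, decided in events)


# Hypothetical log: same team, same type of decision, same approval path.
pre_training = [
    (datetime(2024, 1, 8, 9), datetime(2024, 1, 10, 9)),
    (datetime(2024, 1, 15, 9), datetime(2024, 1, 16, 9)),
    (datetime(2024, 1, 22, 9), datetime(2024, 1, 25, 9)),
]
post_training = [
    (datetime(2024, 3, 4, 9), datetime(2024, 3, 4, 21)),
    (datetime(2024, 3, 11, 9), datetime(2024, 3, 12, 9)),
    (datetime(2024, 3, 18, 9), datetime(2024, 3, 18, 15)),
]

before = median_cycle_hours(pre_training)
after = median_cycle_hours(post_training)
reduction = 1 - after / before
print(before, after, reduction)
```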

109

00:05:15,660 --> 00:05:17,500

You've got support for that in the research.

110

00:05:17,500 --> 00:05:22,100

Some organizations reported decisions eight times faster and time to insight down by 88%

111

00:05:22,100 --> 00:05:23,260

after AI adoption.

112

00:05:23,260 --> 00:05:26,620

Now you shouldn't throw those numbers around as universal promises because they depend

113

00:05:26,620 --> 00:05:28,340

on the workflow and the setup.

114

00:05:28,340 --> 00:05:32,140

But they do show what happens when AI reduces the friction between information gathering

115

00:05:32,140 --> 00:05:33,140

and action.

116

00:05:33,140 --> 00:05:36,940

And that's the part executives care about because slow decisions usually cost more than

117

00:05:36,940 --> 00:05:38,620

slow document creation.

118

00:05:38,620 --> 00:05:41,540

After that, choose one more metric based on the role.

119

00:05:41,540 --> 00:05:45,660

Error reduction works well in finance or compliance-heavy flows. Throughput works well in service

120

00:05:45,660 --> 00:05:46,860

or operations.

121

00:05:46,860 --> 00:05:50,340

Cost per decision can work for management processes where the same issue keeps dragging

122

00:05:50,340 --> 00:05:51,780

multiple people into the loop.

123

00:05:51,780 --> 00:05:53,540

The point isn't to create a giant dashboard.

124

00:05:53,540 --> 00:05:57,860

It's to match one quality or output measure to the workflow so speed doesn't hide bad work.

125

00:05:57,860 --> 00:06:01,300

Now, baseline discipline. This is the part almost everyone wants to skip because it feels

126

00:06:01,300 --> 00:06:02,300

boring.

127

00:06:02,300 --> 00:06:06,020

And that's exactly why so many ROI claims fall apart later.

128

00:06:06,020 --> 00:06:10,260

Before training, time the task, count the rework, and capture how long the handoff takes. Then run

129

00:06:10,260 --> 00:06:14,980

the same test after targeted upskilling. If the task changed, note it. If the volume changed, note it.

130

00:06:14,980 --> 00:06:19,340

If the data quality improved, note it. Clean comparisons protect the whole case. Adoption still

131

00:06:19,340 --> 00:06:21,940

matters, but as a multiplier, not a trophy.

132

00:06:21,940 --> 00:06:26,380

A license that sits idle drags the model down. Research in your sources shows active seat

133

00:06:26,380 --> 00:06:31,020

utilization in large enterprises averages 55%, which means a lot of paid capacity never

134

00:06:31,020 --> 00:06:32,500

enters the workflow.

135

00:06:32,500 --> 00:06:36,660

So don't ask only whether users logged in. Ask whether the trained behavior showed up repeatedly

136

00:06:36,660 --> 00:06:38,220

in the target task.

137

00:06:38,220 --> 00:06:41,740

That's the adoption signal worth tracking. And one more distinction matters.

138

00:06:41,740 --> 00:06:46,420

Gross time saved is not the same as value created. If an analyst saves an hour, that hour

139

00:06:46,420 --> 00:06:51,740

only matters when it moves into deeper analysis, faster escalation, better planning, or more

140

00:06:51,740 --> 00:06:53,260

customer work.

141

00:06:53,260 --> 00:06:58,140

So the full model is really this: reclaim the minute, then redeploy it. That's how AI stops

142

00:06:58,140 --> 00:07:02,820

looking like software spend and starts looking like operating leverage. Where ROI shows up

143

00:07:02,820 --> 00:07:08,140

first: the three roles with high ROI density. Once the model is clear, the next question is

144

00:07:08,140 --> 00:07:12,860

where to start. Not with everyone. Not with the loudest department. Start where work is repetitive,

145

00:07:12,860 --> 00:07:16,740

document-heavy, and packed with small decisions that slow everything around them. That's where

146

00:07:16,740 --> 00:07:21,460

AI upskilling compounds. You train one behavior, it shows up dozens of times each week, and

147

00:07:21,460 --> 00:07:26,100

the gain keeps stacking without adding more headcount. Finance analysts sit near the top

148

00:07:26,100 --> 00:07:30,580

of that list because so much of their week runs through structured data, recurring commentary,

149

00:07:30,580 --> 00:07:35,300

reconciliation, and reporting cycles that punish manual effort. They live in Excel. They clean

150

00:07:35,300 --> 00:07:40,140

inputs, compare versions, explain movement, and turn raw numbers into something leadership

151

00:07:40,140 --> 00:07:44,900

can act on. That's exactly the kind of work where role-based AI skills can cut drag fast,

152

00:07:44,900 --> 00:07:48,660

because the friction usually isn't deep judgment. It's the work before judgment: gathering,

153

00:07:48,660 --> 00:07:53,820

shaping, drafting, checking, rewriting. In the research, finance teams report strong savings,

154

00:07:53,820 --> 00:07:58,620

and some pilots show reporting work dropping from long manual cycles to much faster first drafts.

155

00:07:58,620 --> 00:08:02,540

That pattern matters more than any one headline number. Analysts don't stop being analysts. They

156

00:08:02,540 --> 00:08:06,620

stop spending so much time building the first version of the output. When that shift lands

157

00:08:06,620 --> 00:08:13,220

well, you see a real reclaim of capacity, often in the 30% to 50% range for targeted tasks,

158

00:08:13,220 --> 00:08:17,620

and close cycles start moving with less scramble at the end. Project managers are another dense

159

00:08:17,620 --> 00:08:22,380

ROI zone, but for a different reason. Their work gets buried under coordination load: status

160

00:08:22,380 --> 00:08:28,020

collection, meeting notes, action tracking, risk logs, follow-ups, stakeholder updates. None of

161

00:08:28,020 --> 00:08:32,220

that is trivial, and all of it creates delay when information sits in chats, decks, or half

162

00:08:32,220 --> 00:08:36,860

finished notes instead of moving into decisions. So when PMs get trained to use AI inside the

163

00:08:36,860 --> 00:08:41,900

workflow, not as a side experiment, the gain shows up in flow. A weekly prep cycle that used

164

00:08:41,900 --> 00:08:46,980

to consume six hours can drop to two when summaries, action extraction, and draft updates happen

165

00:08:46,980 --> 00:08:51,980

inside the tools they already use. More important, the output arrives in a better state for action.

166

00:08:51,980 --> 00:08:56,060

People don't need to reconstruct what happened in the meeting. They can see the risk, the owner,

167

00:08:56,060 --> 00:09:01,140

the deadline, and the next move. That's why decision cycles can move 25% to 40% faster in these

168

00:09:01,140 --> 00:09:05,060

environments. The speed doesn't come from typing quicker. It comes from reducing the lag between

169

00:09:05,060 --> 00:09:09,340

discussion and execution. Then you have frontline operations and support, where volume does most

170

00:09:09,340 --> 00:09:14,940

of the economic work. These teams handle repeated questions, repetitive communications, knowledge retrieval,

171

00:09:14,940 --> 00:09:19,020

case summaries, and constant switching between systems. Small savings matter here because they

172

00:09:19,020 --> 00:09:24,220

happen all day. If a support workflow drops from 12 minutes to seven, that gap repeats across hundreds

173

00:09:24,220 --> 00:09:29,100

or thousands of interactions, and suddenly throughput climbs without the usual answer, which is hiring

174

00:09:29,100 --> 00:09:33,900

more people. This is also where quality pressure gets real. The team can't just move faster. They have

175

00:09:33,900 --> 00:09:39,020

to stay within target, stay accurate, and keep response quality stable. That's why these roles benefit

176

00:09:39,020 --> 00:09:43,820

from tighter prompt patterns and narrower use cases early on. Draft the response. Pull the right

177

00:09:43,820 --> 00:09:49,500

knowledge. Summarize the case. Let the human confirm the edge cases. When that discipline is in place,

178

00:09:49,500 --> 00:09:56,220

throughput gains in the 20% to 40% range start to look believable, not inflated. So the selection rule

179

00:09:56,220 --> 00:10:01,100

is simple. Don't spread training evenly across the org because that feels fair. Put it where AI

180

00:10:01,100 --> 00:10:06,460

compounds output through repetition, documentation, and decision load. Finance gives you measurable compression

181

00:10:06,460 --> 00:10:11,660

in analysis prep. Project management gives you faster movement through coordination. Operations and

182

00:10:11,660 --> 00:10:16,540

support give you volume gains with clear service metrics. Different roles, same pattern: the work moves

183

00:10:16,540 --> 00:10:21,020

with less friction because information arrives closer to decision-ready. And that's the lens to keep

184

00:10:21,020 --> 00:10:24,620

using as we move into examples, because the signal gets stronger when you stop asking who

185

00:10:24,620 --> 00:10:29,900

used the tool and start asking which workflow got shorter. Proof in the workflow: three concrete before

186

00:10:29,900 --> 00:10:34,220

and after cases. So let's get specific, because this is the point where AI ROI either becomes

187

00:10:34,220 --> 00:10:39,820

defensible or turns into boardroom fog. Take finance first. Before targeted upskilling, a monthly

188

00:10:39,820 --> 00:10:44,700

reporting cycle can stretch across five days, not because the analysis itself takes five days,

189

00:10:44,700 --> 00:10:49,580

but because analysts spend too much of that time pulling numbers together, checking formatting, drafting

190

00:10:49,580 --> 00:10:54,300

commentary, and writing the same style of variance explanation again and again. The trained behavior

191

00:10:54,300 --> 00:10:59,260

changes that sequence. Instead of starting from a blank page, the analyst uses AI to draft the first

192

00:10:59,260 --> 00:11:04,460

pass of commentary, summarize movement across categories, and structure the explanation for review. The

193

00:11:04,460 --> 00:11:09,020

close doesn't disappear. The analyst still validates the numbers, but the manual drag drops and the

194

00:11:09,020 --> 00:11:13,980

cycle can move from five days to two. The real gain is what happens next. Those recovered hours don't go

195

00:11:13,980 --> 00:11:18,860

back into prettier reporting. They go into scenario planning, exception review, and faster follow-up

196

00:11:18,860 --> 00:11:23,260

with decision makers. Now look at project management. The baseline problem usually isn't lack of effort.

197

00:11:23,260 --> 00:11:28,220

It's fragmentation: notes in one place, actions in another, risks buried in a meeting nobody has time

198

00:11:28,220 --> 00:11:33,420

to rewatch. A PM can easily spend six hours a week just getting status into a usable form. After

199

00:11:33,420 --> 00:11:38,380

role-based upskilling, the workflow changes. Meeting notes get summarized in a consistent pattern. Actions

200

00:11:38,380 --> 00:11:42,860

are pulled out with owners and deadlines. Risks and blockers surface earlier because the PM isn't

201

00:11:42,860 --> 00:11:47,660

reconstructing them manually after the fact. That prep time can fall from six hours to two, and the

202

00:11:47,660 --> 00:11:52,860

bigger win is downstream. Escalations happen earlier. Stakeholders get updates that are ready to act on.

203

00:11:52,860 --> 00:11:57,900

Fewer decisions stall because the follow-up arrives late, incomplete, or unclear. What changed

204

00:11:57,900 --> 00:12:02,460

wasn't the PM title. It was the speed at which information became usable. Then there's support and

205

00:12:02,460 --> 00:12:07,660

operations, where the math gets very visible very quickly. Start with a common case. Average handling

206

00:12:07,660 --> 00:12:12,380

time sits at 12 minutes because people search for the right answer, write the response from scratch,

207

00:12:12,380 --> 00:12:17,740

summarize the case, and then move to the next one already behind. After focused upskilling, the agent

208

00:12:17,740 --> 00:12:22,540

uses AI for draft responses, faster retrieval from approved knowledge, and structured case summaries

209

00:12:22,540 --> 00:12:28,380

that reduce repeat searching. Handling time can drop from 12 minutes to seven. That matters on

210

00:12:28,380 --> 00:12:33,100

its own, but the stronger signal is service stability. More cases get resolved inside target,

211

00:12:33,100 --> 00:12:37,100

first-response quality improves because the answer starts from a grounded draft instead of rushed

212

00:12:37,100 --> 00:12:42,220

manual writing, and the team gets breathing room without adding headcount. Across all three cases, the

213

00:12:42,220 --> 00:12:46,860

structure stays the same. You start with a baseline task. You train the behavior inside that task. The

214

00:12:46,860 --> 00:12:51,500

workflow gets shorter. Then the freed capacity moves somewhere useful. That repeatable structure matters

215

00:12:51,500 --> 00:12:55,900

because it keeps the business case honest. You're not claiming magic. You're showing where time went,

216

00:12:55,900 --> 00:13:00,300

what changed, and what the team did with the gain. The external benchmarks in your research back that

217

00:13:00,300 --> 00:13:05,420

pattern without replacing internal proof. Across Microsoft Copilot studies, users report average

218

00:13:05,420 --> 00:13:10,620

monthly time savings, and a large share report getting to a good first draft faster. In measured deployments,

219

00:13:10,620 --> 00:13:15,980

teams also report faster time to insight and better decision speed when AI is tied to a real workflow

220

00:13:15,980 --> 00:13:20,620

instead of floating as a general-use assistant. Those benchmark signals are useful because they show

221

00:13:20,620 --> 00:13:24,700

your internal result isn't isolated, but they should support the case, not carry it. And that's the

222

00:13:24,700 --> 00:13:29,740

discipline leaders need. Don't ask whether AI touched the process. Ask whether the process now moves with

223

00:13:29,740 --> 00:13:36,540

less delay, less rework, and better use of skilled people. In each of these cases, AI didn't remove the

224

00:13:36,540 --> 00:13:41,340

role. It removed the lag between information arriving and action starting. That's the upside, but it

225

00:13:41,340 --> 00:13:46,380

only holds if the system around it is controlled, because once outputs drift, sources get messy,

226

00:13:46,380 --> 00:13:51,580

or people start improvising in high-risk workflows, the apparent gain can vanish under hidden

227

00:13:51,580 --> 00:13:58,060

correction work. The hard line: governance that protects ROI. Now the part a lot of AI content

228

00:13:58,060 --> 00:14:02,300

skips because it sounds less exciting than productivity gains: governance. But if you leave this out, your

229

00:14:02,300 --> 00:14:08,220

ROI model turns soft fast, since bad outputs create hidden review work, weak decisions, and risk nobody

230

00:14:08,220 --> 00:14:14,140

priced in at the start. Begin with the data boundary. AI should work only with approved data sources,

231

00:14:14,140 --> 00:14:19,820

under clear access rules, with inputs people can trust. If the source is stale, messy, or pulled from the

232

00:14:19,820 --> 00:14:24,460

wrong place, the output may look polished while still pushing the team in the wrong direction. That's

233

00:14:24,460 --> 00:14:30,300

expensive, not always in a visible way, but in second checks, rework, delayed approvals, and lost confidence.

234

00:14:30,300 --> 00:14:35,820

So the operating rule needs to stay simple: controlled data in, reviewable output out. Then keep the

235

00:14:35,820 --> 00:14:40,300

human in the loop where accountability actually sits. AI can draft, it can summarize, it can suggest

236

00:14:40,300 --> 00:14:45,980

patterns, but approvals, exceptions, and final decisions still belong to people. That isn't caution

237

00:14:45,980 --> 00:14:49,980

for its own sake. It's how you protect decision quality while still getting the speed benefit,

238

00:14:49,980 --> 00:14:54,380

especially in finance, project risk, customer cases, or anything with regulatory consequences.

239

00:14:54,380 --> 00:14:59,020

Somebody has to own the judgment, not just the prompt. This is where use case tiering helps.

240

00:14:59,020 --> 00:15:04,780

Low-risk work scales first: summaries, draft emails, meeting actions, first-pass commentary. Those are

241

00:15:04,780 --> 00:15:08,860

good places to build habits because the upside is easy to see and the downside is easy to catch.

242

00:15:08,860 --> 00:15:13,740

Then you move into monitored analysis support, where AI helps structure thinking but doesn't close the

243

00:15:13,740 --> 00:15:18,380

loop alone. High-risk automation stays narrow and controlled, because once the workflow carries

244

00:15:18,380 --> 00:15:23,340

financial, legal, or service risk, the cost of a wrong output climbs fast. Without that tiering,

245

00:15:23,340 --> 00:15:27,740

companies blur everything together. They treat a meeting summary and a sensitive decision path as if

246

00:15:27,740 --> 00:15:32,620

they carry the same risk. They don't. And when people can't see the boundary, they either overtrust

247

00:15:32,620 --> 00:15:37,580

the tool or avoid it completely. Both kill value. Standards matter too. Random prompting produces

248

00:15:37,580 --> 00:15:42,540

random work. If every employee invents their own way of asking for summaries, drafts, and analyses,

249

00:15:42,540 --> 00:15:47,740

quality spreads all over the place, and managers end up reviewing style differences instead of business

250

00:15:47,740 --> 00:15:53,020

value. Shared prompt patterns, role guidance, and simple examples reduce that drift, not because prompts

251

00:15:53,020 --> 00:15:57,660

are the strategy, but because repeatable behavior lowers rework. Measurement also can't stop after

252

00:15:57,660 --> 00:16:03,020

rollout. You need a live view of saved time, adoption depth, exception rates, rework, and any drop in

253

00:16:03,020 --> 00:16:07,820

output quality. Otherwise, the early gains can mask later decay. A team may look faster on paper while

254

00:16:07,820 --> 00:16:12,700

correction work piles up somewhere else. That's not ROI. That's cost moved into another part of the

255

00:16:12,700 --> 00:16:17,340

system. And this is the executive point: governance doesn't slow value. It protects value from being

256

00:16:17,340 --> 00:16:22,220

overstated. Leaders don't fund what feels clever. They fund what they can audit, compare, and defend

257

00:16:22,220 --> 00:16:27,740

when results get questioned. So if your AI program can show clean inputs, clear ownership, sensible tiers,

258

00:16:27,740 --> 00:16:31,900

and measured outcomes, it stops looking like experimentation and starts looking like operational

259

00:16:31,900 --> 00:16:36,940

discipline. Once those controls are built into the model, expanding AI use stops looking like a

260

00:16:36,940 --> 00:16:42,540

bet on enthusiasm and starts looking like a managed business choice. Scaling without waste: how to

261

00:16:42,540 --> 00:16:48,380

expand from pilot to operating model. After that, the next mistake is scaling too early. A pilot shows

262

00:16:48,380 --> 00:16:53,340

promise, leadership gets excited, and then licenses spread across the company before anyone has locked

263

00:16:53,340 --> 00:16:57,900

the pattern that actually produced the result. That's where money leaks, not because the tool stopped

264

00:16:57,900 --> 00:17:02,780

working, but because the operating model never formed around it. A better path starts small and

265

00:17:02,780 --> 00:17:07,740

specific. Pick three to five workflows for each target role. Not 10. Not every possible use case.

266

00:17:07,740 --> 00:17:12,540

Just the workflows with repeat volume, visible friction, and clear business relevance. Capture the

267

00:17:12,540 --> 00:17:16,860

baseline before anything changes. Then train people on the task itself, not on a generic tour of

268

00:17:16,860 --> 00:17:21,580

features. A finance analyst doesn't need a broad lesson in AI possibilities first. They need to know

269

00:17:21,580 --> 00:17:26,540

how to shorten commentary drafting, how to clean a recurring spreadsheet faster, and how to structure

270

00:17:26,540 --> 00:17:31,500

a variance review with less back and forth. Then run short pilot cycles with hard success gates. Did

271

00:17:31,500 --> 00:17:36,380

reclaimed hours show up? Did cycle time drop? Did quality stay stable? Did trained users keep using the

272

00:17:36,380 --> 00:17:41,180

pattern after the first week? Those are the signals that matter. If active usage falls away or if

273

00:17:41,180 --> 00:17:46,460

rework climbs, don't expand yet. Fix the workflow, the guidance, or the manager support first. Growth

274

00:17:46,460 --> 00:17:51,500

without that discipline just scales confusion. What helps here is building proof packs that speak the

275

00:17:51,500 --> 00:17:56,380

language of each stakeholder. The CFO wants a cost view of reclaimed labor and avoided waste. The

276

00:17:56,380 --> 00:18:01,660

COO wants movement in cycle time, throughput, and service reliability. Line managers want to know

277

00:18:01,660 --> 00:18:06,860

whether the team can absorb more work, respond faster, or reduce manual admin. So don't present broad

278

00:18:06,860 --> 00:18:11,260

vendor claims when you ask for the next phase. Show role-level data from your own environment, tied

279

00:18:11,260 --> 00:18:15,900

to one workflow at a time. Budget also needs to shift. Too much AI spend still goes into generic

280

00:18:15,900 --> 00:18:20,460

certification and one-off training events that feel organized but don't stick. The stronger model

281

00:18:20,460 --> 00:18:25,020

puts money into workflow-embedded coaching, shared prompt libraries, and manager-led reinforcement,

282

00:18:25,020 --> 00:18:29,660

because those are the mechanisms that turn a useful demo into repeated behavior. Research in your

283

00:18:29,660 --> 00:18:34,620

source set points in that direction too. High-performing organizations spend more on internal program

284

00:18:34,620 --> 00:18:39,500

design and manager support, and less on external certification as the main answer. Expansion should

285

00:18:39,500 --> 00:18:43,980

follow role clusters and use case tiers. If one finance team proves value in reporting and

286

00:18:43,980 --> 00:18:49,180

reconciliation support, move to adjacent finance workflows. If PMs show gains in status reporting and

287

00:18:49,180 --> 00:18:53,980

action tracking, move into related coordination patterns. That kind of scaling holds shape. Blanket

288

00:18:53,980 --> 00:18:59,180

licensing rarely does, because usage spreads faster than capability. And keep one question in front of

289

00:18:59,180 --> 00:19:04,540

every rollout decision: did this change how work moves? If the answer is no, pause, because profit

290

00:19:04,540 --> 00:19:09,260

doesn't come from adding AI access. It comes from changing the path from input to action in a way

291

00:19:09,260 --> 00:19:14,300

the business can measure. So the shift is simple. ROI doesn't come from training volume. It comes from

292

00:19:14,300 --> 00:19:20,060

shorter tasks, faster decisions, and cleaner execution inside the work people already do. Pick one

293

00:19:20,060 --> 00:19:24,700

workflow this week in finance, project management, or support. Measure the manual version against the AI

294

00:19:24,700 --> 00:19:30,460

assisted version after targeted upskilling, and watch where the recovered capacity goes. If this changed

295

00:19:30,460 --> 00:19:36,780

how you think about Microsoft AI, subscribe to the m365.fm podcast, leave a review, connect with me,

296

00:19:36,780 --> 00:19:41,580

Mirko Peters, on LinkedIn, and send me the next workflow bottleneck you want unpacked.

Founder of m365.fm, m365.show and m365con.net

Mirko Peters is a Microsoft 365 expert, content creator, and founder of m365.fm, a platform dedicated to sharing practical insights on modern workplace technologies. His work focuses on Microsoft 365 governance, security, collaboration, and real-world implementation strategies.

Through his podcast and written content, Mirko provides hands-on guidance for IT professionals, architects, and business leaders navigating the complexities of Microsoft 365. He is known for translating complex topics into clear, actionable advice, often highlighting common mistakes and overlooked risks in real-world environments.

With a strong emphasis on community contribution and knowledge sharing, Mirko is actively building a platform that connects experts, shares experiences, and helps organizations get the most out of their Microsoft 365 investments.