How to Fix DLP False Positives: Practical Strategies for Microsoft Environments

DLP (Data Loss Prevention) is supposed to protect your sensitive information, not slow down everyone with alerts about everyday business. Yet, in Microsoft environments, it’s all too common for DLP to flood teams with false positives—flagging safe actions as risky and turning security from a silent guardian to everyone’s least favorite IT roadblock.

This guide gets straight to the heart of why DLP false positives happen, why they’re a big deal, and how to dial down the noise without leaving your organization open to real threats. You’ll get a hands-on blueprint to uncover root causes, rework outdated policies, tune your DLP for precision, and embrace smarter automation. When you approach DLP tuning systematically and with the right tools, you can boost both your security posture and your overall workflow efficiency—while rebuilding trust with frustrated users along the way.

Understanding False Positives in DLP and Their Consequences

Let’s get real about DLP false positives: they’re not just a technical inconvenience, they’re a business headache. When your DLP system misidentifies harmless activity as a possible data leak, productivity takes a hit, end-users lose patience, and your security team spends more time firefighting false alarms than stopping real threats. These mistakes aren’t rare flukes—they’re woven into the way many data loss prevention systems function, especially when policies are imprecise or the environment keeps changing.

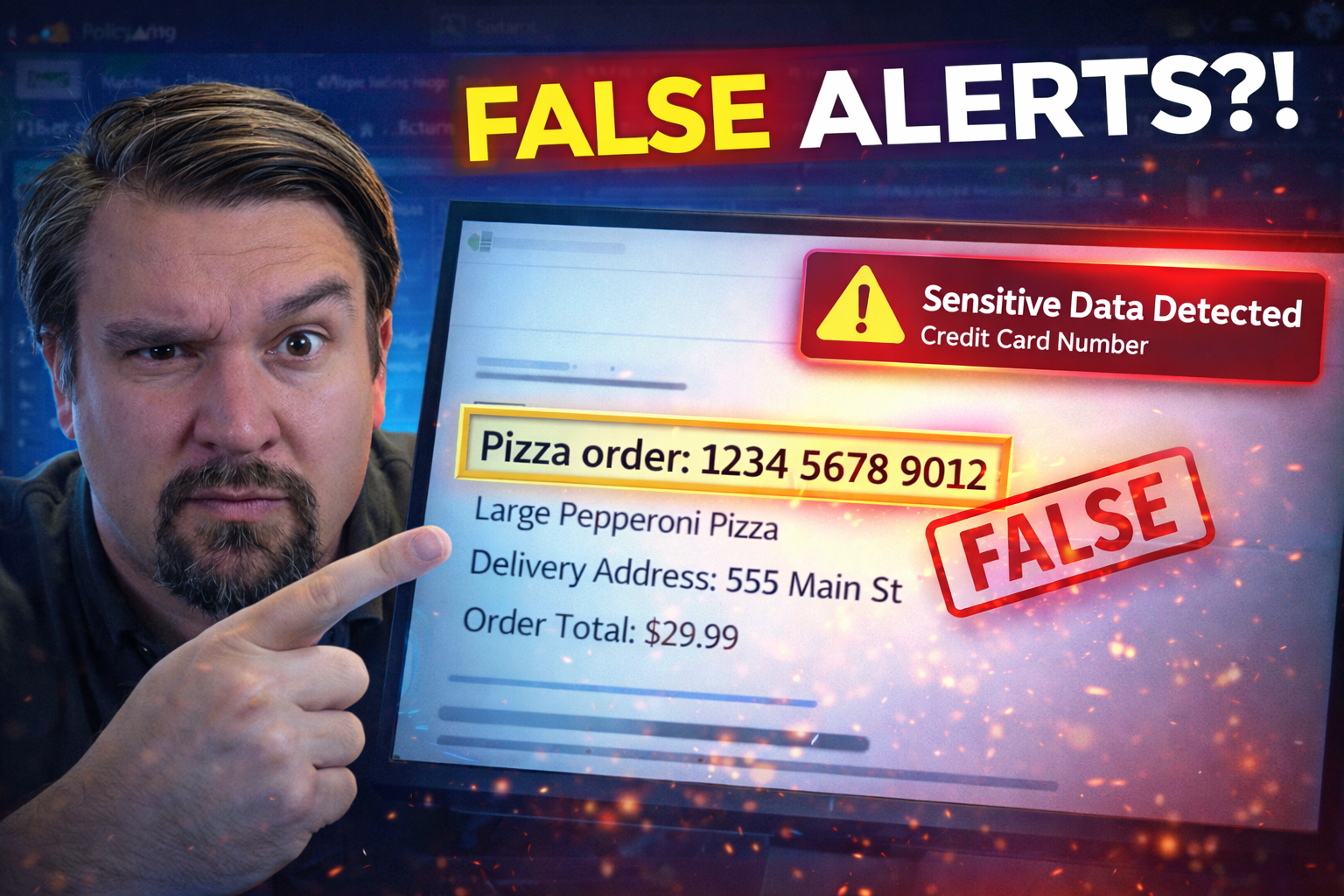

False positives can show up in all kinds of ways. Maybe it’s a DLP policy flagging an HR file as confidential when it’s actually a public holiday schedule. Or perhaps your finance team gets blocked from sharing a legitimate budget doc because the system mistakes it for sensitive payment info. These patterns are easy to ignore at first, but as volumes climb, negative effects build fast: operational slowdowns, alert fatigue, and genuine threats slipping under the radar.

Understanding the scope and impact of these errors is key for IT leaders and security analysts alike. It’s not enough to just “tweak” a setting when people complain. You need to look at false positives as a strategic challenge—a signal that something in your DLP setup or business process needs a closer look. By examining why excessive false positives matter, you’ll see how they directly threaten the credibility, efficiency, and effectiveness of your overall security program. Next, we’ll break down the real-world damage and practical risks that arise when your DLP goes haywire with false alarms.

Why Excessive DLP False Positives Threaten Security Operations

- Alert Fatigue for Security Teams: Security analysts can quickly get overwhelmed by a nonstop stream of DLP alerts. When false positives outnumber actual threats, teams become desensitized, and real risks get skimmed over or missed entirely.

- Resource Drain and Productivity Loss: Investigating every DLP alert eats up time and resources that could be spent on higher-value security work. Employees dealing with repeated blocks also lose productivity as they wait for IT approval.

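- Missed Genuine Threats: With so much noise, true data leaks can slip by unnoticed. The more false positives analysts have to wade through, the smaller the share of alerts that point to a real incident, and the easier it is for a real one to be dismissed along with the noise. That puts genuinely sensitive information at risk.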

- Erosion of Employee Trust: Users lose faith in security tools that block their legitimate work. Over time, this damages your security culture and makes staff less likely to support or report real issues.

- Compliance and Reputation Risks: A system flooded with false positives might cause compliance failures if real leaks are missed, leading to possible fines or brand damage.

Uncovering Root Causes: Why DLP Systems Generate False Positives

If you’ve ever wondered why your DLP solution seems to catch more good guys than bad guys, you’re not alone. The reality is, false positives usually trace back to a handful of technical or strategic mistakes—some hiding in your data, others in the way you write your policies. Errors might stem from automated pattern-matching gone wild, poorly classified data, or DLP policies so broad they’d flag a grocery list as confidential.

Legacy systems don’t help matters. The older your DLP, the less likely it is to understand business context or adapt to the nuances of cloud storage, modern collaboration tools, and remote workflows. Even with the latest systems, rushing policy deployment or missing steps in data discovery means you’re setting yourself up for trouble before you start.

To get DLP right, you have to look under the hood—start by understanding where these false positive triggers come from. In the sections ahead, we’ll drill into problems like insufficient data classification and blanket policy logic that treats every document or activity the same. Pinpointing these sources is the foundation for cutting DLP noise and building more intelligent protection down the road.

Poor Data Classification and Overly Broad DLP Policies Explained

- Inadequate Data Classification: If your DLP doesn’t know what counts as sensitive and what doesn’t, it guesses. That leads to alerts for routine files—think meeting notes tagged as confidential or regular HR emails flagged for “PII.”

- Generic Pattern and Keyword Matching: Policies relying on simple matches, like credit card or social security number patterns, often mistake harmless numbers (invoice IDs, phone numbers) for restricted data. This triggers alerts for non-sensitive activity.

- Overly Broad Policy Scopes: When DLP rules are too general, covering all file types or all communication channels, they flood security teams with irrelevant hits. For instance, a policy blocking any document with a “client name” reference ends up snaring everyday collaboration.

- Lack of Context Awareness: DLP that doesn’t consider who is sending, receiving, or modifying the data misses the difference between a routine workflow and actual risky behavior. A document shared with a colleague can look just like an external leak in the absence of business context.

- Undefined Sensitive Data Types: Without precise definitions, your DLP might treat every spreadsheet or PDF with numbers as protected. This ballooning of “what counts” creates a noise problem that only gets worse as your data estate grows.
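
To make the pattern-matching problem concrete, here is a minimal Python sketch of one common way to tighten a credit card rule: require a checksum match plus a supporting keyword nearby, instead of trusting a bare digit pattern. The keyword list, the 40-character window, and the function names are assumptions made up for illustration, not settings from any particular DLP product.

```python
import re

# Hypothetical evidence keywords; a real policy would maintain its own list.
KEYWORDS = ("card", "visa", "mastercard", "amex", "cvv", "expiry")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_card_numbers(text: str, window: int = 40) -> list[str]:
    """Return candidates that pass Luhn AND have a keyword within `window` chars."""
    hits = []
    lowered = text.lower()
    for m in CANDIDATE.finditer(text):
        digits = re.sub(r"[ -]", "", m.group())
        if not luhn_valid(digits):
            continue  # looks like a card number but fails the checksum
        nearby = lowered[max(0, m.start() - window): m.end() + window]
        if any(k in nearby for k in KEYWORDS):
            hits.append(digits)
    return hits
```

With this kind of rule, a 16-digit invoice ID that fails the checksum, or a checksum-valid number with no card-related wording around it, no longer raises an alert. The trade-off is that a card number pasted with no surrounding text would be missed, so the keyword requirement is worth testing against your own data before you rely on it.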

If you’re stuck here, consider reviewing your classification schemes, sensitivity labels, and policy granularity. Microsoft Power Platform users in particular can benefit from proactive testing and tight connector governance—like the best practices covered in this guide. For a deeper dive on balancing flexibility, environment strategy, and real-life risk, you might also check out this podcast episode, which explains why the “default environment” is often the hidden danger.

Legacy DLP Systems Versus Modern, Context-Aware Solutions

If you’re still running a legacy DLP system, you probably see the same noisy alerts cycle repeating itself. These older platforms were built in an era before cloud, hybrid work, and bring-your-own-app were even a twinkle in IT’s eye. They take a rigid, “one size fits all” approach—enforcing static policies without any real sense of business context or user behavior.

Legacy DLP gets tripped up by simple stuff. It can’t tell the difference between a payroll file being emailed for review and actual data exfiltration. This leads to more false positives and a lot of wasted effort. There’s little room for nuance: the same broad rules apply equally to every user, every channel, and every file, no matter the intent or risk level.

In contrast, modern DLP solutions—especially those integrated with Microsoft 365 and cloud-native platforms—bring in real intelligence. They analyze who is sending data, what information is being shared, where it’s going, and how it’s being accessed. This context-awareness slashes alert noise, hones detection accuracy, and builds trust within the business.

Want a sense of what’s possible with adaptive, cloud-aware DLP in Microsoft environments? Check out this podcast episode for step-by-step setup and a look at productivity gains through automation, or tune in to this discussion for advanced governance tricks that avoid the classic pitfalls of legacy DLP systems.

A Two-Phase Blueprint for Strategic DLP Tuning

Ready to get your DLP system out of firefighting mode and into proactive protection? The key is to follow a simple, two-phase approach: first assess, then adapt. Think of phase one as planning and discovery, where you hunt down what data is most sensitive, review policies with a fine-tooth comb, and get buy-in from business owners, legal, and IT stakeholders. It’s not about guessing; it’s about surfacing where things break, what’s causing friction, and how alerts impact daily business.

Once you’ve got a clear map, phase two is all about adjusting your policies and incident response. This doesn’t mean swinging from “block everything” to “allow it all.” Instead, you shift to smarter enforcement: rely more on user education, flexible notification, and even conditional approvals for borderline cases. Adjust your detection rules to consider context—like sharing within teams versus to external parties—and gradually raise the bar for what counts as truly risky.

This structured approach allows you to shrink alert volume, reduce user frustration, and still protect what matters most. For Power Platform developers, adopting regular testing and connector classification strategies—like those shared in this Microsoft-focused guide—can smooth migrations and avoid hidden roadblocks. The whole point is to move from reactive DLP to intelligent, business-aligned prevention that stands up to modern threats.
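
The graduated enforcement described in phase two can be sketched in a few lines of Python. This is only an illustration of the idea, not how any specific DLP engine evaluates rules: the `ShareEvent` fields, the `contoso.com` internal domain, and the threshold of 10 matches are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical internal domain, used only for illustration.
INTERNAL_DOMAINS = {"contoso.com"}


@dataclass
class ShareEvent:
    sender: str
    recipients: list[str]
    match_count: int        # number of sensitive-data matches found
    high_confidence: bool   # whether the matches had supporting evidence


def decide_action(event: ShareEvent) -> str:
    """Map a share event to 'allow', 'notify', 'justify', or 'block'."""
    if event.match_count == 0:
        return "allow"
    external = any(
        r.rsplit("@", 1)[-1].lower() not in INTERNAL_DOMAINS
        for r in event.recipients
    )
    if not external:
        # Internal sharing: educate the user rather than block the work.
        return "notify"
    if event.high_confidence and event.match_count >= 10:
        # Bulk, well-evidenced data leaving the organization.
        return "block"
    # Borderline external case: ask for a business justification.
    return "justify"
```

The point of the design is that blocking is reserved for the narrow case where the data is leaving the organization, the match is well supported, and the volume is high. Everything else gets a lighter-touch response that keeps people working.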

Maintaining Long-Term Success: Keeping False Positives Low

Fixing DLP isn’t a “set it and forget it” move. Organizations that keep false positives low over time treat DLP policy as a living thing—one that needs ongoing attention, tuning, and feedback from both users and security teams. That starts with regular review cycles: pulling alert reports, tracking false positive rates, and making incremental changes instead of sweeping, risky overhauls.
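
As a rough sketch of what a review cycle might compute, the Python below summarizes triaged alerts per policy. It assumes you can export alerts with a reviewer verdict and open/resolve timestamps; the `Alert` fields and the verdict strings are hypothetical, so map them to whatever your alert export actually contains.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Alert:
    policy: str
    verdict: str        # "true_positive" or "false_positive", set by the reviewer
    opened: datetime
    resolved: datetime


def summarize(alerts: list[Alert]) -> dict[str, dict[str, float]]:
    """Return per-policy alert count, precision, false positive share, and mean hours to resolve."""
    grouped = defaultdict(list)
    for a in alerts:
        grouped[a.policy].append(a)

    report = {}
    for policy, items in grouped.items():
        tp = sum(1 for a in items if a.verdict == "true_positive")
        hours = [(a.resolved - a.opened).total_seconds() / 3600 for a in items]
        report[policy] = {
            "alerts": len(items),
            "precision": tp / len(items),
            # Share of this policy's alerts that reviewers marked as false positives.
            "false_positive_share": 1 - tp / len(items),
            "mean_hours_to_resolve": sum(hours) / len(hours),
        }
    return report
```

Comparing these numbers between review cycles shows which policies deserve tuning first, and whether a change actually helped.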

User feedback is your secret weapon. When employees have a clear way to report false blocks or questionable alerts, you gain a real-world understanding of where your DLP policies go off-base. Incorporating this feedback loop helps adjust sensitivity so that business-critical work isn’t caught in the crossfire. It also rebuilds trust; when users see their pain points addressed, security stops being “the bad guy.”

The most effective DLP programs eventually move from gatekeepers to enablers. That means going beyond blocking bad behavior—to actively supporting secure, compliant workflows that keep business humming. When DLP adapts to organizational changes and regulatory shifts, you end up with a security posture that’s more collaborative, less combative, and truly sustainable.

For insight into how policies, compliance, and real-world document chaos are managed at scale, the strategies in this Microsoft Purview and SharePoint podcast showcase how HR, legal, and IT teams can work together to avoid regulatory headaches and preserve sensitive info while keeping DLP lean and effective.

Overcoming False Positives: Using Machine Learning and Automation

- Machine Learning Models for Contextual Analysis: Modern DLP uses AI to spot patterns in data access, sharing, and user behavior. These models learn what “normal” looks like—so they can tell when a file move or email is routine versus a potential leak, bringing down the false positive rate.

- Automated, Behavior-Driven Response: Automation tools triage and respond to alerts based on severity and risk. For example, they can temporarily pause a suspected data transfer and alert a human reviewer when there’s uncertainty, rather than auto-blocking everything and causing work stoppages.

- User and Entity Behavior Analytics (UEBA): By analyzing distinct user patterns, such as who accesses what and when, DLP systems can apply stricter controls only when someone acts out of character. This approach makes day-to-day operations smoother while staying sharp for real threats.

- Adapting to Cloud and Hybrid Workflows: Effective DLP must keep up with the cloud: data sitting in Microsoft 365, being shared in Teams, or accessed through Power Platform connectors. ML-driven solutions adapt policies automatically as workflows change, closing security gaps without drowning you in new alerts.

- Best Practices for Microsoft 365 Environments: Success comes from thoughtful governance—like strict connector policy boundaries, role-based access, and connector-blocking recommended in this advanced Microsoft Purview governance resource. For practical environment and connector tips, tune into this episode for actionable moves that blend automation with hands-on oversight.
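
The "acts out of character" idea behind behavior analytics can be illustrated with a deliberately simple baseline check in Python. Real UEBA products use far richer models; this sketch only compares today's count of shared files against the same user's recent history. The minimum of 5 days of history, the threshold of 3 standard deviations, and the floor of 1.0 on the deviation are assumptions picked for the example.

```python
from statistics import mean, stdev


def is_unusual(history: list[int], today: int, threshold: float = 3.0) -> bool:
    """Flag today's count if it sits far above the user's own baseline."""
    if len(history) < 5:
        return False  # not enough data to form a baseline
    mu = mean(history)
    # Floor the deviation at 1.0 so very steady users are not flagged
    # for tiny increases (an assumption made for this sketch).
    sigma = max(stdev(history), 1.0)
    return (today - mu) / sigma > threshold
```

A user who normally shares about five files a day would not be flagged for sharing six, but would be for sharing forty. Stricter controls can then apply only to the flagged activity.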

Frequently Asked Questions About Fixing DLP False Positives

- What causes most DLP false positives? The leading causes are vague data classification, overly broad policy rules, and ignoring business context. Bad pattern-matching and legacy tech make the problem worse.

- How do I fix false positives in Microsoft-based DLP? Start by reviewing and refining your classification labels and policy definitions. Test rules against real data in a non-blocking mode before you enforce them, involve business owners in the review, and phase in changes instead of flipping one big switch.

- Can false positives be tracked or benchmarked? Yes. Leading teams set KPIs like false positive rate, precision, and mean time to resolve. Track these over time to show improvements or spot backsliding.

- What’s an acceptable false positive rate? There’s no universal answer. Acceptable levels vary by sector, data type, and business needs, but a double-digit percentage reduction from your current baseline is a realistic first goal. Frequent reviews help keep the rate within whatever threshold you set.

- Where can I learn more or get step-by-step setup help? Microsoft-focused guides like this episode offer practical instructions for setup, tuning, and incident response best practices across Microsoft 365, Power Platform, and cloud environments.