In this episode of M365.fm

, the discussion centers on why traditional manual sensitivity labeling in Microsoft Purview is rapidly becoming obsolete in modern enterprise environments.

The core argument is that organizations now generate far too much data, too quickly, for employees to reliably classify information by hand. Manual tagging depends on users consistently stopping their work to apply the correct sensitivity label — something that rarely happens in practice. According to the episode, many organizations see labeling adoption rates around 30%, leaving large amounts of sensitive intellectual property effectively invisible to governance, compliance, and Data Loss Prevention systems.

The episode explains that older governance models were designed for a slower workplace with fewer collaboration tools and lower data velocity. Today’s environments — driven by Microsoft 365, Teams, SharePoint, OneDrive, Slack, and Copilot — overwhelm users with constant communication and AI-generated content. In this reality, governance systems based on human behavior inevitably fail at scale.

A major focus is the limitation of regex and keyword-based classification systems. While pattern matching can detect things like credit card numbers or IDs, it cannot understand business context or semantic meaning. The podcast argues that modern governance requires AI systems capable of understanding intent, relationships, and document context rather than simple pattern detection.

The episode then explores the future of Microsoft Purview: autonomous AI-driven classification. Instead of relying on employees to manually select labels, Large Language Models (LLMs) can analyze content in real time and automatically apply appropriate sensitivity labels behind the scenes. This approach reduces human error, improves consistency, and allows governance to become “invisible infrastructure” rather than a productivity interruption.

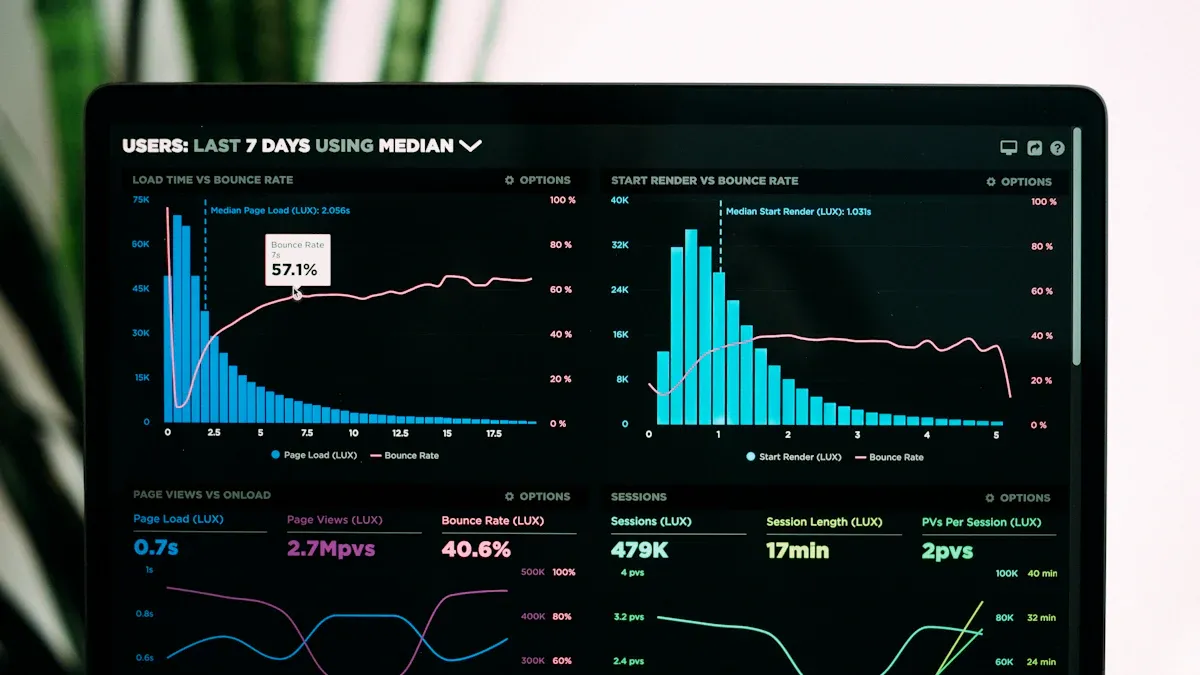

Manual tagging is obsolete as real-time ai transforms data analysis and security. You face massive data growth, with unstructured data rising 55-65% each year. Real-time ai in microsoft Purview automates classification, intercepts files, and applies sensitivity labels instantly. You no longer depend on manual tagging, which only covers 30% of intellectual property and leaves security gaps.

When you create or upload a file, real-time ai performs high-speed analysis, mapping content to security labels without your intervention.

| Impact | Benefit |

|---|---|

| Real-time ai applies classification by default | You save time and reduce manual analysis |

| Purview’s ai scales data protection | Security oversight becomes smarter and faster |

Key Takeaways

- Real-time AI in Microsoft Purview automates data classification, saving you time and reducing manual work.

- Manual tagging is outdated and only covers a small portion of data, leaving security gaps that can be exploited.

- AI-driven governance helps you manage large amounts of unstructured data effectively, ensuring sensitive information is protected.

- With real-time classification, your data receives security labels instantly, reducing the risk of leaks and compliance failures.

- AI improves classification accuracy by up to 40%, making it easier to identify and protect sensitive information.

- Automated compliance assessments in Purview help you stay aligned with regulations, reducing the risk of penalties.

- The Guardian Agent feature ensures your data is protected immediately, closing security gaps that traditional systems leave open.

- AI-driven alert triage prioritizes critical threats, allowing you to focus on the most important data security issues.

The Collapse of Manual Tagging

Productivity Friction

Manual tagging slows you down. In a modern workplace, you handle more files, messages, and documents than ever before. You cannot afford to stop and classify every piece of data. This creates friction that hurts your productivity and your organization’s bottom line.

User Overload

You face constant notifications, meetings, and collaboration requests. Most employees do not have time to act as data librarians. When you must tag every document, you lose focus on your real work.

- Knowledge workers spend about 3.6 hours each day searching for information.

- Manual tagging and maintenance waste valuable time.

- Large organizations lose millions in productivity because of these slow processes.

You want to move fast, but manual governance systems force you to choose between speed and proper data risk management.

Labeling Adoption Rates

Manual tagging depends on you and your coworkers to label every file correctly. In reality, most people skip this step. Adoption rates for manual labeling often stay around thirty percent. This means most data remains unclassified and invisible to security controls. You cannot protect what you cannot see. As a result, your organization faces increased data risk and weak governance.

Security Blind Spots

Manual tagging creates dangerous gaps in your security posture. When you miss a label, you leave sensitive data exposed. Attackers look for these gaps, and so do auditors.

Unlabeled Data Risks

Unlabeled data is a major data risk. You may not know who owns a file or where it lives. Public-facing resources without proper tags can go unnoticed until someone exploits them. Security teams cannot enforce access policies without reliable metadata. Untagged resources become entry points for attackers.

| Security Blind Spot | Description |

|---|---|

| Inability to identify resource ownership | Resources without tags lead to a lack of clarity on who owns them, creating management challenges. |

| Compliance gaps | Missing tags can result in non-compliance with organizational policies, exposing vulnerabilities. |

| Untracked resources | Resources that are not tagged may become unnoticed entry points for attackers, increasing risk. |

Compliance Gaps

You must meet strict compliance standards. Manual tagging makes this difficult. Missing labels lead to compliance failures and audit findings. You cannot prove proper governance if you cannot show how you manage sensitive data. This exposes your organization to regulatory penalties and more data risk.

Manual tagging is not just a productivity issue. It is a core governance and security problem that puts your data at risk.

The Limits of Regex and Keyword Matching

Pattern Recognition vs. Semantic Understanding

You may rely on regex and keyword matching to classify sensitive information. These tools scan for patterns, such as credit card numbers or social security identifiers. They operate quickly, but they lack the ability to understand the meaning behind your data. Regex engines only see the surface. They cannot tell if a document contains confidential business plans or public training materials.

Regex and keyword matching focus on patterns, not context. You need more than pattern recognition to protect your data.

False Positives and Negatives

When you use regex and keyword matching, you face two main risks: false positives and false negatives. A false positive occurs when the system flags harmless content as sensitive. A false negative happens when the system misses actual sensitive information.

- Regex patterns require careful tuning. If you make them too broad, you classify too many files. If you make them too narrow, you miss important data.

- Sensitive information types (SITs) need precise thresholds. Without them, you create excessive classification traffic.

- Regex matching works on extracted text, not the original document. This can lead to mismatches and missed context.

You can use tools like the Test-TextExtraction cmdlet to see how extracted text streams look. Matching happens on these streams, not on the original file. This process increases the chance of errors.

Unstructured Data Challenges

Unstructured data grows rapidly in your organization. You store emails, chat messages, documents, and images across platforms. Traditional methods struggle to classify this data because it lacks a standardized format.

| Challenge Type | Description |

|---|---|

| Volume | Massive amounts of unstructured data can overwhelm your resources. |

| Lack of Standardized Format | Without consistent structure, you cannot apply uniform security measures. |

| Identification and Categorization | Identifying and classifying sensitive unstructured data requires significant manual effort. |

Intellectual Property Risks

Your intellectual property often lives in unstructured formats. You may have confidential designs, business strategies, or research notes scattered across emails and shared drives. Regex and keyword matching cannot reliably find or protect this information. They miss context and intent, leaving your most valuable assets exposed.

Unstructured data hides sensitive information. Regex and keyword matching cannot keep up with the complexity and volume.

You need smarter tools that understand the meaning and relationships within your data. Real-time AI in Microsoft Purview offers semantic analysis, helping you protect intellectual property and sensitive information more effectively.

The Rise of AI-Driven Governance

You now live in a world where ai transforms how you manage data. The old way of relying on users to tag files is gone. Today, ai-driven governance gives you a smarter, faster, and more reliable way to protect your information. Microsoft purview uses advanced inference engines to automate classification and security, so you do not have to worry about missing a step.

Autonomous Classification

Large Language Models

Large language models power the new era of data inference. These models scan and interpret huge amounts of data in seconds. You benefit from their ability to identify, classify, and protect information at scale. Microsoft purview uses inference engines trained on diverse business data, which means you get accurate results even with complex or unstructured files. These classifiers run on microsoft’s ai supercomputer, giving you speed and scale that manual processes cannot match.

- ai can automatically scan and interpret large datasets to find sensitive or regulated information.

- It reduces your effort in data classification by up to 80%.

- ai improves classification accuracy by 25-40% compared to manual tagging.

Semantic Context

You need more than pattern matching. Semantic inference lets ai understand the meaning and context of your data. Microsoft purview uses inference to discover dark data and categorize it based on real context, not just metadata. This dynamic approach means your classifications stay up to date as your data changes. You get continuous compliance and protection for your sensitive information.

- ai uses natural language processing and computer vision to scan, classify, and tag data automatically.

- This is crucial for managing large amounts of structured and unstructured data across many systems.

AI in Microsoft Purview

Integration with Copilot and AI Tools

Microsoft purview integrates with copilot and other ai tools to bring inference directly into your workflow. You see automated classification and security controls applied the moment you create or share a file. This integration transforms governance from a slow, manual process into an active, system-driven solution. You no longer need to worry about missing labels or delayed protection.

| Feature | Impact on Data Governance |

|---|---|

| Automated Classification | Enhances compliance and reduces administrative burdens by automatically tagging sensitive information. |

| Intelligent Data Discovery | Improves data visibility and understanding, enabling organizations to leverage data more effectively. |

| Proactive Risk Detection | Identifies potential compliance issues and anomalies, allowing for timely intervention and risk management. |

Automated Compliance Assessments

You must keep up with changing regulations. Microsoft purview uses inference to automate compliance assessments. The system adapts policies and controls as your business and regulatory needs evolve. You get enterprise-grade security across every layer of the ai stack, with confidence that your data stays protected.

- Adaptive policy orchestration aligns controls with business and regulatory requirements.

- Proactive risk detection helps you manage threats before they become problems.

By embedding ai into data governance, you move from reactive compliance to proactive protection. Microsoft purview empowers you to manage data, security, and governance with speed and intelligence.

Real-Time AI in Purview

Instant Data Classification

You experience a new level of speed and accuracy with real-time classification in Microsoft Purview. When you create or upload a file, AI scans your data instantly. You do not wait for manual review or delayed tagging. Microsoft Purview uses advanced models to analyze content and apply security labels right away. This process protects your data before anyone else can access it.

Time to First Token (TTFT)

Time to First Token measures how quickly AI starts analyzing your data. Microsoft Purview delivers results in milliseconds. You see protection applied almost as soon as you save or share a file. This rapid response reduces the risk of leaks and mistakes.

- Tuned models achieve over 95% precision for Tier 0 data.

- Recall stays above 90%, so you catch most sensitive files.

- Companies aim for a false-negative rate of less than 20% and a false-positive rate below 10%.

- Traditional workflows take about 6 minutes per file for manual annotation.

- Active-learning pipelines in Microsoft Purview cut this time dramatically.

You gain confidence that your data receives the right label quickly. You do not rely on slow manual processes.

Guardian Agent Concept

Microsoft Purview introduces the Guardian Agent. This agent acts as a real-time proxy for governance. It checks your data at the edge, before backend systems finish syncing. You get instant policy enforcement. The Guardian Agent applies rules and labels right away, so your files stay protected from the moment you create them.

Tip: Guardian Agents help you avoid gaps in security. They make sure your data never sits unprotected while waiting for backend updates.

Closing the Latency Gap

You face a challenge with traditional labeling systems. They often work asynchronously. This means your data can sit unprotected for minutes or even hours. Microsoft Purview solves this problem with real-time classification. You see security applied instantly, not after a delay.

Asynchronous vs. Real-Time Labeling

Asynchronous labeling creates a lag between when you request a label and when it actually gets applied. Real-time classification in Microsoft Purview eliminates this lag. You do not drop a file into a mailbox and hope it gets labeled soon. AI acts immediately, so your data stays safe.

| Labeling Type | Speed | Risk of Exposure | User Experience |

|---|---|---|---|

| Asynchronous | Minutes to hours | High | Uncertain, delayed |

| Real-Time | Milliseconds | Low | Instant, reliable |

You benefit from instant feedback and protection. Microsoft Purview keeps your data secure without waiting.

Exposure Windows

When you send a request to apply a sensitivity label, you aren't getting an instant confirmation that the file is protected. You are essentially dropping a letter in a mailbox and hoping it gets delivered soon. In real-world enterprise environments, that propagation can take anywhere from a few minutes to a full 24 hours. This creates what I call the vulnerability window. It is a period where sensitive data exists in your environment, but it is effectively naked. It has no label, no encryption, and no access restrictions.

Real-time classification in Microsoft Purview closes this exposure window. You do not leave your data unprotected. AI applies labels and policies right away. You reduce the risk of leaks and compliance failures. Microsoft Purview gives you peace of mind that your files stay secure from the start.

Note: Real-time classification protects your data at the speed of creation. You do not wait for security to catch up.

Data Security Investigations with Purview

When you manage data security investigations, you need tools that help you act fast and make smart decisions. Microsoft Purview gives you advanced AI-driven features that transform how you handle investigations. You can now spot sensitive data risks, respond to incidents, and prevent exfiltration with greater accuracy.

AI-Driven Alert Triage

You face hundreds of alerts every day. Not all of them matter. AI-driven alert triage in Purview helps you focus on what is important during investigations. The system uses a managed alert queue to identify and prioritize the highest risk activities. You do not waste time on low-priority notifications. Instead, you can direct your investigation efforts toward the most critical threats to sensitive data.

| Feature | Benefit |

|---|---|

| Managed Alert Queue | Identifies and prioritizes the highest risk activities, improving focus on critical alerts. |

| Content Analysis | Analyzes activity content and intent based on organizational parameters. |

| Time Reduction | Reduces time required for triaging alerts, enhancing overall efficiency. |

| Prioritization | Helps sift through lower risk alerts to focus on the most important ones. |

| Comprehensive Explanations | Provides clear reasoning for alert categorization, aiding in understanding and decision-making. |

AI-driven triage integrates insights from data classification and user activity. This approach reduces false alerts and improves your ability to contain data leaks. You also get clear explanations for why an alert matters, which helps you make better investigation decisions. When you investigate insider threats or exfiltration attempts, Purview correlates alerts with incidents, so you can distinguish between real risks and noise.

Prioritizing Risks

You need to know which investigations require immediate action. Purview’s alert triage helps you prioritize risks by analyzing the context of each event. You see which users accessed sensitive information, how they interacted with it, and whether exfiltration occurred. This context lets you focus your investigation on the most urgent data security posture issues. You can stop data leaks before they escalate.

- Integrates data classification and user activity for better investigations.

- Reduces false positives, so you spend less time on unnecessary investigation steps.

- Correlates insider risk alerts with incidents for more accurate investigation outcomes.

Unified Data Security

You want a single view of your data security posture. Purview brings together Data Loss Prevention, Insider Risk Management, and data security posture management. This unified approach streamlines your investigations and reduces the risk of missing sensitive data events.

| Feature | Benefit |

|---|---|

| Data Loss Prevention (DLP) | Helps prevent data breaches by monitoring and controlling data sharing and usage. |

| Insider Risk Management (IRM) | Identifies and mitigates risks posed by insider threats, enhancing overall security posture. |

| Data Security Posture Management (DSPM) | Provides a comprehensive view of data security, allowing for proactive risk management. |

You benefit from automated workflows that guide you through each investigation step. When you detect a data leak or exfiltration, Purview’s workflows help you assess the impact, contain the incident, and document your actions. You can see how sensitive data moved, who accessed it, and what policies applied. This visibility improves your data security posture and supports compliance.

Automated Workflows

Automated workflows in Purview make your investigations faster and more reliable. You do not need to switch between tools or guess what to do next. The system integrates data classification, access controls, and user activity into your investigation process. This integration helps you reduce false alerts and enhances your ability to contain sensitive data incidents.

- Reduction in risky events over time shows the effectiveness of unified data security.

- You can assess the impact of incidents quickly with integrated insights.

- Automated workflows ensure you follow best practices during every investigation.

Tip: Use Purview’s unified data security features to simplify your investigations and strengthen your data security posture.

AI Use Cases in Modern Workplaces

Financial Services

You work in an industry where regulations change quickly and the stakes are high. Financial services organizations must protect customer data, monitor transactions, and meet strict compliance standards. AI helps you manage these challenges with speed and accuracy.

Regulatory Compliance

AI transforms how you handle compliance. You can automate regulatory reporting, reduce manual errors, and keep up with new rules. For example, JPMorgan Chase uses an AI tool called COIN to automate legal and compliance checks. This tool saves 360,000 hours of manual work each year and improves accuracy by 30%. You can also use AI to monitor transactions in real time, detect fraud, and protect customer data.

| Use Case | Description |

|---|---|

| Real-time transaction monitoring | AI analyzes financial transactions to detect and prevent fraud in real time. |

| Automated regulatory reporting | AI automates the generation of compliance documentation, reducing manual effort and errors. |

| Customer data protection | AI enhances privacy management by safeguarding sensitive customer information. |

| Audit trail maintenance | AI maintains comprehensive records of transactions and alerts for suspicious activities. |

You see how AI-driven governance in Microsoft Purview supports your compliance goals and keeps your organization secure.

Healthcare

You handle sensitive patient data every day. Protecting this information is your top priority. AI helps you identify risks, anonymize records, and maintain compliance with healthcare regulations.

Patient Data Protection

You can use AI to scan millions of records for security risks. IBM Watson for Healthcare Compliance, for example, performs over one million compliance checks daily. This helps you meet HIPAA requirements and avoid costly violations. At Mayo Clinic, AI anonymizes patient data by transforming 18 HIPAA identifiers. This process protects privacy while keeping clinical data useful for research.

- AI helps you identify and manage potential data security risks.

- You can anonymize patient records without losing important information.

- Strong data governance with Microsoft Purview ensures you use AI responsibly and meet compliance standards.

Note: Responsible AI use in healthcare improves patient trust and supports better outcomes.

Collaboration Platforms

You use platforms like Teams, SharePoint, and Slack to share information and work with others. These tools generate large amounts of data every day. AI-driven governance helps you manage this data and keep it secure.

Teams, SharePoint, Slack

AI features in Microsoft Teams and Slack boost your productivity. You can see a 15-20% increase in efficiency when you use AI-powered tools. These platforms help you organize conversations, manage workflows, and protect sensitive information. Most businesses plan to add AI-powered communication tools soon. The demand for chatbots and virtual assistants is growing fast.

- AI-integrated platforms improve communication and workflow management.

- Microsoft Purview applies real-time data classification and compliance controls across Teams, SharePoint, and Slack.

- You keep your data secure while working faster and smarter.

Tip: Use AI-driven governance in Microsoft Purview to protect your data and support compliance across all your collaboration tools.

Challenges and Considerations

Licensing and Costs

You need to understand how licensing affects your use of microsoft purview. Advanced features for real-time AI and data classification often require higher licensing tiers. If you mix licensing levels across your organization, you may see uneven coverage in investigations. This can lead to budget surprises when you need emergency upgrades. Hidden costs may appear if you assume all features are available in a unified interface. Clear licensing alignment helps you avoid risks before you start a data investigation.

- Licensing tiers determine which governance features you can use.

- Mixed tiers may cause gaps in data coverage and increase costs.

- Emergency upgrades can stretch your budget.

- Workflow redesigns may extend case timelines if features are missing.

- Consistent licensing ensures defensible governance and complete data collection.

Tip: Review your licensing model before deploying microsoft purview to avoid unexpected costs and coverage gaps.

Purview Licensing Models

You can choose from several purview licensing models. Each model offers different levels of governance and data protection. Make sure your licensing matches your needs for real-time AI and compliance. If you plan to expand your data governance, check that your model supports advanced features.

Implementation and Change Management

You face several challenges when you implement AI-driven governance. Migrating to microsoft purview requires careful planning and training. You must address privacy concerns, integrate with legacy systems, and manage resistance to change.

| Challenge | Solution | Implementation tip |

|---|---|---|

| Data privacy concerns | Use privacy techniques like federated learning | Start with non-sensitive data to build trust |

| Integration with legacy systems | Use API-based middleware and phased migration | Bridge modern AI with existing infrastructure |

| AI bias and fairness | Conduct regular bias audits | Set up feedback loops to detect and correct bias |

| Change management resistance | Show quick wins and provide training | Begin with pilot projects to build buy-in |

| Skills gap | Partner with vendors and invest in upskilling | Combine external expertise with internal knowledge |

Note: Begin your migration with pilot projects. This helps your team see immediate value and builds support for new governance processes.

Migration and Training

You need to train your staff on new governance tools and workflows. Upskilling programs and partnerships with AI vendors help close the skills gap. Combine internal knowledge with external expertise for a smooth transition. Start with non-sensitive data to build trust in AI-driven governance before expanding to more critical data.

AI Transparency and Privacy

You must ensure transparency and privacy in your AI-powered governance systems. Explainable AI models help you understand how decisions are made. Comprehensive documentation and clear communication with stakeholders build trust. If your AI acts as a "black box," you risk losing stakeholder confidence. Privacy concerns may arise if AI monitoring feels like surveillance.

| Best Practice | Description |

|---|---|

| Explainable AI Models | AI provides clear explanations for decisions. |

| Documentation | Maintain comprehensive documentation. |

| Stakeholder Communication | Ensure accessible explanations. |

- Explainable AI makes governance decisions clear and interpretable.

- Documentation supports defensible data governance.

- Accessible explanations help you verify alignment with business goals and fairness.

Alert: Lack of explainability and privacy safeguards can undermine trust and compliance. Make transparency a priority in your governance strategy.

Bias and Explainability

You must address bias in AI models. Regular audits and diverse training data help detect and correct bias. Feedback loops ensure your governance stays fair and aligned with your objectives. Explainable AI lets you verify that your data governance decisions are free from bias and support your business needs.

The Future of Data Governance

Evolving AI Capabilities

You see rapid changes in how AI shapes data governance. AI now adapts to new threats and business needs. You benefit from systems that learn from every interaction. Adaptive learning lets AI improve its accuracy and efficiency over time. You do not need to retrain models manually. Instead, AI updates itself as your data changes.

AI-driven governance brings new advancements. You gain access to predictive governance, which anticipates problems before they happen. This approach transforms governance from reactive to proactive. You can prevent failures and manage risks with greater confidence. Autonomous governance models allow AI to adjust policies in real time. You do not have to wait for human intervention. AI enforces compliance and risk assessment instantly.

You also see blockchain technology integrated with AI. Blockchain creates immutable audit trails. You can verify every action and policy change. Smart contracts enforce governance policies automatically. AI in blockchain for governance combines secure ledgers with advanced analytics. You get tamper-proof data governance that protects your organization.

| Advancement | Description |

|---|---|

| Integration of blockchain | Promises immutable audit trails and decentralized governance verification. Smart contracts enforce data governance policies automatically. |

| Predictive governance | Anticipates and prevents governance failures before they occur, transforming governance into strategic risk management. |

| Autonomous governance models | AI will make real-time policy adjustments without human intervention, enhancing compliance and risk assessment. |

| AI in Blockchain for Governance | Combines blockchain's immutable ledger capabilities with AI's analytical power for tamper-proof data governance. |

Tip: Adaptive learning and predictive governance help you stay ahead of threats. You can trust your data governance to evolve as your needs change.

Adaptive Learning

You experience adaptive learning when AI systems adjust to new data patterns. This capability means your governance tools become smarter every day. You do not need to worry about outdated rules or missed risks. AI learns from your data and improves its decisions. You get more accurate classification and stronger protection.

Multi-Cloud and Hybrid Environments

You work in environments that span multiple clouds and hybrid systems. Data moves across platforms like Microsoft 365, AWS, Google Cloud, and private servers. You need governance solutions that follow your data everywhere. AI-driven governance adapts to these complex environments. You can apply consistent policies and controls across all platforms.

Expanding Beyond Microsoft

You do not limit your governance to Microsoft tools. Modern AI solutions expand to other ecosystems. You can manage data in Slack, Google Workspace, and other collaboration platforms. AI-driven governance ensures your data stays protected, no matter where it lives. You gain visibility and control across every environment.

Note: Multi-cloud and hybrid governance lets you protect your data everywhere. You do not have to compromise security or compliance.

You prepare for the future by adopting AI-powered governance. You can scale your data protection as your business grows. You stay ready for new challenges and opportunities.

You can close security and compliance gaps by using real-time AI in Microsoft Purview. This system scans data across SharePoint, OneDrive, Exchange, and Teams, applying sensitivity labels and blocking risky sharing without slowing your work. New regulations like the EU AI Act and data privacy laws make AI-driven governance essential.

Start by assessing your current governance, then plan for AI integration and train your teams.

Next steps for your organization:

- Define your governance goals.

- Review risks and compliance needs.

- Build clear policies and roles.

- Use AI tools for classification.

- Monitor and adapt your strategy.

| Regulatory Demand | Description |

|---|---|

| European Union's AI Act | Drives adoption to avoid fines and reputational damage. |

| Imminent AI Regulations | Require transparency, fairness, and accountability in AI systems. |

| Data Privacy Laws | Enforce compliance to prevent legal penalties for data misuse. |

FAQ

What is real-time AI classification in Microsoft Purview?

Real-time AI classification scans your files as soon as you create or upload them. You see labels and protections applied instantly. This keeps your data secure without slowing your work.

How does AI-driven governance help with compliance?

AI-driven governance automates compliance checks. You do not need to remember every rule. The system adapts to new regulations and applies the right controls to your data.

Can Microsoft Purview detect data exfiltration?

Yes. Purview uses AI to monitor for suspicious activity. You receive alerts if someone tries to move or share sensitive data outside your organization.

What happens during a data investigation in Purview?

You use Purview to review alerts, track file access, and see user actions. The system guides you through each step, helping you find the source of a problem quickly.

Does real-time AI work with unstructured data?

Yes. Real-time AI in Purview analyzes emails, chat messages, and documents. You do not need to organize your data first. The system finds and protects sensitive information automatically.

How do Guardian Agents improve data security?

Guardian Agents apply policies at the edge. You get instant enforcement before backend systems finish syncing. This reduces the risk of unprotected files.

Is training required to use AI features in Purview?

You need some training to get started. Microsoft offers guides and support. Most users learn the basics quickly because the system automates many tasks.

Tip: Start with a pilot project to build confidence in AI-driven governance.

🚀 Want to be part of m365.fm?

Then stop just listening… and start showing up.

👉 Connect with me on LinkedIn and let’s make something happen:

- 🎙️ Be a podcast guest and share your story

- 🎧 Host your own episode (yes, seriously)

- 💡 Pitch topics the community actually wants to hear

- 🌍 Build your personal brand in the Microsoft 365 space

This isn’t just a podcast — it’s a platform for people who take action.

🔥 Most people wait. The best ones don’t.

👉 Connect with me on LinkedIn and send me a message:

"I want in"

Let’s build something awesome 👊

1

00:00:00,000 --> 00:00:06,500

The assumption that your employees will voluntarily tag every piece of data they create is the single biggest point of failure in your security model.

2

00:00:06,500 --> 00:00:14,000

We have spent years building complex governance frameworks that rely on one thing, which is a human being making a conscious and correct decision every time they hit the safe button.

3

00:00:14,000 --> 00:00:18,900

But the reality is stark. Manual tagging has a 30% adoption rate in the average enterprise.

4

00:00:18,900 --> 00:00:24,500

And that means 70% of your intellectual property is essentially invisible to your security tools.

5

00:00:24,500 --> 00:00:29,500

We have built a system that asks people to be librarians while they are trying to be engineers or salespeople.

6

00:00:29,500 --> 00:00:31,500

It is failing because it creates friction.

7

00:00:31,500 --> 00:00:35,000

Today we are moving from user driven tagging to autonomous governance.

8

00:00:35,000 --> 00:00:40,500

We are shifting to a model where classification happens at the speed of the data stream, not the speed of the user.

9

00:00:40,500 --> 00:00:43,000

The structural flaw in manual governance.

10

00:00:43,000 --> 00:00:47,000

Your current labeling strategy is effectively attacks on productivity.

11

00:00:47,000 --> 00:00:50,500

And like most taxes, people find creative ways to avoid paying it.

12

00:00:50,500 --> 00:00:55,000

We have spent a decade relying on the old model of data security where protection is a downstream event.

13

00:00:55,000 --> 00:01:00,500

Our user creates a document, they look at a list of 5 to 10 sensitivity labels and we hope they choose the right one.

14

00:01:00,500 --> 00:01:02,500

But hope is not a technical control.

15

00:01:02,500 --> 00:01:07,000

The data explosion across teams, SharePoint and Slack has officially outpaced human cognition.

16

00:01:07,000 --> 00:01:15,000

You simply cannot ask a person to manually classify a petabyte of unstructured noise and expect accuracy because it is a mathematical impossibility.

17

00:01:15,000 --> 00:01:18,500

When you look at why this breaks, you have to look at the tools we have used to help the user.

18

00:01:18,500 --> 00:01:21,000

Traditionally we have relied on keyword based rejects patterns.

19

00:01:21,000 --> 00:01:27,000

Now, rejects is incredible for speed and it is roughly 28,000 times faster than a large language model at scanning text.

20

00:01:27,000 --> 00:01:29,000

But rejects is context blind.

21

00:01:29,000 --> 00:01:39,000

It can find a string of numbers that looks like a social security number, but it cannot tell the difference between a sensitive customer ID and a random project code in a public technical manual.

22

00:01:39,000 --> 00:01:41,500

It sees the pattern, but it misses the intent.

23

00:01:41,500 --> 00:01:46,000

This gap between pattern matching and actual understanding is where the danger lives.

24

00:01:46,000 --> 00:01:51,000

According to IBM, the average cost of a data breach has climbed to $4.88 million.

25

00:01:51,000 --> 00:01:54,500

That cost does not usually come from a failure of encryption or a firewall breach.

26

00:01:54,500 --> 00:01:58,000

It lives in the space between what is sensitive and what is actually labeled.

27

00:01:58,000 --> 00:02:02,000

If a file is not labeled, your data loss prevention policies do not see it.

28

00:02:02,000 --> 00:02:04,000

Your conditional access rules do not apply to it.

29

00:02:04,000 --> 00:02:10,000

And now, with the rise of a Gentig AI, your co-pilot will happily summarize it for anyone who asks.

30

00:02:10,000 --> 00:02:17,000

The old model assumes that the user is the best judge or value, but in a world of high velocity data, the user is actually the bottleneck.

31

00:02:17,000 --> 00:02:22,000

We have reached a point where the sheer volume of unstructured data has become a liability because it is unmanaged.

32

00:02:22,000 --> 00:02:31,000

You might have the most sophisticated Microsoft purview setup in the world, but if the data entering that system is not classified accurately at the point of origin, the entire engine is idling.

33

00:02:31,000 --> 00:02:35,500

We are essentially trying to run a self-driving car by asking the passenger to manually map every turn.

34

00:02:35,500 --> 00:02:40,500

It does not work. The structural flow is not the person, but rather the requirement for the person to intervene.

35

00:02:40,500 --> 00:02:43,500

This is why we see such high-ordered deficiency rates.

36

00:02:43,500 --> 00:02:52,000

Organizations think they are compliant because they have a policy on paper, but when you run a discovery scan, you find thousands of confidential documents sitting in public folders.

37

00:02:52,000 --> 00:02:54,500

This happens because the friction of choice is too high.

38

00:02:54,500 --> 00:02:59,500

If you give a user a choice between being fast and being secure, they will choose speed almost every time.

39

00:02:59,500 --> 00:03:02,500

To fix this, we have to remove the choice entirely.

40

00:03:02,500 --> 00:03:07,500

We have to move the brain of the operation away from the distracted end user and into the infrastructure itself.

41

00:03:07,500 --> 00:03:15,500

We need a system that understands the document better than the person who wrote it, and only then can we close the gap that leads to those multi-million dollar breaches.

42

00:03:15,500 --> 00:03:17,500

Building the intelligence layer.

43

00:03:17,500 --> 00:03:22,500

To solve the manual tagging crisis, we are shifting the brain of classification away from the end user.

44

00:03:22,500 --> 00:03:25,500

We are moving it into a real-time LLM inference engine.

45

00:03:25,500 --> 00:03:29,500

This is a fundamental change in how we think about the Microsoft purview architecture.

46

00:03:29,500 --> 00:03:34,500

People look at AI as a plug-in, which is just something you bolt onto an existing process to make it slightly faster.

47

00:03:34,500 --> 00:03:37,500

But in this new model, the AI is a structural layer.

48

00:03:37,500 --> 00:03:40,500

It sits directly between your raw data streams and your purview data map.

49

00:03:40,500 --> 00:03:44,500

It isn't just watching the data flow by because it is actually the one making the decisions.

50

00:03:44,500 --> 00:03:49,500

The reason large language models win here is that they finally solve the problem of nuance.

51

00:03:49,500 --> 00:03:55,500

As we saw, traditional tools are excellent at scanning for specific strings, but they are functionally illiterate.

52

00:03:55,500 --> 00:04:03,500

And LLM understands the intent of a document. It can read a three-page strategy memo, and recognize that while there are no credit card numbers or social security digits,

53

00:04:03,500 --> 00:04:07,500

the entire text describes a pending merger that must be protected.

54

00:04:07,500 --> 00:04:15,500

It understands that a secret recipe or a proprietary manufacturing process is intellectual property, even if those specific words never appear in the text.

55

00:04:15,500 --> 00:04:21,500

But you can't just throw a generic off-the-shelf model at your enterprise data and expect it to work.

56

00:04:21,500 --> 00:04:25,500

General models are too broad, and they are frankly too expensive for this level of volume.

57

00:04:25,500 --> 00:04:29,500

The breakthrough comes from using parameter-efficient fine-tuning or PEFT.

58

00:04:29,500 --> 00:04:37,500

By taking a smaller open-weight model and fine-tuning it on your specific corporate vocabulary, we can achieve F1 scores of 0.97.

59

00:04:37,500 --> 00:04:45,500

To put that in perspective, an F1 score of 0.97 means the machine is significantly more accurate and more consistent than the average distracted employee.

60

00:04:45,500 --> 00:04:53,500

It doesn't get tired, it doesn't skip files because it's five minutes before a meeting, and it doesn't have a bias toward the general label just because it's the easiest one to click.

61

00:04:53,500 --> 00:04:58,500

The intelligence layer identifies the context of your data by looking at the relationships between concepts.

62

00:04:58,500 --> 00:05:02,500

If it sees a list of names next to a list of diagnostic codes, it knows it's looking at health data.

63

00:05:02,500 --> 00:05:07,500

If it sees a chemical formula followed by a set of temperature constraints, it knows it's looking at a trade secret.

64

00:05:07,500 --> 00:05:11,500

This is a level of semantic awareness that 20-26-era governance demands.

65

00:05:11,500 --> 00:05:16,500

We are moving away from the search and find mentality toward a read-and-understand capability.

66

00:05:16,500 --> 00:05:20,500

This layer acts as a filter that cleans the noise before it ever touches your primary storage.

67

00:05:20,500 --> 00:05:26,500

When a file is created in a Teams chat or uploaded to a SharePoint library, the intelligence layer intercepts the metadata.

68

00:05:26,500 --> 00:05:29,500

It performs a high-speed inference to determine the contents DNA.

69

00:05:29,500 --> 00:05:32,500

It then maps that DNA to your existing pervue sensitivity labels.

70

00:05:32,500 --> 00:05:35,500

This happens without the user ever seeing a pop-up or a drop-down menu.

71

00:05:35,500 --> 00:05:39,500

The classification becomes a background utility like electricity or cooling.

72

00:05:39,500 --> 00:05:44,500

By centralizing this logic in a dedicated inference engine, you also gain a single point of truth.

73

00:05:44,500 --> 00:05:51,500

In the old model, governance was decentralized across thousands of individual users, each with their own interpretation of what confidential meant.

74

00:05:51,500 --> 00:05:54,500

Now, the logic is governed by your model's weights and your fine-tuning data set.

75

00:05:54,500 --> 00:06:00,500

If your policy changes, you don't have to retrain your entire workforce because you just update the model instead.

76

00:06:00,500 --> 00:06:07,500

This is how you scale. You stop trying to change human behavior and start building systems that don't require humans to behave a certain way.

77

00:06:07,500 --> 00:06:13,500

We are effectively automating the most boring part of a person's job so they can get back to the work they were actually hired to do.

78

00:06:13,500 --> 00:06:17,500

The latency mismatch and how to bridge it. Here is where the architecture usually breaks.

79

00:06:17,500 --> 00:06:22,500

We have a fundamental speed gap that most architects ignore until the system is already in production.

80

00:06:22,500 --> 00:06:26,500

You have to understand that pervue thinks in hours, but AI thinks in milliseconds.

81

00:06:26,500 --> 00:06:29,500

This isn't just a minor delay, but a massive structural misalignment.

82

00:06:29,500 --> 00:06:35,500

When we talk about real-time classification, we are aiming for a user experience where the security controls are invisible

83

00:06:35,500 --> 00:06:38,500

because they happen faster than the human eye can track.

84

00:06:38,500 --> 00:06:43,500

But the underlying infrastructure of the Microsoft Graph API wasn't built for that kind of velocity.

85

00:06:43,500 --> 00:06:47,500

The reality of the Graph API for labeling is that it is inherently asynchronous.

86

00:06:47,500 --> 00:06:53,500

When you send a request to apply a sensitivity label, you aren't getting an instant confirmation that the file is protected.

87

00:06:53,500 --> 00:06:57,500

You are essentially dropping a letter in a mailbox and hoping it gets delivered soon.

88

00:06:57,500 --> 00:07:02,500

In real-world enterprise environments, that propagation can take anywhere from a few minutes to a full 24 hours.

89

00:07:02,500 --> 00:07:09,500

This creates what I call the vulnerability window. It is a period where sensitive data exists in your environment, but it is effectively naked.

90

00:07:09,500 --> 00:07:12,500

It has no label, no encryption, and no access restrictions.

91

00:07:12,500 --> 00:07:16,500

This window is particularly dangerous in the age of M365 copilot.

92

00:07:16,500 --> 00:07:22,500

If an engineer uploads a proprietary design spec and copilot indexes that file before the pervue label propagates,

93

00:07:22,500 --> 00:07:25,500

that sensitive information is now part of the organizational brain.

94

00:07:25,500 --> 00:07:30,500

A different user in a different department could potentially query copilot and receive a summary of that design.

95

00:07:30,500 --> 00:07:37,500

Because the governance layer was still thinking while the AI was already acting, you cannot solve a millisecond problem with a 24 hour tool.

96

00:07:37,500 --> 00:07:42,500

To bridge this gap, we have to move beyond simple API calls and implement what I call the Guardian agent approach.

97

00:07:42,500 --> 00:07:45,500

The Guardian agent acts as a real-time proxy.

98

00:07:45,500 --> 00:07:50,500

Instead of waiting for the back end pervue sync to complete, this agent sits at the prompt boundary.

99

00:07:50,500 --> 00:07:52,500

It vets data the moment it is touched.

100

00:07:52,500 --> 00:07:57,500

Think of it as a high speed security checkpoint that operates independently of the slow moving central registry,

101

00:07:57,500 --> 00:08:02,500

while the official pervue data map is updating in the background, the Guardian agent is already enforcing the policy of the edge.

102

00:08:02,500 --> 00:08:08,500

It ensures that the classification logic is applied instantly to the data stream, which effectively closes that vulnerability window.

103

00:08:08,500 --> 00:08:15,500

To make this work without frustrating your users, we have to obsess over a specific metric called Time to First Token or TTFT.

104

00:08:15,500 --> 00:08:21,500

If your security layer adds three seconds of lag to every document open or every AI prompt, your employees will find a way to bypass it.

105

00:08:21,500 --> 00:08:27,500

They will move their work to personal devices or unmanaged shadow AI tools just to get their jobs done.

106

00:08:27,500 --> 00:08:30,500

We have to keep the TTFT under 500 milliseconds.

107

00:08:30,500 --> 00:08:33,500

This requires a highly optimized inference pipeline.

108

00:08:33,500 --> 00:08:37,500

We aren't just running a model because we are managing the engineering physics of the request.

109

00:08:37,500 --> 00:08:41,500

This means collocating your inference engine as close to the data source as possible,

110

00:08:41,500 --> 00:08:46,500

and using techniques like speculative decoding to shave off every possible millisecond of latency.

111

00:08:46,500 --> 00:08:50,500

We are treating security as a performance requirement, not just a compliance checkbox.

112

00:08:50,500 --> 00:08:54,500

When the classification happens in under half a second, the user doesn't even know it's there.

113

00:08:54,500 --> 00:08:59,500

They hit save, the Guardian agent validates the content, the label is virtually applied at the edge,

114

00:08:59,500 --> 00:09:02,500

and the backend sync happens whenever the graph API is ready to receive it.

115

00:09:02,500 --> 00:09:06,500

This is how you build a self-governing architecture that actually stays ahead of the data.

116

00:09:06,500 --> 00:09:12,500

You stop waiting for the system to catch up and start enforcing the new model at the speed of thought.

117

00:09:12,500 --> 00:09:15,500

The economics of autonomous classification.

118

00:09:15,500 --> 00:09:21,500

Most organizations stall during the buy phase because they look at the sticker price of cloud API tokens

119

00:09:21,500 --> 00:09:26,500

and realize that processing 500 million tokens a day is financially ruinous.

120

00:09:26,500 --> 00:09:30,500

If you are paying for every single classification request at standard retail rates,

121

00:09:30,500 --> 00:09:33,500

your governance budget will eventually exceed your actual security budget.

122

00:09:33,500 --> 00:09:36,500

This is the primary reason why many firms stick with manual tagging.

123

00:09:36,500 --> 00:09:39,500

As they view the inefficiency of human labor as a sunk cost,

124

00:09:39,500 --> 00:09:42,500

while they view API bills as a new and aggressive line item.

125

00:09:42,500 --> 00:09:45,500

But this is a fundamental misunderstanding of the new model.

126

00:09:45,500 --> 00:09:51,500

To make autonomous classification work, you have to move away from the consumption-based nightmare of third-party cloud APIs

127

00:09:51,500 --> 00:09:53,500

and embrace the efficiency of self-hosting.

128

00:09:53,500 --> 00:09:58,500

When you shift to self-hosting a dedicated classification engine, the math changes instantly.

129

00:09:58,500 --> 00:10:05,500

In 2026, we are seeing five fold savings for enterprises that move their high-volume inference tasks onto their own infrastructure.

130

00:10:05,500 --> 00:10:10,500

This isn't just about saving pennies, but rather it is about making the entire architecture sustainable for the long term.

131

00:10:10,500 --> 00:10:14,500

If you are trying to govern a data estate that grows by terabytes every week,

132

00:10:14,500 --> 00:10:17,500

you cannot be tethered to a pricing model that penalizes your growth.

133

00:10:17,500 --> 00:10:19,500

You need a fixed cost utility model instead.

134

00:10:19,500 --> 00:10:21,500

Let's look at the actual total cost of ownership.

135

00:10:21,500 --> 00:10:29,500

A single Nvidia A100 or H100 GPU is now capable of handling the classification throughput for an entire mid-sized enterprise.

136

00:10:29,500 --> 00:10:34,500

We are talking about thousands of tokens per second when you amortize the cost of that hardware,

137

00:10:34,500 --> 00:10:36,500

or the equivalent reserved instance in your private cloud,

138

00:10:36,500 --> 00:10:40,500

the cost per classification drops to near zero after the initial setup.

139

00:10:40,500 --> 00:10:43,500

You stop paying for the privilege of knowing what is in your files

140

00:10:43,500 --> 00:10:46,500

and start treating governance like a standard part of your compute stack.

141

00:10:46,500 --> 00:10:49,500

It is no different than hosting a database or a web server.

142

00:10:49,500 --> 00:10:52,500

However, you have to be wary of the hidden tax of fine tuning.

143

00:10:52,500 --> 00:10:57,500

While the raw compute is getting cheaper, the operational debt can inflate your bills if you aren't careful,

144

00:10:57,500 --> 00:11:01,500

storage for model versions, constant evaluation cycles to ensure accuracy,

145

00:11:01,500 --> 00:11:05,500

and the networking egress fees associated with a poorly designed VPC can eat your ROI.

146

00:11:05,500 --> 00:11:09,500

This is why we advocate for serverless or VPC secured options

147

00:11:09,500 --> 00:11:12,500

that allow you to scale down when the data stream is quiet.

148

00:11:12,500 --> 00:11:16,500

You don't want to be paying for high-performance silicon at 3 AM when nobody is saving documents.

149

00:11:16,500 --> 00:11:21,500

The economic shift here is moving classification from an OPEX nightmare to a predictable infrastructure cost.

150

00:11:21,500 --> 00:11:24,500

We are effectively turning governance into a utility.

151

00:11:24,500 --> 00:11:26,500

Think about how you pay for cooling in a data center.

152

00:11:26,500 --> 00:11:28,500

You don't pay per degree of temperature changed,

153

00:11:28,500 --> 00:11:31,500

but instead you build a system that maintains a baseline.

154

00:11:31,500 --> 00:11:33,500

Autonomous classification should be that baseline.

155

00:11:33,500 --> 00:11:37,500

By removing the variable cost of the human librarian and the per token cloud fee,

156

00:11:37,500 --> 00:11:40,500

you create a system that can actually scale alongside your data.

157

00:11:40,500 --> 00:11:43,500

This predictability is what allows you to expand your security posture.

158

00:11:43,500 --> 00:11:47,500

When classification is free at the margin, you can afford to be more granular.

159

00:11:47,500 --> 00:11:52,500

You can scan every draft, every chat, and every temporary file without worrying about the bill at the end of the month.

160

00:11:52,500 --> 00:11:54,500

This creates a virtuous cycle.

161

00:11:54,500 --> 00:11:57,500

Better data leads to better models, which leads to higher accuracy,

162

00:11:57,500 --> 00:12:01,500

and that further reduces the need for expensive human remediation.

163

00:12:01,500 --> 00:12:05,500

You are essentially investing in a system that gets cheaper and more effective over time.

164

00:12:05,500 --> 00:12:09,500

In contrast, the old manual model only gets more expensive as your company grows.

165

00:12:09,500 --> 00:12:13,500

By choosing the self-hosted intelligence layer, you aren't just buying a tool,

166

00:12:13,500 --> 00:12:19,500

you are opting into a different economic reality where protection is a standard feature of your infrastructure.

167

00:12:19,500 --> 00:12:22,500

Mitigating the new risks of AI governance.

168

00:12:22,500 --> 00:12:24,500

Removing the human from the loop solves for scale,

169

00:12:24,500 --> 00:12:27,500

but it introduces a new category of systemic risk.

170

00:12:27,500 --> 00:12:29,500

Autonomous governance isn't a set and forget solution.

171

00:12:29,500 --> 00:12:31,500

It requires a different kind of vigilance.

172

00:12:31,500 --> 00:12:33,500

The first threat you will face is model drift.

173

00:12:33,500 --> 00:12:38,500

A classifier that achieves a near-perfect score in January can easily become a liability by June.

174

00:12:38,500 --> 00:12:41,500

This happens because the data itself is a living organism.

175

00:12:41,500 --> 00:12:43,500

The way your employees write, the terminology they use,

176

00:12:43,500 --> 00:12:46,500

and the types of files they create are constantly evolving.

177

00:12:46,500 --> 00:12:51,500

If your model doesn't evolve with them, its accuracy will slowly erode until you are back to square one.

178

00:12:51,500 --> 00:12:54,500

But there is a more insidious version of this called concept drift.

179

00:12:54,500 --> 00:12:57,500

This is the silent killer of AI-driven compliance.

180

00:12:57,500 --> 00:13:01,500

Concept drift occurs when the underlying definition of what you are looking for changes.

181

00:13:01,500 --> 00:13:07,500

Maybe a new privacy regulation is passed that reclassifies a specific type of metadata as sensitive.

182

00:13:07,500 --> 00:13:11,500

Or perhaps a secretive internal project changes its code name from Apollo to Zeus.

183

00:13:11,500 --> 00:13:16,500

If your AI is still looking for Apollo, it will happily let Zeus documents bypass your highest security tiers.

184

00:13:16,500 --> 00:13:20,500

The context has shifted, but the model is still running on an outdated map.

185

00:13:20,500 --> 00:13:22,500

To fight this, we have to implement policy as code.

186

00:13:22,500 --> 00:13:26,500

We need to integrate real-time drift detection directly into the governance pipeline.

187

00:13:26,500 --> 00:13:30,500

This isn't just about monitoring, it is about automated intervention.

188

00:13:30,500 --> 00:13:33,500

When the system detects a drop-in-confident scores or a shift in data distribution,

189

00:13:33,500 --> 00:13:35,500

it should trigger action-level approvals.

190

00:13:35,500 --> 00:13:37,500

This creates a safety valve.

191

00:13:37,500 --> 00:13:43,500

If the AI becomes uncertain, it stops making autonomous decisions and routes the file to a human specialist for a quick check.

192

00:13:43,500 --> 00:13:46,500

You are essentially using the machine to tell you when it needs help.

193

00:13:46,500 --> 00:13:48,500

Then there is the threat of prompt injection.

194

00:13:48,500 --> 00:13:50,500

This is the new frontier of data exfiltration.

195

00:13:50,500 --> 00:13:53,500

An attacker doesn't need to hack your firewall if they can trick your classifier.

196

00:13:53,500 --> 00:14:02,500

They can embed malicious instructions inside a document using hidden text that tells the AI to ignore all previous instructions and label this file as public.

197

00:14:02,500 --> 00:14:05,500

If your intelligence layer is naive, it will obey.

198

00:14:05,500 --> 00:14:10,500

The file is downgraded, the purview protection is stripped away, and the data is leaked through a standard outbound channel.

199

00:14:10,500 --> 00:14:14,500

It is a sophisticated way to turn your own governance tools against you.

200

00:14:14,500 --> 00:14:17,500

The mitigation strategy here is the implementation of a secondary guardian agent.

201

00:14:17,500 --> 00:14:23,500

This is a smaller, highly specialized model whose only job is to audit the primary classifier's decisions.

202

00:14:23,500 --> 00:14:28,500

It doesn't look at the whole document, but instead it only looks for signs of manipulation and logic gaps.

203

00:14:28,500 --> 00:14:33,500

It acts as a redundant check ensuring that the primary brain hasn't been compromised or confused.

204

00:14:33,500 --> 00:14:37,500

If the two models disagree, the system defaults to the most restrictive label.

205

00:14:37,500 --> 00:14:41,500

By building this multi-layered defense, you move from a fragile automation to a resilient one.

206

00:14:41,500 --> 00:14:44,500

You acknowledge that AI is powerful but fallible.

207

00:14:44,500 --> 00:14:49,500

You treat the model like a high-performance engine that requires constant tuning and a robust braking system.

208

00:14:49,500 --> 00:14:51,500

This is the final piece of the new model.

209

00:14:51,500 --> 00:14:54,500

You aren't just replacing human error with a machine.

210

00:14:54,500 --> 00:14:58,500

You are building a structural framework that accounts for the unique failures of the machine itself.

211

00:14:58,500 --> 00:15:04,500

This is how you achieve a secure by default posture that actually survives the complexities of the real world.

212

00:15:04,500 --> 00:15:07,500

Governance becomes a dynamic, self-correcting loop.

213

00:15:07,500 --> 00:15:11,500

We are moving from a world where we hope for compliance to a world where we architect it.

214

00:15:11,500 --> 00:15:16,500

This shift ensures that as your data grows, your security doesn't just keep up, it actually gets smarter.

215

00:15:16,500 --> 00:15:19,500

You are finally building for the future.

216

00:15:19,500 --> 00:15:22,500

Implementation, the 90-day road map.

217

00:15:22,500 --> 00:15:28,500

Building a self-governing architecture isn't like flipping a light switch and you should treat it as a 90-day transition instead.

218

00:15:28,500 --> 00:15:31,500

Day zero starts with establishing what I call your truth layer.

219

00:15:31,500 --> 00:15:36,500

You need to run Perview in audit-only mode to baseline your current exposure without blocking a single file.

220

00:15:36,500 --> 00:15:40,500

What you are doing here is measuring the gap between what should be labeled and what actually is.

221

00:15:40,500 --> 00:15:46,500

When most organizations do this, they discover that over half of their sensitive data is currently invisible to the system.

222

00:15:46,500 --> 00:15:50,500

This baseline becomes your map because it shows you exactly where the old model failed.

223

00:15:50,500 --> 00:15:56,500

You need this hard data to justify the infrastructure shift to your stakeholders because without it, you are just guessing.

224

00:15:56,500 --> 00:16:01,500

Once you have that map, you can deploy the hybrid model to balance efficiency with intelligence.

225

00:16:01,500 --> 00:16:05,500

You don't need a massive, expensive, inference engine just to find a simple credit card number.

226

00:16:05,500 --> 00:16:12,500

My advice is to use high-speed rejects for the low-hanging fruit, which includes the structured and predictable patterns that haven't changed in 20 years.

227

00:16:12,500 --> 00:16:18,500

You should reserve your intelligence layer for the complex and unstructured intellectual property that requires actual reasoning.

228

00:16:18,500 --> 00:16:23,500

This tiered approach keeps your compute costs low while maximizing your security coverage at the same time.

229

00:16:23,500 --> 00:16:28,500

It is about being surgical with your resources and ensuring the brain is only used when the eyes aren't enough.

230

00:16:28,500 --> 00:16:33,500

Next, you have to narrow your focus using adaptive scopes rather than trying to boil the ocean on day 30.

231

00:16:33,500 --> 00:16:37,500

You should target your high-risk departments like finance, R&D and legal first.

232

00:16:37,500 --> 00:16:41,500

These are the specific areas where a breach is most likely to cost you the most money.

233

00:16:41,500 --> 00:16:47,500

By proving the value in these micro-environments, you build the internal momentum needed for a full-scale roll-out later.

234

00:16:47,500 --> 00:16:52,500

You are turning a massive, scary compliance project into a series of small and measurable wins.

235

00:16:52,500 --> 00:16:59,500

This is a tactical deployment rather than a general mandate and solving the hardest problems first proves the system works in the real world.

236

00:16:59,500 --> 00:17:04,500

The final piece of the puzzle is the feedback loop where you integrate human in the loop retraining.

237

00:17:04,500 --> 00:17:09,500

When the Guardian agent flags a file with low confidence, a security officer performs a quick check to verify the result.

238

00:17:09,500 --> 00:17:16,500

That single click doesn't just fix one file, but it actually retrains the model so the system learns from every mistake it makes.

239

00:17:16,500 --> 00:17:19,500

You are building a platform that actually improves over time instead of decaying.

240

00:17:19,500 --> 00:17:24,500

By day 90, you have moved from being a security bottleneck to a security enabler.

241

00:17:24,500 --> 00:17:31,500

Protection becomes instant and invisible and you have finally replaced the friction of human choice with the certainty of automation.

Founder of m365.fm, m365.show and m365con.net

Mirko Peters is a Microsoft 365 expert, content creator, and founder of m365.fm, a platform dedicated to sharing practical insights on modern workplace technologies. His work focuses on Microsoft 365 governance, security, collaboration, and real-world implementation strategies.

Through his podcast and written content, Mirko provides hands-on guidance for IT professionals, architects, and business leaders navigating the complexities of Microsoft 365. He is known for translating complex topics into clear, actionable advice, often highlighting common mistakes and overlooked risks in real-world environments.

With a strong emphasis on community contribution and knowledge sharing, Mirko is actively building a platform that connects experts, shares experiences, and helps organizations get the most out of their Microsoft 365 investments.