Detecting Data Leaks Internally: Essential Strategies for Microsoft Environments

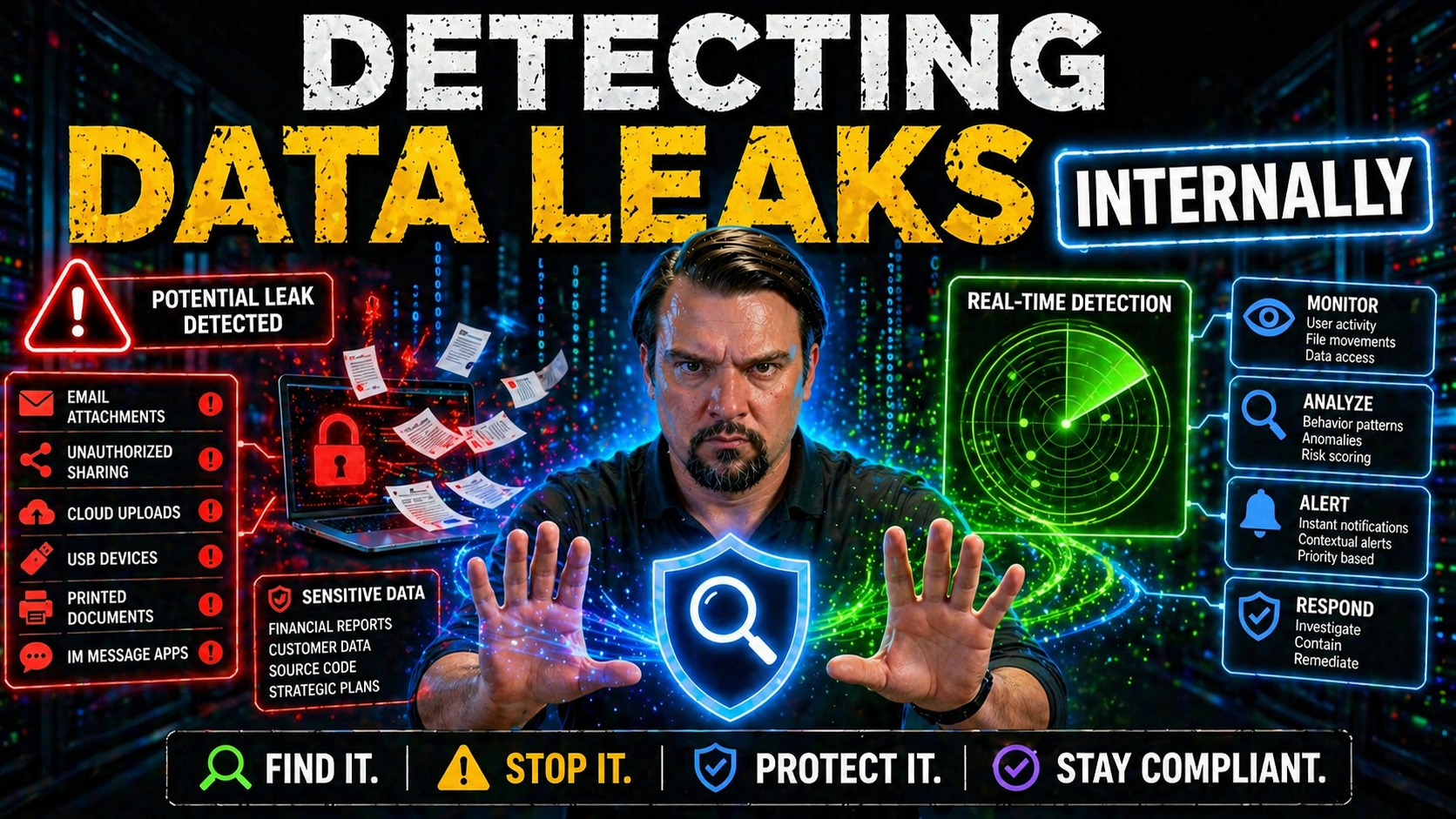

Detecting internal data leaks isn’t just about finding the obvious holes—it’s about understanding all the ways sensitive information can slip out quietly, often without anyone noticing. In Microsoft environments, from Azure to SharePoint to Power Platform, data can move in surprising and sometimes risky ways. This guide is your straight-talking roadmap to tackle those risks head-on.

You’ll learn to spot internal exposure before it turns into a full-blown breach, use the right Microsoft tools to monitor and lock down risky flows, and set up both technical and behavioral defenses. We’ll cover practical steps for prepping your testing, doing hands-on inspections, responding to leaks, and staying on top with long-term strategies. Whether you’re working with Microsoft 365, Teams, or any cloud service in the ecosystem, we’ll show you how to recognize red flags and build smarter defenses—without making your business grind to a halt.

Introduction: Understanding Internal Data Exposure and Risks

When people talk about data leaks, the first thing that usually comes to mind is hackers in hoodies or some external bad actor breaching your walls. But in real life, many leaks start from the inside: unnoticed mistakes, open permissions, or employees sharing more than they should. In Microsoft environments, the lines get even blurrier with features designed for collaboration and constant file syncing. That’s where the risk of internal data leakage gets real.

Internal data leaks aren’t just another version of a data breach. They’re not always about a deliberate attack; sometimes, it’s someone copying sensitive files to an open SharePoint site or misconfigured Teams channel. The data gets exposed, but it may not leave your network—at first. What makes these leaks so dangerous is that they’re often invisible until the damage is done, especially if users don’t know that what they’re sharing is sensitive or at risk.

Even a casual slip—like attaching the wrong spreadsheet to an all-hands email—can set off compliance alarms and trigger regulatory headaches. Early detection is your best defense. Spotting these leaks quickly protects not just compliance, but your company’s reputation and customer trust. In Microsoft 365 and Azure, strong access governance and clear ownership make all the difference (if you want a deep dive, check out this discussion on data access and governance).

Throughout this guide, we’ll dig into the mechanics of internal exposure, explain why leaks happen even in tightly managed systems, and offer Microsoft-focused solutions drawn from real-world cases. That way, you’re prepared for whatever comes your way, not just the textbook attacks.

Common Causes: Shadow IT, Misconfigured Cloud Storage, and Endpoint Vulnerabilities

- Shadow IT and Unapproved Apps. One of the biggest troublemakers is employees using apps or services that aren’t officially approved. If users connect their personal OneDrive, Google Drive, or even install unvetted Teams apps, sensitive files can slip through the cracks. Shadow IT opens your environment to rogue OAuth apps and external sharing that goes way beyond your organization’s intended boundaries. Microsoft 365 admins need to keep a close eye on what’s spinning up in their tenant—here’s a practical guide to rooting out shadow IT in Microsoft environments.

- Misconfigured Cloud Storage (Azure/OneDrive/SharePoint). Azure Storage containers, OneDrive folders, or SharePoint libraries that are left open or shared through “Anyone” links are ripe for accidental leakage. Sometimes developers or power users set permissions that are too broad, or forget to lock down demo environments. Misconfigurations in Power Platform, OneDrive, or SharePoint can expose sensitive documents, scripts, or entire directories to the wrong users, or even to the public web.

- Endpoint Vulnerabilities and Device Risks. Laptops and mobile devices often hold cached data or download files for offline access. If those endpoints aren’t patched, or if endpoint protection is missing, malware or skilled attackers can grab data directly, with no need to attack the cloud at all. Weak endpoint security can also let sensitive files spread silently to the wrong users or domains.

- Power Platform and Teams Weaknesses. Rapid development with connectors and automation (think Power Automate flows) can accidentally link sensitive business data to public connectors or non-business services. Similarly, open Teams channels and file attachments might seem harmless, until a confidential report pops up in a group chat with broad membership. Want the details on Power Platform controls? Check out these Power Platform DLP guidelines.

- Neglected Configuration Files and Source Code. Leaving credentials, connection strings, or internal hostnames exposed in shared repos or public folders is a classic way to leak the keys to the kingdom. These exposures often go unnoticed, especially with automated code pushes or testing setups.
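To make that last point concrete, here’s a minimal Python sketch of the kind of check you might run over files pulled from a shared repo or folder. The pattern set is an illustrative assumption (an Azure Storage `AccountKey=` value, a `Password=`/`Pwd=` pair in a connection string, a PEM private-key header), not a complete ruleset, and `find_secrets` and `scan_tree` are hypothetical helper names. It reports where a match is and what kind it is, never the secret itself.

```python
import re
from dataclasses import dataclass
from pathlib import Path

# Illustrative patterns only (an assumption); real secret scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "azure_storage_account_key": re.compile(r"AccountKey=[A-Za-z0-9+/=]{20,}"),
    "connection_string_password": re.compile(r"(?i)\b(?:password|pwd)\s*=\s*[^;\s]+"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
}


@dataclass
class Finding:
    path: str
    line_no: int
    kind: str  # which pattern matched; the secret value is deliberately not stored


def find_secrets(path: str, text: str) -> list[Finding]:
    """Hypothetical helper: report one finding per (line, pattern) match."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for kind, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append(Finding(path, line_no, kind))
    return findings


def scan_tree(root: Path, suffixes=(".json", ".config", ".ps1", ".yml", ".yaml")) -> list[Finding]:
    """Walk a folder and scan files whose extension suggests configuration or scripts."""
    results = []
    for p in root.rglob("*"):
        if p.is_file() and p.suffix.lower() in suffixes:
            results.extend(find_secrets(str(p), p.read_text(encoding="utf-8", errors="ignore")))
    return results
```

Fed a line such as `Server=db01;Database=hr;User Id=app;Password=...;`, it yields a single `connection_string_password` finding for that line number. A handful of regexes is only a starting point; treat it as a spot check, not a replacement for a dedicated scanner.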

Sensitive Data at Risk: What Can Leak Internally?

- Personally Identifiable Information (PII). Employee or customer names, addresses, and Social Security numbers are gold for fraud and identity theft, and they commonly turn up in HR files or shared spreadsheets.

- Credentials and Access Tokens. Passwords, API keys, and connection strings get saved all over the place. If they show up in Teams messages or unlocked files, attackers (or careless insiders) can do real damage fast.

- Intellectual Property and Business Documents. Product prototypes, source code, or secret project slides risk falling into the wrong internal hands, especially when stored in broadly accessible SharePoint libraries or email threads.

- Financial Records and Contracts. Budgets, forecasts, and sensitive client agreements are especially vulnerable in shared drives or when sent around for “quick review.”

- Unlabeled Sensitive Data. Even content that seems harmless—like random logs—can unintentionally leak confidential details if it contains internal hostnames, error messages, or support case information. Sensitivity labels in Microsoft 365 can help (explore governance best practices).
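Pattern matching alone flags a lot of noise, so detection tools typically pair a pattern with a validation step. The sketch below shows that idea for three of the categories above: a US SSN shape check that rejects numbers the SSA never issues, a payment card pattern confirmed with the Luhn checksum, and a simple email pattern. The regexes and the `classify` helper are assumptions for illustration, not how Microsoft 365 sensitive information types are actually defined.

```python
import re

SSN_RE = re.compile(r"\b(\d{3})-(\d{2})-(\d{4})\b")
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13-16 digits, optional space/hyphen separators
EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


def luhn_ok(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right; the sum must be divisible by 10."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def ssn_plausible(area: str, group: str, serial: str) -> bool:
    """Reject numbers the SSA never issues: area 000, 666 or 900-999, group 00, serial 0000."""
    a = int(area)
    return a not in (0, 666) and a < 900 and group != "00" and serial != "0000"


def classify(text: str) -> dict[str, int]:
    """Count validated matches per category (hypothetical helper)."""
    counts = {"ssn": 0, "payment_card": 0, "email": 0}
    for m in SSN_RE.finditer(text):
        if ssn_plausible(*m.groups()):
            counts["ssn"] += 1
    for m in CARD_RE.finditer(text):
        if luhn_ok(re.sub(r"\D", "", m.group())):
            counts["payment_card"] += 1
    counts["email"] = len(EMAIL_RE.findall(text))
    return counts
```

The checksum step is what keeps a random 16-digit order number from being reported as a credit card, which is the same reason labeled, validated detection beats raw keyword spotting.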

Preparation Before Testing: Securing Access and Defining Scope

Before you start any internal data leak testing, it’s critical to lay a solid foundation. First off, you need to secure the right permissions—don’t even open up a scan or audit unless you’ve got written approval and all the access you need to do it legally and safely. This isn’t just about red tape; it’s about avoiding accidental disruptions during your tests.

You’ll also want to define a clear scope. Document which systems, applications, and data types are included in the test, and which ones are strictly off-limits. Setting those rules upfront keeps your team focused and helps avoid scanning sensitive production data or stepping on toes in other departments. Think of it as building a safety net before you step out onto the wire.

It’s just as important to make sure your testing environment is isolated from production, or at least closely monitored. Accidental updates or test scripts can cause unintended side effects if you’re not careful. Turning on detailed audit logs—especially within Microsoft 365, Azure, and Teams—lets you trace what happens during your testing and helps prove you stayed within the rules. For a breakdown on compliance drift and audit logging, this podcast looks at hidden Microsoft 365 compliance gotchas.

Finally, coordinate with compliance officers, system owners, and key stakeholders before you kick off. Make sure everyone is aware, and knows how to reach you if something looks off. A well-prepped team, precise rules, and solid audit trails keep testing smooth—and keep the business running without nasty surprises.
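One way to keep tests honest is to write the agreed scope down as data your tooling checks before touching anything. This is a minimal sketch under assumed conventions: `EngagementScope`, its glob-style patterns, `require_in_scope`, and any site URLs you plug in are hypothetical, and exclusions always win over inclusions.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class EngagementScope:
    """Hypothetical record of the scope agreed in writing before testing starts."""
    approved_by: str
    in_scope: tuple[str, ...]           # glob patterns for targets you may test
    out_of_scope: tuple[str, ...] = ()  # explicit exclusions; these always win

    def allows(self, target: str) -> bool:
        # Matching is case-sensitive; normalize URLs before calling if needed.
        if any(fnmatchcase(target, p) for p in self.out_of_scope):
            return False
        return any(fnmatchcase(target, p) for p in self.in_scope)


def require_in_scope(scope: EngagementScope, target: str) -> None:
    """Fail loudly instead of quietly scanning something nobody approved."""
    if not scope.allows(target):
        raise PermissionError(f"{target} is outside the scope approved by {scope.approved_by}")
```

Calling `require_in_scope` at the top of every scan routine turns the written scope into a hard stop rather than a document people have to remember.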

Passive Recon: Observe Internal Data Flows in Emails and Messaging Platforms

Catching a data leak often starts by quietly watching how information flows inside your organization’s own walls. Instead of actively probing or scanning systems, passive reconnaissance means observing how data travels—especially through internal email, Teams chats, or cloud collaboration tools. This approach keeps operations undisturbed but surfaces signals that something unusual might be brewing under the radar.

Reviewing Exchange logs, Teams message histories, and Microsoft 365 audit records is a smart way to spot sudden bursts of file sharing, strange forwarding patterns, or the appearance of customer data in places it’s not supposed to be. Microsoft Purview’s audit tools provide tenant-wide user activity logs, revealing unexpected sharing events, mailbox access spikes, or attempts to move large files in or out of shared drives. Want to get granular with logs? This guide covers Purview audit techniques for catching problems before they snowball.

Pay special attention to usage spikes, files sent to group chats, or activity outside of business hours; all of these can hint at accidental (or deliberate) data exposure. For sensitive environments, upgrading from Microsoft Purview Audit (Standard) to Audit (Premium) gives you longer log retention and richer forensic detail, which really helps with insider threat investigations and compliance probes down the road.
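Once you’ve exported audit records (for example, from a Purview audit search), even a short script can surface these patterns. This sketch assumes each record has already been flattened into a user, an operation name, and a local timestamp; real exports nest most of the detail inside an `AuditData` JSON column. The watched operation names, the 08:00 to 18:00 weekday window, and the burst threshold of 50 events per user per hour are all assumptions to tune for your tenant.

```python
from collections import Counter
from datetime import datetime
from typing import Iterable, NamedTuple


class AuditEvent(NamedTuple):
    user: str
    operation: str
    time: datetime  # assumed already converted to the organization's local time


# Assumed operations of interest; check which activities your tenant actually records.
WATCHED = {"FileDownloaded", "AnonymousLinkCreated", "SharingSet"}


def flag_events(events: Iterable[AuditEvent], start_hour: int = 8, end_hour: int = 18,
                burst_threshold: int = 50) -> dict[str, list[str]]:
    """Return users with watched activity after hours and users with hourly bursts."""
    after_hours: Counter = Counter()
    per_user_hour: Counter = Counter()
    for e in events:
        if e.operation not in WATCHED:
            continue
        weekend = e.time.weekday() >= 5  # Saturday=5, Sunday=6
        if weekend or not (start_hour <= e.time.hour < end_hour):
            after_hours[e.user] += 1
        # Bucket by calendar hour to spot sudden bursts of activity.
        per_user_hour[(e.user, e.time.replace(minute=0, second=0, microsecond=0))] += 1
    bursts = {user for (user, _), n in per_user_hour.items() if n >= burst_threshold}
    return {"after_hours": sorted(after_hours), "bursts": sorted(bursts)}
```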

Finally, integrating network monitoring or behavioral analytics can help fingerprint encrypted app usage, highlighting data movement over channels like Slack, WhatsApp, or unauthorized SaaS even when you can’t read the actual content. And if you’re building a strict Zero Trust model, this podcast discusses how to design cross-platform security policies to shrink exposure windows and reduce risk from the start.

DevTools Network Request Inspection: Capture and Safely Inspect Browser Data Flows

Some of the sneakiest internal data leaks happen right in the browser—often under everyone’s noses. Modern web-based Microsoft apps (like SharePoint, Outlook Web, Power Apps, or custom company portals) rely on APIs to fetch and save data behind the scenes. That means sensitive details like user data, document contents, and access tokens can get bundled into JSON payloads or query parameters every time someone clicks around.

This is where browser DevTools come in handy. With a few clicks, you can capture all the traffic flowing in and out of your browser, filter for API requests, and spot sensitive information riding along in responses or headers. It’s not about actively breaking anything—it’s about seeing what any regular user might see, but analyzing it with a security lens. This method is especially valuable for catching issues that don’t show up in backend logs: say, an API returning too much data when you only needed a summary, or query strings revealing internal IDs and secrets.
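Chromium-based browsers such as Edge and Chrome let you export a DevTools Network capture as a HAR file, a JSON document whose `log.entries` list holds each request URL and, when captured with content, the response body. Here’s a minimal sketch that scans a HAR for token-shaped values in URLs and responses. The JWT pattern and the list of suspicious query parameter names are assumptions, `scan_har` is a hypothetical helper, and keep in mind that a HAR file itself contains cookies and tokens, so store and share it as sensitive evidence.

```python
import base64
import json
import re
from urllib.parse import parse_qsl, urlsplit

# JWT headers are base64url-encoded JSON, so they usually start with "eyJ".
JWT_RE = re.compile(r"eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+")
# Assumed query parameter names that should never carry secrets in a URL.
SUSPECT_PARAMS = {"access_token", "id_token", "token", "apikey", "api_key", "password", "sig"}


def scan_har(har: dict) -> list[tuple[str, str]]:
    """Return (url_without_query, finding) pairs; secret values are never copied out."""
    findings = []
    for entry in har.get("log", {}).get("entries", []):
        url = entry.get("request", {}).get("url", "")
        parts = urlsplit(url)
        base = f"{parts.scheme}://{parts.netloc}{parts.path}"
        for name, _value in parse_qsl(parts.query, keep_blank_values=True):
            if name.lower() in SUSPECT_PARAMS:
                findings.append((base, f"sensitive query parameter: {name}"))
        if JWT_RE.search(url):
            findings.append((base, "JWT in URL"))
        content = entry.get("response", {}).get("content", {})
        body = content.get("text") or ""
        if content.get("encoding") == "base64":  # HAR may store bodies base64-encoded
            body = base64.b64decode(body).decode("utf-8", errors="replace")
        if JWT_RE.search(body):
            findings.append((base, "JWT in response body"))
    return findings


def scan_har_file(path: str) -> list[tuple[str, str]]:
    with open(path, encoding="utf-8") as fh:
        return scan_har(json.load(fh))
```

Findings are keyed by URL with the query string stripped, so the output can go into a report without repeating the tokens it found.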

Pay attention to actions like document sharing, file downloading, or accessing admin features. These points often trigger requests that return big blobs of data, sometimes to less-privileged users by mistake. By following along with what users can do in the UI and pairing it up with what’s returned by backend APIs, you’ll quickly spot the disconnects that lead to leaks.

In the next section, we’ll break down exactly how to map user clicks to potential exposures and how to safely analyze and document anything suspicious—because sometimes, a few wrong lines in a web response can open the door wide to internal leakage.

Identifying Exposure: Quick Guide from UI Actions to Backend API Endpoints

- Map the User Action. Start by performing normal actions like searching for a file or viewing a profile inside your app. Note exactly what you’re doing and what features you’re touching.

- Capture Related Network Requests. With DevTools open, watch which API endpoints get called with each action. Save the full request and response data, especially for actions involving sensitive content.

- Analyze Returned Data for Overexposure. Check the response—even if you just asked for your own file, does the API return more than it should? Look for fields containing other users’ information, internal IDs, or confidential records accidentally included.

- Correlate with Permissions and Audit Logging. Does the returned information match the access rights assigned in Microsoft 365 or Azure AD? Enhanced audit trails can help spot when overexposed data is being accessed (see how advanced SharePoint auditing works here).

- Document and Report Safely. If you spot something questionable, follow internal bug reporting protocols. Screenshots and network logs are helpful, but redact or mask actual sensitive data in your report to avoid compounding the leak.
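Analyzing returned data for overexposure boils down to comparing what the UI needs with what the API actually sent. The sketch below assumes you’ve written down an allow-list of fields the page renders and that each returned record carries an owner field; `ownerId`, `extra_fields`, and `foreign_records` are hypothetical names, so map them to the real payload you captured.

```python
from typing import Any


def extra_fields(payload: Any, allowed: set[str], path: str = "$") -> list[str]:
    """List JSON paths of keys the UI never asked for (hypothetical allow-list check)."""
    found: list[str] = []
    if isinstance(payload, dict):
        for key, value in payload.items():
            child = f"{path}.{key}"
            if key not in allowed:
                found.append(child)  # report it; no need to descend further
            else:
                found.extend(extra_fields(value, allowed, child))
    elif isinstance(payload, list):
        for i, item in enumerate(payload):
            found.extend(extra_fields(item, allowed, f"{path}[{i}]"))
    return found


def foreign_records(records: list[dict], current_user: str, owner_key: str = "ownerId") -> int:
    """Count records owned by someone other than the requesting user."""
    return sum(1 for r in records if r.get(owner_key) not in (None, current_user))
```

If a “my files” call produces a path like `$.items[1].ssn`, or a non-zero foreign record count, that’s the UI-versus-API disconnect worth documenting.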

AI Leak Analyzer Solutions: Core Innovation and the Four-Stage Pipeline

If you’re ready for the big leagues of internal leak detection, it’s time to talk about AI-powered solutions. Traditional methods rely on set patterns or keyword spotting, but modern leak analyzers go much deeper. They use machine learning and content awareness to catch not just obvious (or labeled) sensitive data, but hidden exposures that static rules might miss—especially in sprawling Microsoft environments where scale and complexity are the norm.

A true AI leak analyzer brings automation to what would be an overwhelming human task. Think of a pipeline that extracts and inspects vast amounts of corporate email, Teams messages, files, and logs—breaking them down, understanding the context, tagging what matters, and scoring real risk. Microsoft’s cloud ecosystem generates huge volumes of structured and unstructured data, making context key: it’s not just “what is this file?” but “who owns it, where is it, and what business process is it part of?”

The magic lies in four main stages: retrieving content safely across all platforms, extracting relevant entities (like names, contracts, keys), applying contextual analysis based on location and usage, and finally classifying exposure so alerts make sense and don’t drown you in noise. Want to see how new data governance, analytics, and AI come together in Microsoft Fabric? This episode has the full breakdown on unified governance and AI integration.

In the next part, we’ll lay out exactly how each pipeline step works—what gets flagged, how context cuts through false positives, and how automated classification is essential for meaningful alerts—not just another alert fatigue nightmare.

Automated Leak Detection: Content Retrieval, Extraction, and Contextual Classification

- Content Retrieval—Pulling Data Safely. The analyzer starts by ingesting data across cloud services, emails, chat logs, and files—always respecting security policies and access rights. It’s designed to avoid introducing new leaks itself, pulling only what’s permitted and auditing every step. When you have mixed environments (including Microsoft 365, Power BI, and Fabric), safe data governance is a must—just like this breakdown explains.

- Entity Extraction—Finding What’s Sensitive. Next, AI parses the content to extract bits of interest: PII, financials, credentials, code, or business secrets. Built-in natural language processing and pattern matching can even spot entities hidden in unstructured data or poorly labeled files, minimizing what slips through the cracks.

- Context Establishment—Understanding the Story. Here, the analyzer links up who owns the data, what app or device is involved, and what “normal” usage looks like. If a sensitive file is stored in a finance folder accessed by HR, or if data crosses from a secure SharePoint to an open Teams channel, it’s flagged based on context—not just content. This drastically reduces false alarms.

- AI-Backed Classification—The Final Judgment. All those signals come together for intelligent classification. The analyzer scores the exposure: was the file meant to be shared, or is this a real leak? Machine learning models trained on previous incidents learn to tell genuine problems from harmless sharing, helping security teams avoid false-positive overload and focus on true threats.
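To show how the four stages hand off to each other, here’s a deliberately small Python sketch. It isn’t how any particular product works: retrieval is a filter over in-memory items standing in for platform connectors, extraction uses regexes instead of NLP, context is reduced to a single signal (how widely the location is shared), and classification uses hand-set weights and thresholds in place of a trained model. All names, weights, and audience levels are hypothetical.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Item:
    source: str    # e.g. "teams", "sharepoint" (illustrative labels)
    location: str  # where the content lives
    audience: str  # assumed scale: "private", "team", "org", "anyone"
    text: str
    entities: dict[str, int] = field(default_factory=dict)
    score: float = 0.0


# Regex stand-ins for NLP-based entity extraction.
ENTITY_RE = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "secret": re.compile(r"(?i)\b(?:password|pwd|accountkey)\s*=\s*\S+"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}
ENTITY_WEIGHT = {"email": 0.5, "secret": 5.0, "ssn": 3.0}  # hypothetical weights
AUDIENCE_WEIGHT = {"private": 0.1, "team": 0.4, "org": 0.7, "anyone": 1.0}


def retrieve(items: list[Item], allowed_sources: set[str]) -> list[Item]:
    """Stage 1: only read sources the scanner is authorized to access."""
    return [i for i in items if i.source in allowed_sources]


def extract(item: Item) -> Item:
    """Stage 2: count entity matches in the content."""
    item.entities = {k: len(p.findall(item.text)) for k, p in ENTITY_RE.items()}
    return item


def contextualize(item: Item) -> Item:
    """Stage 3: weight content sensitivity by how widely the location is shared."""
    sensitivity = sum(ENTITY_WEIGHT[k] * n for k, n in item.entities.items())
    item.score = sensitivity * AUDIENCE_WEIGHT[item.audience]
    return item


def classify(item: Item, alert_at: float = 3.0, review_at: float = 1.0) -> str:
    """Stage 4: fixed thresholds standing in for a trained classifier."""
    if item.score >= alert_at:
        return "alert"
    return "review" if item.score >= review_at else "ignore"


def run_pipeline(items: list[Item], allowed_sources: set[str]) -> list[tuple[str, str]]:
    return [(i.location, classify(contextualize(extract(i))))
            for i in retrieve(items, allowed_sources)]
```

With these weights, a file holding a storage key and shared with “Anyone” scores as an alert, while a single email address in a team chat stays below the review threshold; that gap is what context adds on top of raw content matching.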

Incident Response: Containing Internal Data Leaks and Preserving Traces

So the worst has happened: you’ve caught an internal data leak. What you do in the first moments determines how bad it gets—and whether regulators come knocking or your business reputation emerges in one piece. Incident response isn’t just for hackers and ransomware; it’s your playbook for locking down leaks, assessing what’s really happened, and preserving evidence for both investigation and compliance reporting.

Containment comes first. That usually means disabling access to compromised files or chat threads, resetting exposed credentials, and temporarily quarantining affected systems or accounts. You need to move quickly, but also carefully, so you don’t lose forensic evidence. This is especially true in Microsoft environments, where data may be synced, backed up, or mirrored across multiple services at once, sometimes even spanning external SaaS or partner integrations.

After plugging the hole, it’s time to forensically preserve all relevant traces. Download detailed logs, grab snapshots, and document actions taken—because you’ll need to show both what was accessed and how it was remediated. Don’t forget that business impact goes beyond lost data. What’s at stake is your organization’s trustworthiness, potential legal exposure, and the ability to keep operations humming while the mess is addressed. Security in modern cloud platforms like Dataverse relies on proper role-based access and strong governance—see how Dataverse and Entra ID practices protect against external leaks.
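A simple habit that makes preserved evidence defensible is recording a cryptographic hash of every exported log and snapshot at collection time, so you can later show the files weren’t altered. This minimal sketch walks an evidence folder and builds a SHA-256 manifest with Python’s standard library; the manifest fields and the `collected_by` label are assumptions, not a formal chain-of-custody standard.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def hash_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """SHA-256 of a file, read in chunks so large log exports don't exhaust memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(evidence_dir: Path, collected_by: str) -> dict:
    """Record path, size and hash for every file under evidence_dir."""
    files = sorted(p for p in evidence_dir.rglob("*") if p.is_file())
    return {
        "collected_by": collected_by,
        "collected_at_utc": datetime.now(timezone.utc).isoformat(),
        "files": [
            {"path": p.relative_to(evidence_dir).as_posix(),
             "bytes": p.stat().st_size,
             "sha256": hash_file(p)}
            for p in files
        ],
    }


def write_manifest(evidence_dir: Path, collected_by: str, out_file: Path) -> None:
    # Keep out_file outside evidence_dir so a later run never hashes the manifest itself.
    manifest = build_manifest(evidence_dir, collected_by)
    out_file.write_text(json.dumps(manifest, indent=2), encoding="utf-8")
```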

Next, you’ll need to work through communication and business continuity, and then put long-term defenses in place to prevent a repeat performance. The following sections walk through how to handle notifications, protect your business, and upgrade your security posture for the future.

Communicate Incident and Ensure Business Continuity

- Craft Transparent Internal Notifications. Quickly inform all relevant teams and executives about the incident. Use clear, non-alarmist language and include details on which data was affected, who can help, and immediate next steps. Draft templates ahead of time—quick reactions are easier with a plan in place.

- Notify External Stakeholders and Partners. If customer or partner data might be impacted, send concise, honest notifications. Be upfront about what you know, what’s being done, and when updates will follow. This manages expectations and preserves trust should the news leak further.

- Maintain Business Operations. Work with IT and operations staff to keep core systems running, using continuity planning to minimize downtime. Spin up clean environments if systems must go offline and communicate any business process impacts to leadership.

- Prepare for Legal and Compliance Fallout. Loop in legal counsel and risk management. Assess regulatory exposure and reporting requirements. For ongoing compliance monitoring, automation in Microsoft Defender for Cloud helps prevent and contain issues faster (see this Defender for Cloud compliance guide).

Long-Term Defenses Against Internal Data Leaks

- Data Loss Prevention (DLP) Policies. Enforce Microsoft 365 DLP across email, SharePoint, OneDrive, and Teams to block the accidental sharing of sensitive information. Align DLP policies to real business usage, and review rules regularly. For hands-on setup guidance, this podcast walks through Microsoft DLP configuration.

- Encryption in Transit and at Rest. Use Microsoft’s built-in encryption options to protect data on disk and while moving between services. Encryption can’t stop every leak, but it’ll frustrate attackers and help meet legal obligations.

- Conditional Access and Role-Based Permissions. Implement Microsoft Entra ID (formerly Azure AD) Conditional Access policies to ensure only the right people can reach sensitive areas, and review permissions regularly. Don’t over-provision: least privilege keeps the fallout small even if someone messes up.

- Continuous Governance and Ownership Reviews. Use tools like Microsoft Purview to keep tabs on sensitive data, assign clear ownership, and monitor for stale permissions or orphaned files. Governance isn’t “set and forget”—make it a recurring process for every team.

- Ongoing Security Awareness and Training. Teach employees how and why data leaks happen, what bad sharing looks like, and the real costs of cutting corners. Periodic refreshers and simulated phishing or leak exercises keep everyone sharp.

- Automate Routine Security Checks. Regularly scan for misconfigurations, broad sharing, or strange activity using Microsoft cloud reporting and PowerShell. Review what’s being logged (and what isn’t), and aim for defense-in-depth: one missed alert shouldn’t bring you down.
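As one example of an automated check, suppose you already export a sharing report to CSV on a schedule (from SharePoint admin reporting or your own PowerShell job). This sketch flags “Anyone” links older than a cutoff. The column names `Path`, `LinkType`, and `Created`, the ISO 8601 timestamp format, and the 30-day default are hypothetical; match them to whatever your export actually produces.

```python
import csv
import io
from datetime import datetime, timedelta, timezone
from typing import Optional


def stale_anyone_links(csv_text: str, max_age_days: int = 30,
                       now: Optional[datetime] = None) -> list[str]:
    """Return paths whose "Anyone" link was created more than max_age_days ago.

    Assumes hypothetical columns Path, LinkType and Created (ISO 8601 timestamps).
    If you pass `now`, it must be timezone-aware.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    flagged = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        if row["LinkType"].strip().lower() != "anyone":
            continue
        created = datetime.fromisoformat(row["Created"].strip())
        if created.tzinfo is None:
            created = created.replace(tzinfo=timezone.utc)  # treat naive timestamps as UTC
        if created < cutoff:
            flagged.append(row["Path"])
    return flagged
```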

Detection Tools (Easy to Advanced): Choosing the Right Solution for Microsoft Environments

- Browser DevTools and API Inspectors (Entry Level). Start with built-in browser tools to view page requests and responses. This lets you see exposed data in API payloads as you use Microsoft web apps. Great for low-hanging fruit and regular internal spot checks.

- Endpoint Detection and Response (EDR) Platforms. Deploy tools like Microsoft Defender for Endpoint across all corporate devices. These solutions monitor data movement on endpoints and watch for risky file transfers, anomalous downloads, and malware attempting exfiltration.

- Managed Detection and Response (MDR) and MSSPs. For organizations without a large security team, MDR providers and managed security service providers (MSSPs) offer 24/7 monitoring and specialized detection of internal threats, often integrating directly with Microsoft Defender and Purview.

- Enterprise DLP and Information Protection. Integrate Microsoft Purview and DLP across your environment for deep data classification, monitoring, and policy enforcement. They connect with conditional access and automate response (for implementation tips, see this Microsoft 365 security guide).

- Cloud-Native and Behavioral Analytics Solutions. Advanced options offer user behavior analytics—detecting not just what’s shared, but who’s sharing, when, and how patterns change. These pick up insider threats, Shadow IT, and subtle internal leaks missed by static controls. For adaptive Power Platform security, see this practical podcast on DLP insider moves.

Record Safe Proof of Concept and Internal Bug Reporting

- Use Redacted Screenshots and Data Dumps. When capturing evidence of a leak, always blur or truncate real sensitive data. Highlight just enough to show the issue without creating an accidental disclosure.

- Reproduce Issues Minimally. Demonstrate the problem using test or demo accounts whenever possible, limiting the fallout if your documentation gets shared wider internally.

- Follow Internal Reporting Templates. Use organization-approved forms or templates for security issues, listing the steps taken, affected systems, and immediate impact. Include timestamped logs and clear descriptions, but never drop proof into chat or email without encryption and secured access.

- Alert Governance and Security Teams Directly. Don’t assume someone else will escalate. Notify the right people (like your Microsoft 365 global admins or security response team) through formal, traceable channels, and follow up until the issue is acknowledged and being addressed.
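For the redaction step, a small helper applied to logs and data dumps before they go into a report reduces the chance of re-leaking what you found. The patterns below (JWTs, bearer tokens, `Password=`-style pairs, SSN-shaped numbers, and email addresses) are assumptions for illustration, and `redact` is a hypothetical helper; always eyeball the output, because regex-based redaction will miss formats it doesn’t know about.

```python
import re

# Assumed patterns, applied in order; extend them for identifiers specific to your tenant.
REDACTIONS = [
    (re.compile(r"eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+"), "[REDACTED-JWT]"),
    (re.compile(r"(?i)(bearer\s+)\S+"), r"\1[REDACTED]"),
    (re.compile(r"(?i)\b((?:password|pwd|accountkey)\s*=\s*)[^;\s]+"), r"\1[REDACTED]"),
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[REDACTED-SSN]"),
    # Keep the first character and the domain so reviewers can see which domain is involved.
    (re.compile(r"\b([\w.+-])[\w.+-]*@([\w-]+(?:\.[\w-]+)+)\b"), r"\1***@\2"),
]


def redact(text: str) -> str:
    """Mask sensitive values in evidence before it goes into a report."""
    for pattern, replacement in REDACTIONS:
        text = pattern.sub(replacement, text)
    return text
```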

Evaluating Vendor Security and Supply Chain Data Leak Risks

- Perform Security Questionnaires. Require third-party partners and SaaS vendors to complete security self-assessments that dig into their Azure tenancy, DLP policies, and incident response plans.

- Review Permissions and OAuth Scopes. Regularly audit what external apps are connected to your Microsoft 365 environment. Look for Shadow IT risks, especially with new AI-driven automation tools like Microsoft Foundry (learn about Foundry governance essentials here).

- Monitor Integrated Workloads Continuously. Use Microsoft Purview and Defender for Cloud Apps to track data movement into and out of third-party platforms, prioritizing apps with high access levels or frequent syncs.

- Enforce Data Classification and DLP for Partner Access. Don’t let vendors inherit blanket access—require strong classification and policy checks at both your end and theirs.
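Reviewing OAuth scopes gets easier if you pull the granted permissions into a list and sort by risk. In Microsoft Graph, delegated grants are exposed as `oAuth2PermissionGrant` objects whose `scope` property is a space-separated string of permission names (application permissions are recorded separately as app role assignments). The sketch below assumes you’ve already exported grants together with each app’s name and publisher verification status; the `AppGrant` type and the high-risk scope list are assumptions to adapt, not an official risk ranking.

```python
from dataclasses import dataclass

# Microsoft Graph permissions with broad data reach; an assumed starting list.
HIGH_RISK_SCOPES = {
    "Files.Read.All", "Files.ReadWrite.All", "Sites.Read.All", "Sites.ReadWrite.All",
    "Mail.Read", "Mail.ReadWrite", "Mail.Send", "Directory.ReadWrite.All",
}


@dataclass
class AppGrant:
    app_name: str
    publisher_verified: bool
    scope: str  # space-separated permission names, as in a Graph oAuth2PermissionGrant


def risky_grants(grants: list[AppGrant]) -> list[tuple[str, list[str]]]:
    """Return (app, risky scopes), unverified publishers first, then most risky scopes."""
    flagged = []
    for g in grants:
        hits = sorted(set(g.scope.split()) & HIGH_RISK_SCOPES)
        if hits:
            # False sorts before True, so unverified publishers come first.
            flagged.append((g.publisher_verified, -len(hits), g.app_name, hits))
    flagged.sort()
    return [(name, hits) for _, _, name, hits in flagged]
```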

Behavioral Analytics: Detecting Insider Threats with Anomaly Detection

Most data leaks don’t come screaming through the front door—they slip quietly out the back. That’s why behavioral analytics is so powerful for rooting out insider threats within Microsoft 365, Azure, and cloud-integrated setups. By studying how users typically work, modern tools pick up on oddball activity—like downloading too many files late at night or suddenly accessing new document libraries.

Think of it like setting a baseline for every user, role, or even department. Security systems learn what’s “normal”—maybe how often Steve in finance downloads monthly reports or how HR staff comb through employee files. When someone goes against that grain, the system flags it. You’ll want to establish these behavioral baselines early, so alarms don’t go off every time someone changes up their routine.

What sparks suspicion? Here are key examples: big data exports after hours, abnormal cloud logins from far-off locations, bulk downloads of sensitive files, or sudden upticks in API calls. Sometimes it’s accidental, sometimes it’s a malicious insider. Either way, catching it early makes all the difference.
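A per-user baseline can be as simple as the mean and standard deviation of a daily count, such as files downloaded. The sketch below is a plain statistical stand-in (a threshold of mean plus k standard deviations), not the models Microsoft’s own analytics use, and the 14-day minimum history, k = 3, and the floor on the deviation are assumptions to tune.

```python
from statistics import mean, pstdev


def is_anomalous(history: list[int], today: int, k: float = 3.0,
                 min_days: int = 14, min_sigma: float = 1.0) -> bool:
    """Flag today's count if it sits more than k standard deviations above the user's mean."""
    if len(history) < min_days:
        return False  # not enough history yet to know what "normal" looks like
    mu = mean(history)
    # Floor the deviation so a perfectly steady user doesn't alert on a tiny change.
    sigma = max(pstdev(history), min_sigma)
    return today > mu + k * sigma


def flag_users(baselines: dict[str, list[int]], today: dict[str, int], k: float = 3.0) -> list[str]:
    """Users whose activity today breaks their own baseline."""
    return sorted(u for u, n in today.items() if is_anomalous(baselines.get(u, []), n, k))
```

The minimum-history check is what keeps a new starter from tripping alarms on day one, which is the “establish baselines early” advice expressed in code.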

Tools like Microsoft Purview Audit go beyond your basic logs. They allow you to build thorough profiles of user actions across the Microsoft ecosystem, making forensic investigations and risk detection much sharper. The goal: Spot the weird stuff before data walks out the door for good.