Enterprise AI Risk Management: Strategies, Standards, and Solutions

Enterprise AI risk management is about containing AI-related risks before they get out of hand. As AI takes on a bigger role in your operations, especially across Microsoft 365, Azure, and the Copilot tools, the need grows to manage everything from compliance headaches to potential disasters in automation, data leaks, and business continuity.

This topic isn’t just a checkbox for compliance teams. It’s a living process that ties together frameworks, governance, cybersecurity, data controls, and incident response—all designed to help you avoid unwanted surprises (or lawsuits) as oversight and regulations pick up speed. Pulling it off means more than tech—it’s governance, transparency, and a long-term strategy to dodge operational, legal, and reputational damage as your AI footprint grows.

Throughout this guide, you’ll get a grounded tour through the core strategies, standards, and Microsoft-powered solutions you need to build a rock-solid AI risk posture—whether you’re wrangling agents, bots, or predictive models across complex environments.

AI Risk Management Framework

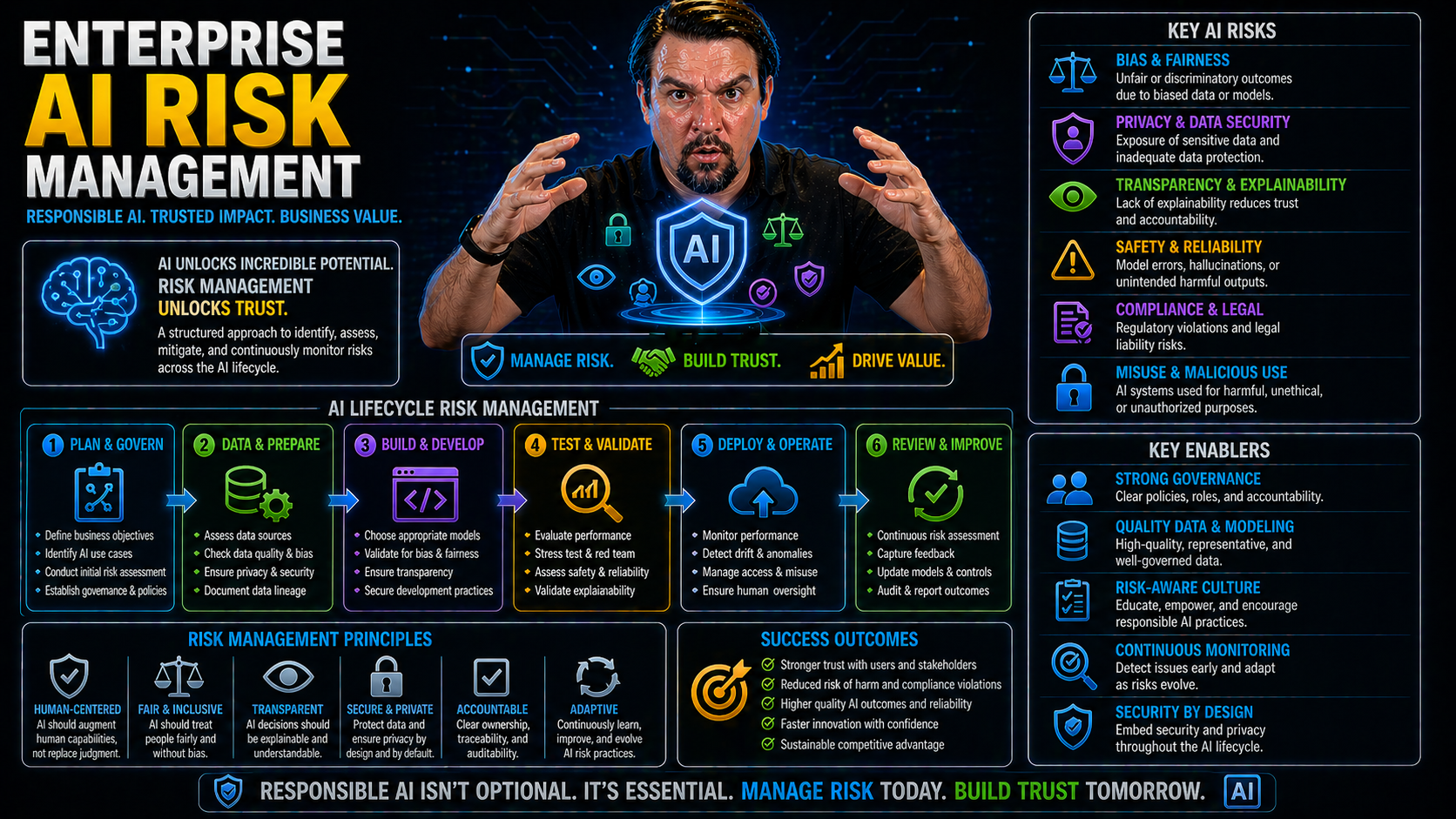

Definition: An AI Risk Management Framework is a structured set of principles, processes, and tools designed to identify, assess, mitigate, monitor, and govern risks associated with developing, deploying, and operating AI systems within an enterprise. It aligns technical controls, organizational policies, and legal or ethical requirements to ensure safe, reliable, and compliant AI use.

Short explanation: In the context of enterprise AI risk management, the framework provides a lifecycle approach that guides stakeholders through risk identification, evaluation, control selection, implementation, continuous monitoring, and reporting. It addresses key risk categories (data quality and governance, model robustness and accuracy, privacy and security, fairness and bias, explainability, and operational resilience) and defines roles, standards, metrics, and escalation paths. By embedding these practices, organizations can balance innovation with risk reduction, meet regulatory expectations, and build trust in AI-driven decisions.

Understanding AI Risk Management Frameworks and Standards

Before you can rein in the wild world of AI risk, you need a playbook—one that brings order to the chaos. That’s where risk management frameworks and standards step in. These frameworks, from trusted names like NIST, ISO/IEC, and industry regulators, aren’t just paperwork. They’re the backbone for every smart enterprise looking to govern their AI responsibly and keep operations safe.

With Microsoft-centric stacks running your clouds and critical workloads, understanding and aligning to standards becomes essential, especially when dealing with hybrid deployments and regulatory requirements crossing different regions. Each framework—whether it’s U.S.-centric or international—has its own approach to assessing, mitigating, and monitoring risks. Yet they all have one thing in common: providing structure, clarity, and actionable steps so your AI investments never wander off into the compliance wilderness.

You’ll see how these standards serve as guides and guardrails, helping you set up policies, governance, and controls tailored to the scale and sensitivity of your organization’s Microsoft workloads. In the next sections, we’ll break down the frameworks leading the way, why they matter, and how you can put them to work—without drowning in complexity.

NIST AI Risk Management Framework Implementation

- Familiarize Your Teams with NIST's Core Functions: The first step is to get everyone clear on the framework’s major pillars: govern, map, measure, and manage AI risks. “Govern” covers the policies and culture, “map” means figuring out how and where AI is used, “measure” digs into risk assessment, and “manage” means your mitigation actions. These pillars help you organize every phase of AI development and deployment.

- Documentation and Inventory of AI Systems: Start by listing all AI workloads, models, and data pipelines operating within your Microsoft environments. Ensure each model (from Copilot prompts to Azure ML models) has clear documentation on intended use, training data sources, and risk profiles. Good record-keeping is step one for both compliance and troubleshooting.

- Risk Identification and Mapping: Map out where AI is used in your workflows and the potential risks that come with each use case. Use NIST’s risk taxonomy to highlight outcomes like bias, adversarial attacks, or data leaks. In Microsoft setups, this means examining M365, Azure AI services, and Power Platform bots for sensitive integration points.

- Continuous Risk Assessment and Monitoring: Build repeatable assessment workflows that allow you to measure risk on a regular basis. Leverage Microsoft-native tools such as Azure Monitor, Sentinel, and Defender for Cloud to gain real-time telemetry, detect anomalies, and adjust controls as needed.

- Integrate with Governance and Compliance Programs: Embed the NIST guidance within existing governance board reviews, usage policies, and operational oversight processes. Regular updates and feedback loops keep your risk management adaptive as Microsoft rolls out new features—or as regulations shift.

- Incident Response and Reporting: Design protocols for documenting, escalating, and remediating AI incidents—model drift, data poisoning, or bad output. This includes “lessons learned” cycles and aligning Microsoft service logs with your NIST-inspired processes for audit and compliance reviews.
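
The inventory and documentation step above can be sketched as a small record type plus a completeness check. This is a minimal illustration, not a NIST-defined schema: the `AISystemRecord` fields, the `missing_documentation` helper, and the sample system names are all assumptions made for the example.

```python
from dataclasses import dataclass, field, fields

@dataclass
class AISystemRecord:
    """One inventory entry per AI workload (hypothetical schema)."""
    name: str
    platform: str  # e.g. "Azure ML", "Copilot", "Power Platform"
    intended_use: str = ""
    training_data_sources: list[str] = field(default_factory=list)
    risk_profile: str = ""

def missing_documentation(record: AISystemRecord) -> list[str]:
    """Return the names of fields that are still empty for this record."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]

# Made-up sample entries: one fully documented, one barely started.
inventory = [
    AISystemRecord("invoice-classifier", "Azure ML", "Route incoming invoices",
                   ["erp_exports"], "medium"),
    AISystemRecord("hr-faq-bot", "Power Platform"),
]

for record in inventory:
    gaps = missing_documentation(record)
    if gaps:
        print(f"{record.name}: missing {', '.join(gaps)}")
```

Running a check like this on a schedule gives a simple list of systems whose documentation would not survive an audit.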

ISO/IEC Standards and EU AI Act Compliance

- Understand the Landscape of International Standards: ISO/IEC 23894 provides guidance on AI risk management, and ISO/IEC 42001 sets requirements for an AI management system; together they cover managing, monitoring, and documenting AI risks across technology stacks. If you’re operating globally, these are your must-haves for auditing and process alignment, especially with Microsoft’s heavy international footprint.

- Map Internal Policies to Standard Requirements: Compare your current AI governance, security, and data protection policies with what the standards demand. This includes documenting model lifecycle, data provenance, and risk controls within tools like Microsoft Purview, Azure ML, or M365 compliance center.

- EU AI Act Readiness Assessment: The European Union’s AI Act introduces mandatory risk classification, algorithmic transparency, documentation demands, and major penalties for non-compliance. U.S.-based companies with any activity in Europe need to consider these as part of their regular program reviews and update deployment pipelines and Copilot scenarios accordingly.

- Develop & Document Action Plans: Use gap analysis to prioritize remediation—whether it’s adding audit trails, improving model explainability, or setting controls on sensitive model usage. Microsoft’s automation and compliance tools can streamline continuous verification and evidence collection, making audits less of a fire drill.

- Monitor Regulatory Changes: Standards and laws are in motion. Build in periodic reviews of ISO, IEC, and EU AI Act updates, adjusting your controls and policies as needed. Let your Microsoft stack work for you by leveraging policy management features and regulatory change monitoring for peace of mind as the world of AI regulation keeps evolving.
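
The gap analysis described above can be reduced to comparing required controls against implemented ones and sorting what is left by priority. The sketch below assumes a hypothetical set of control names and priority weights; neither comes from the ISO/IEC standards or the EU AI Act text.

```python
# Hypothetical control names with a priority weight (3 = most urgent).
requirements = {
    "audit_trail": 3,
    "model_explainability": 2,
    "risk_classification": 3,
    "data_provenance": 1,
}

# Controls your program already has evidence for (assumed for the example).
implemented = {"risk_classification", "data_provenance"}

def gap_analysis(requirements: dict[str, int], implemented: set[str]) -> list[tuple[str, int]]:
    """Return unmet controls, highest priority first, then alphabetically."""
    gaps = [(name, weight) for name, weight in requirements.items()
            if name not in implemented]
    return sorted(gaps, key=lambda gap: (-gap[1], gap[0]))

for name, weight in gap_analysis(requirements, implemented):
    print(f"priority {weight}: {name}")
```

The sorted output doubles as a remediation backlog: work from the top until the list is empty.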

Enterprise AI Risk Management Framework Checklist

This checklist supports enterprise AI risk management practices across governance, data, modeling, deployment, monitoring, and compliance.

Enterprise AI Governance and Oversight

Enterprise AI governance isn’t the sort of thing you can patch together when something goes sideways. It’s the scaffolding that keeps your AI operations honest, accountable, and running smoothly from the inside out. As you scale up AI—think Microsoft Copilot, Power Platform bots, and relentless automation—governance roles and organizational policies become mission-critical for getting ahead of risks before they land you in compliance hot water.

This kind of oversight means more than writing up a playbook and wishing for the best. You need cross-functional leadership, usage contracts, and tools that lock in responsible AI behaviors at every level. Microsoft offers a toolbox for operationalizing all this, with identity management, role-based controls, data policy enforcement, and the behind-the-scenes logging to keep everything accountable.

The sections ahead dive into the practicalities: who’s responsible for what, how to craft enforceable policies (not just nice words on paper), and how real-time registries give you an eagle-eye view on every model doing work on your behalf. Blend that with Microsoft-specific governance strategies—from governing AI agents to strong Azure guardrails—and you’re looking at a playbook for resilience, not just risk avoidance.

AI Governance Roles and Usage Policies

- Establish Core Governance Roles: Key positions include AI Policy Officers (set direction, resolve conflicts), Risk Data Stewards (own datasets, manage access, review for issues), and AI Ethics Leads (champion transparency, fairness, and compliance). All roles should have clear mandates, escalation paths, and ties to business risk leadership. Governance boards act as the last line of defense, overseeing risk intake and ensuring EU AI Act compliance.

- Formulate and Enforce Usage Policies: Spell out what’s allowed with AI, who can access models or outputs, and how data privacy is protected. Use Microsoft governance capabilities like Purview, DLP, and RBAC to make sure these aren’t just suggestions, but active controls. Copilot governance strategies exemplify the blend of licensing, contracts, and technical controls needed at scale.

- Review and Adapt Regularly: As AI use evolves and regulations shift, bring governance boards back to the table to revise policies, run risk audits, and review new AI deployments for compliance by design. Automated monitoring, logging, and usage tracking, powered by Microsoft’s own dashboards and compliance centers, support continuous improvement.

- Enable Cross-Platform Guardrails: Implement consistent guardrails across Microsoft 365, Power Platform, and Azure to avoid policy drift, identity chaos, or unintentional data leaks. AI governance is not just about policies—it’s about system-level controls and integration.

Managing Model Risks with AI Model Registry Systems

- Centralize Model Lifecycle Management: Adopt an enterprise-wide AI model registry where every deployed model—be it Copilot’s predictive engine or a custom Azure ML creation—gets logged, documented, and versioned. This brings visibility to what’s active, in review, or decommissioned.

- Track and Assess Model Risk Factors: Use the registry to capture essential model data: drift scores, audit history, sensitivity classifications, or regulatory risk tags (GDPR, HIPAA, EU AI Act). Platforms like Microsoft Purview and Azure ML let you correlate these markers, supporting better risk evaluation and incident response. Purview’s advanced governance tools are crucial here for continuous monitoring and connector-level data policies.

- Streamline Approval and Review Processes: Make registry entry mandatory before any model gets production rights. Automate workflow triggers for risk review, ethics checks, and compliance audits. For Fabric or Power Platform scenarios, embrace enforced constraints and ownership logs, not just documentation.

- Enable End-to-End Traceability: When something goes wrong, the registry is your forensic launchpad. It provides not only history for compliance but also the ability to roll back or replay decision logic as needed. Lifecycle management here goes beyond technical hygiene; it’s fundamental for legal defensibility.

Technical Risk Mitigation Strategies for AI Systems

The best AI risk policy is useless if you don’t have the technical muscle to back it up. That’s where real-time observability, relentless testing, and cybersecurity come into play. As threat actors wise up and new risks sneak into every update, enterprises running Microsoft-powered AI need to build technical risk mitigation into every level of their stack.

Think of this as your AI immune system. You need layers—monitoring to catch suspicious behavior, secure access controls to block what shouldn’t happen, and automated testing to keep your models in check even as new data or regulations hit. Microsoft Defender, Azure’s diagnostic suites, and fine-grained access policies give you the toolkit to lock down your environment and stay agile in response to whatever’s next.

In the following sections, you’ll see how to weave these technical controls directly into your AI ecosystem, supported by modern best practices. Want to avoid amplified errors from agents, silent failures, or invisible security vulnerabilities? This is where you put those defense strategies to work—especially guided by lessons learned from real Microsoft deployments.

Building AI Observability and Testing Workflows

- Set Up Real-Time Dashboards and Logging: Use dashboards, tight logging, and alerting systems (like Azure Monitor or Power BI) to gain up-to-the-second visibility into AI operations, model inference, and decision outputs. Integrate this with Microsoft Defender for Cloud to unify security, compliance, and operational data.

- Automate Testing Across the Model Lifecycle: Build automated unit, integration, and regression tests into every phase of model development—before deployment, during updates, and in ongoing production use. Cover both accuracy checks and compliance aspects to avoid unwanted surprises as you scale.

- Monitor for Model Drift and Data Anomalies: Monitor incoming data and prediction outputs to detect shifts, performance drops, or unexpected behaviors—using ML Ops toolchains, Power Platform analytics, or custom scripts. Real-time alerting lets you respond before small issues become big incidents.

- Enable Incident Replay and Audit Trails: Store event logs and data snapshots so you can reconstruct incidents for analysis, compliance, and remediation. With Microsoft tools feeding audit data to Power BI dashboards, leaders get the transparency and early warning they need to prioritize responses.
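
One way to implement the "custom scripts" option for drift monitoring is the Population Stability Index (PSI), which compares the binned distribution of a feature or score at training time against what production is seeing now. The article does not prescribe PSI; it is swapped in here as one common technique, and the bin count and the small floor used to avoid `log(0)` are assumptions.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a current sample of one numeric feature."""
    low, high = min(expected), max(expected)
    width = (high - low) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor each share so empty bins do not produce log(0).
        return [max(count / len(values), 1e-6) for count in counts]

    base, current = proportions(expected), proportions(actual)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, current))

# Synthetic data: the "current" sample is squeezed into the upper half of the range.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(baseline, shifted)
if psi > 0.25:
    print(f"significant drift (PSI={psi:.2f}), raise an alert")
```

A widely used rule of thumb treats PSI below 0.1 as stable and above 0.25 as significant drift, but these cut-offs are conventions and worth calibrating against your own data.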

Technical Safeguards and Cybersecurity Integration

- Harden Access Controls and Authentication: Employ Microsoft Entra ID, strong Conditional Access Policies, and Multi-Factor Authentication to limit who (and what) can access AI pipelines, models, or data. Set inclusive, time-bound policies with clear exception management. Avoid identity sprawl by enforcing a disciplined governance loop.

- Lock Down Permissions Using Least Privilege: Only grant the minimum roles needed for service accounts, agents, and users, especially in scenarios using Microsoft Graph or OAuth integrations. Review and revoke stale or broad permissions regularly, and leverage admin consent workflows.

- Encrypt and Segment Data Flows: Secure data in transit and at rest with encryption standards native to Azure, Microsoft 365, and Power Platform. Use network segmentation and environment boundaries, such as separate production and testing tenants, to keep sensitive AI workloads safely isolated.

- Detect and Respond to Threats: Deploy Defender for Cloud and Microsoft Sentinel (formerly Azure Sentinel) for persistent monitoring and incident response. Automate detection of anomalies, adversarial attacks, or unauthorized access, then trigger workflows to alert stakeholders, lock down affected areas, and start post-incident reviews.
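
The periodic review of stale or broad permissions can be sketched as a filter over an exported list of grants. This example does not call Microsoft Graph; it assumes you already have grants as plain dictionaries, and the field names, the 90-day idle threshold, and the choice of which scopes count as "broad" are all assumptions for illustration.

```python
from datetime import date, timedelta

# Illustrative choice of scopes to treat as broad; tune this to your own policy.
BROAD_SCOPES = {"Directory.ReadWrite.All", "Files.ReadWrite.All", "Mail.ReadWrite"}

def flag_grants(grants, today, max_idle_days=90):
    """Return (principal, reasons) for grants that are broad, stale, or both."""
    findings = []
    for grant in grants:
        reasons = []
        if BROAD_SCOPES.intersection(grant["scopes"]):
            reasons.append("broad scope")
        if today - grant["last_used"] > timedelta(days=max_idle_days):
            reasons.append("stale")
        if reasons:
            findings.append((grant["principal"], reasons))
    return findings

# Hypothetical export of permission grants; the principals are made up.
grants = [
    {"principal": "svc-report-bot", "scopes": ["Files.Read.All"],
     "last_used": date(2024, 5, 28)},
    {"principal": "legacy-sync-agent", "scopes": ["Directory.ReadWrite.All"],
     "last_used": date(2023, 11, 2)},
]

for principal, reasons in flag_grants(grants, today=date(2024, 6, 1)):
    print(principal, "->", ", ".join(reasons))
```

The findings list is a natural input for an access review ticket or an automated revocation workflow.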

Data Governance and Protection in AI Systems

Data is where AI risks most often start—and where they’re hardest to spot. Good data governance goes beyond locking down sensitive files; it’s about putting a discipline in place so your training sets, production data, and AI-derived content stay secure, ethically sourced, and compliant across all your enterprise systems.

Microsoft-heavy organizations face the challenge of orchestrating data controls and privacy protocols across SharePoint, Fabric, Dataverse, Power Platform, and OneLake—each with its own quirks. When you don’t get this piece right, you open your business to operational chaos, legal risks, and AI bias that can be awfully hard to unwind later.

The next sections dive into the foundations: how to enforce data controls, keep your crown-jewel information out of trouble, and catch bias before it snowballs into a business or compliance disaster. Achieving this means stabilizing platforms with practical checklists and data strategies, and leveraging architectures like Microsoft Fabric for scalable, consistent governance.

Data Governance and Data Risks in AI Workflows

- Build a Data Governance Framework: Construct clear policies defining who owns data (not just who accesses it), who is accountable for stewardship, and how permissions work. For sensitive AI scenarios, use Microsoft Dataverse rather than SharePoint for better scale, schema control, and field-level security.

- Prioritize and Label Sensitive Data: Use automated sensitivity labels, cataloging, and data lineage tools (like Purview) to ensure regulated or high-value data sets are tracked and handled correctly—across Microsoft 365 and cloud boundaries.

- Enforce Regulated Data Access and Logging: Distinguish between who owns data and who accesses it, regularly review permissions, and keep audit logs for all major actions. AI assistants like Copilot inherit these controls, so your risk lives at the data layer, not just the app.

- Monitor for Data Drift and Quality Risks: Continuously assess data freshness, completeness, and lineage, setting up processes to detect and correct issues. This avoids the “garbage in, garbage out” problem that dooms both operational workflows and fairness efforts in AI.

- Respond Rapidly to Data Failures: Build strict protocols for detection, documentation, and recovery when data issues slip through—by enforcing schema discipline, indexed columns, and clear permission models across the board.
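
The freshness and completeness checks mentioned above can start out very small. The sketch below assumes rows arrive as dictionaries and that you know each dataset's last update time; the field names and the 24-hour freshness window are hypothetical.

```python
from datetime import datetime, timedelta

def completeness(rows, required_fields):
    """Share of rows in which every required field is present and non-empty."""
    if not rows:
        return 0.0
    complete = sum(
        all(row.get(name) not in (None, "") for name in required_fields)
        for row in rows
    )
    return complete / len(rows)

def is_fresh(last_updated, now, max_age):
    """True if the dataset was refreshed within the allowed window."""
    return now - last_updated <= max_age

# Made-up sample rows: two of the four have a missing value.
rows = [
    {"customer_id": 1, "region": "EU", "consent": True},
    {"customer_id": 2, "region": "", "consent": True},
    {"customer_id": 3, "region": "US", "consent": None},
    {"customer_id": 4, "region": "US", "consent": False},
]

score = completeness(rows, ["customer_id", "region", "consent"])
fresh = is_fresh(datetime(2024, 6, 1, 8, 0), datetime(2024, 6, 1, 20, 0),
                 max_age=timedelta(hours=24))
print(f"completeness={score:.0%}, fresh={fresh}")
```

Note that a legitimate `False` value counts as present; only `None` and empty strings are treated as missing.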

Detecting and Mitigating Bias in Enterprise AI

- Regular Bias Audits: Use built-in Microsoft tools or third-party plugins to routinely audit AI model outputs for bias across all key demographic groups and scenarios.

- Quantify and Report Findings: Measure bias using statistical tests and present results in compliance dashboards for leadership and audit teams. Integrate this with Purview and Sentinel for AI-generated content.

- Automated Mitigation Strategies: Apply model retraining, resampling, or data augmentation automatically when bias is detected, rather than waiting for compliance to flag problematic behavior weeks later.

- Connect Efforts to Business Objectives: Ensure bias reduction is part of business objectives, both for legal compliance and to avoid reputational damage that always costs more to repair than to prevent.
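
As a starting point for quantifying bias, two simple group-level measures are the demographic parity difference and the disparate impact ratio, both computed from per-group selection rates. The article does not name specific metrics, so these are one reasonable choice rather than the author's method; the group labels and outcomes below are synthetic.

```python
from collections import defaultdict

def selection_rates(records):
    """records: iterable of (group, outcome) pairs, where outcome is truthy if selected."""
    totals, positives = defaultdict(int), defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += int(bool(outcome))
    return {group: positives[group] / totals[group] for group in totals}

def parity_difference(rates):
    """Gap between the highest and lowest selection rate."""
    return max(rates.values()) - min(rates.values())

def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return 1.0 if highest == 0 else min(rates.values()) / highest

# Synthetic audit sample: group A selected 8 of 10 times, group B 4 of 10.
records = [("A", 1)] * 8 + [("A", 0)] * 2 + [("B", 1)] * 4 + [("B", 0)] * 6

rates = selection_rates(records)
ratio = disparate_impact_ratio(rates)
if ratio < 0.8:
    print(f"disparate impact ratio {ratio:.2f} is below 0.8, flag for review")
```

The 0.8 cut-off follows the "four-fifths" heuristic used in US employment contexts; it is a screening threshold, not a legal determination, and other domains may call for different metrics.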

Operational and Business Risk Management in Enterprise AI

Even the best tech teams know AI risk isn’t just about data and code. You’ve got to tackle the business and operational sides, too, or risk getting blindsided by disruptions, cascading system failures, or compliance meltdowns when things don’t go as planned.

Enterprise-scale AI demands a clear-headed approach to risk classification, resilience, and cross-platform impact—especially when your core tools are built on Microsoft 365, Azure, or partner clouds. If one piece buckles, you’ll see the effects ripple throughout Teams, Power Automate, SharePoint—you name it.

The next pieces bring these concepts down to earth: how to spot operational threats early, build resilient business continuity plans, and classify AI projects by their risk so your mitigation efforts match the potential fallout. Don’t get caught out by governing the wrong tool or missing systemic threats. Learn from the failures of others and start thinking “system-first,” not just “tool-by-tool.”

Assessing Operational Risks and Building Resilience

- Thorough Risk Assessment: Map out all key failure points—AI model dependencies, data feeds, and automation chains—using regular reviews and cross-team workshops.

- Business Continuity Planning: Develop business continuity plans covering backup data, alternate workflows, and rapid failover procedures for your most business-critical AI systems.

- Resilient Operating Models: Use practices like disaster recovery testing and role-based access reviews to maintain operational clarity and quick recovery from system failures.

- Monitor and Adapt: Continually monitor, measure, and adapt your resilience playbook as deployments and risk profiles change across Microsoft and multi-cloud setups.
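
Mapping failure points across model dependencies, data feeds, and automation chains is at heart a graph problem: given one failing component, what sits downstream of it? The sketch below uses a breadth-first traversal over a hypothetical dependency map; the component names are invented for the example.

```python
from collections import deque

# Edges point from a component to the things that depend on it (made-up names).
dependents = {
    "crm_data_feed": ["churn_model"],
    "churn_model": ["retention_flow", "sales_dashboard"],
    "retention_flow": ["teams_notifications"],
}

def blast_radius(start, dependents):
    """Return every component that is directly or indirectly downstream of `start`."""
    seen = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for downstream in dependents.get(node, []):
            if downstream not in seen:
                seen.add(downstream)
                queue.append(downstream)
    seen.discard(start)  # a cycle should not list the start as its own dependent
    return seen

print(sorted(blast_radius("crm_data_feed", dependents)))
```

Components with the largest blast radius are the ones that most need a tested failover path in the continuity plan.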

AI Risk Classification for Use Cases Like Healthcare and Fraud Detection

- Define Risk Levels by Use Case: Assign “High,” “Medium,” or “Low” risk flags to AI applications—healthcare, fraud detection, or automated hiring get the most scrutiny and controls.

- Assess Sensitivity of Data and Impact: For each project, score based on the sensitivity of data processed and the consequences of error or bias—for example, life-or-death in healthcare, massive financial impact in fraud.

- Apply Tiered Controls: Impose stricter permissions, mandatory human-in-the-loop review, and higher auditability for high-risk categories, especially in regulated domains handled by Microsoft-powered workflows.

- Integrate with Governance Workflows: Ensure every use case is logged and tracked as part of your AI model registry, with classification data visible for compliance and governance review cycles.
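
The classification steps above can be expressed as a small scoring function. This is a sketch under stated assumptions: the 1 to 3 scales, the multiplication rule, the tier thresholds, and the control lists are illustrative choices, not values taken from the EU AI Act or any other regulation.

```python
# Assumed controls per tier, loosely following the tiered-controls bullet above.
CONTROLS = {
    "High": ["human-in-the-loop review", "strict permissions", "full audit logging"],
    "Medium": ["periodic review", "standard audit logging"],
    "Low": ["standard monitoring"],
}

def classify(data_sensitivity: int, impact: int) -> str:
    """Combine two 1-3 scores into a risk tier (thresholds are illustrative)."""
    for value in (data_sensitivity, impact):
        if value not in (1, 2, 3):
            raise ValueError("scores must be 1, 2, or 3")
    score = data_sensitivity * impact
    if score >= 6:
        return "High"
    if score >= 3:
        return "Medium"
    return "Low"

# Hypothetical scores for three use cases: (data sensitivity, impact of error).
use_cases = {
    "healthcare triage": (3, 3),
    "fraud detection": (2, 3),
    "internal FAQ bot": (1, 1),
}

for name, (sensitivity, impact) in use_cases.items():
    tier = classify(sensitivity, impact)
    print(f"{name}: {tier} -> {', '.join(CONTROLS[tier])}")
```

Storing the tier alongside each model registry entry keeps the classification visible in governance reviews.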

Ethics and Legal Compliance in Enterprise AI

Nobody wants to be the next headline for an AI system gone wrong. That’s why ethical and legal compliance is at the heart of enterprise AI risk management. It’s more than just a box to check—it’s about building transparency, explainability, and real human oversight into every piece of your AI life cycle.

Your Microsoft deployment—Copilot, Dynamics, Power Platform—should reflect your values, maintain regulatory compliance, and be ready to demonstrate accountability to auditors and the public alike. That means explainable AI, auditable records, human-in-the-loop checkpoints, and proactive identification of all the little legal traps hiding in your contracts and pipelines.

The next sections go deep on how to turn these ideals into process: explainability (because you can’t fix what you can’t understand), human-in-the-loop models, and a practical approach to reducing legal risk, drawing on the latest insight from tax and regulatory regimes like the EU ViDA initiative.

Explainability and the Role of Human-in-the-Loop in AI Systems

- Transparent Model Outputs: Use explainable AI (XAI) methods—like SHAP values, LIME explanations, or model cards—to make predictive outputs interpretable for users, auditors, and stakeholders.

- Human-in-the-Loop Oversight: Require human review of key AI decisions—such as loan approvals or hiring shortlists—especially in high-risk scenarios. Embed mandatory checkpoints in your process where humans must validate, override, or investigate model outputs.

- Train and Empower End-Users: Provide ongoing education through governed learning centers or centralized resources to keep staff up to date, involved, and accountable for proper AI use.

- Auditability for Regulatory Review: Log all critical AI decisions and human interventions to maintain a clear audit trail, which is key for GDPR, EU AI Act, and other regulatory needs.
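
A human-in-the-loop checkpoint with an audit trail can be sketched as a single decision function that routes risky or low-confidence cases to a reviewer and logs every outcome. The function name, the 0.9 confidence threshold, the outcome strings, and the log fields are assumptions for illustration, and the in-memory list stands in for whatever durable audit store you use.

```python
from datetime import datetime, timezone

def decide(case_id, model_score, high_risk, log, reviewer=None, threshold=0.9):
    """Auto-approve only confident, low-risk cases; everything else needs a human."""
    needs_review = high_risk or model_score < threshold
    if not needs_review:
        outcome = "auto_approved"
    elif reviewer is None:
        outcome = "pending_human_review"
    else:
        outcome = reviewer(case_id, model_score)  # the human decision wins
    log.append({
        "case_id": case_id,
        "model_score": model_score,
        "high_risk": high_risk,
        "human_reviewed": needs_review and reviewer is not None,
        "outcome": outcome,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    })
    return outcome

audit_log = []
# A high-risk case is held for review even though the model is confident.
print(decide("loan-1042", 0.97, high_risk=True, log=audit_log))
```

Logging the model score next to the human outcome makes it possible to show later how often reviewers overrode the model.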

Legal Risk Mitigation and Compliance Strategies

- Clarify Liability and IP Rights Up Front: Define ownership of models, data, and outputs in every vendor contract and internal policy. For any outsourced AI, demand clear terms on indemnification, IP rights, and accountability in contracts.

- Enforce Data Protection and Privacy Compliance: Apply data minimization, strong access controls, and automated audit trails (Microsoft Purview’s extensive logging makes this easier) to comply with GDPR, CCPA, and domain-specific regulations.

- Proactive Regulatory Compliance Monitoring: Track changes in key regulations (EU AI Act, HIPAA, sector-specific rules) and assign ownership to compliance leads for updating risk assessments and action plans.

- Mitigate Insider and Vendor Risks: Use tiered access, ongoing access reviews, and rich monitoring (including Microsoft Sentinel) to detect potential data breaches, model misuse, or compliance failures—especially with third-party integrations.

- Establish Legal Risk Training and Communication Protocols: Make sure business leads, developers, and support teams understand the legal stakes of AI decisions. Training programs, policy refreshers, and regular compliance workshops keep everyone on the same (regulated) page.

Common Mistakes People Make About Ethics and Legal Compliance in Enterprise AI

Below are frequent mistakes organizations make when addressing ethics and legal compliance as part of enterprise AI risk management, with brief explanations and suggested corrective actions.

Mistake: Conducting a single legal review or audit and assuming ongoing compliance.

Why it’s risky: Laws, models, data sources, and business uses evolve—so do risks and obligations.

Mitigation: Implement continuous monitoring, periodic reassessments, and update policies and controls as models or use-cases change.

Mistake: Reusing legacy data protection measures without assessing AI-specific data flows, training sets, or model outputs.

Why it’s risky: AI can infer sensitive attributes, enable re-identification, or produce outputs not anticipated by traditional controls.

Mitigation: Map data lineage for AI pipelines, perform DPIAs/PIAs for model development and deployment, and apply data minimization, anonymization, and purpose constraints where appropriate.

Neglecting bias and fairness beyond high-level statements

Mistake: Issuing ethical principles without operationalizing bias testing, remediation, or governance.

Why it’s risky: Undetected or unmitigated bias can lead to discrimination, reputational damage, and legal exposure.

Mitigation: Define measurable fairness metrics, run pre-deployment and ongoing bias assessments, document checks, and require remediation steps before production.

Mistake: Treating vendor or open-source models as black boxes without supply-chain due diligence.

Why it’s risky: Licenses, provenance, training data issues, and hidden capabilities can create compliance and IP risks.

Mitigation: Conduct vendor risk assessments, require model provenance documentation, validate licensing terms, and run independent security and safety testing.

Mistake: Keeping legal/compliance siloed from product, engineering, and risk teams.

Why it’s risky: Misalignment leads to late discovery of issues, unsuitable controls, or business decisions that increase legal exposure.

Mitigation: Create cross-functional governance bodies, integrate ethics and legal reviews into product development lifecycles, and embed compliance checkpoints into release processes.

Mistake: Deploying models without sufficient documentation of decisions, data, model limitations, or intended use.

Why it’s risky: Regulators, customers, and incident responders may require explanations; lack of documentation hampers audits and remediation.

Mitigation: Maintain model cards, datasheets, decision-logging, and test reports; document intended use, performance, and limitations.

Mistake: Assuming a single legal regime applies globally or that current law is static.

Why it’s risky: Different jurisdictions have varying AI, privacy, consumer protection, and sectoral rules; new regulations are emerging rapidly.

Mitigation: Monitor global regulatory developments, perform jurisdictional impact analyses, and design controls that can be adapted to stricter regimes.

Mistake: Believing toolkits (e.g., debiasing algorithms) alone resolve ethical concerns.

Why it’s risky: Ethical issues often involve trade-offs, governance, and human judgment beyond algorithmic adjustments.

Mitigation: Combine technical controls with policy, governance, human oversight, and stakeholder engagement to make value-aligned decisions.

Mistake: Lacking clear procedures, roles, and escalation paths for AI-related harms or legal inquiries.

Why it’s risky: Slow or uncoordinated responses amplify harm, regulatory penalties, and loss of trust.

Mitigation: Define incident response plans specific to AI incidents, assign accountable owners, and rehearse response scenarios.

Mistake: Using obscure notices or vague consent for AI-driven decisions and data collection.

Why it’s risky: Consumers and regulators expect meaningful transparency; insufficient disclosure can violate laws and erode trust.

Mitigation: Provide clear, accessible disclosures about AI use, data practices, and opt-out or human review options where required.

Mistake: Treating ethics as qualitative rhetoric rather than integrating into risk scoring and capital planning.

Why it’s risky: Management cannot prioritize or resource mitigation without measurable risk metrics.

Mitigation: Incorporate AI ethics and compliance metrics into the enterprise risk management framework and tie them to governance KPIs.

Mistake: Assuming engineers, product managers, and executives understand AI-specific legal and ethical requirements.

Why it’s risky: Misuse, improper data handling, or incorrect deployment decisions stem from lack of awareness.

Mitigation: Provide role-based training, clear process guides, and accessible expertise (legal/ethics advisors) for AI teams.

Emerging Challenges in AI Risk Management

Just when you think you’ve got AI risk under control, the landscape shifts again. Generative AI models, new types of autonomous agents, and the rise of shadow AI turn old threats on their head and introduce new kinds of headaches for even the most seasoned IT leaders.

Large organizations, especially those relying on Microsoft’s constantly evolving stack, face unique governance and security challenges: rogue deployments, ungoverned data lakes, and bot ecosystems that don’t play by the rules. Keeping pace means going well beyond policy and documentation, and embedding controls that catch these risks before they explode.

Up next, we’ll get hands-on about managing generative AI’s curveballs and reining in shadow AI, pulling in strategies learned from real shadow IT incidents and AI agent governance in Microsoft 365. Brace yourself: these aren’t yesterday’s risks, and your playbook had better be ready to adapt.

Managing Risks of Generative AI and Advanced Agents

- Monitor for Unpredictable Outputs and Misinformation: Generative models can produce outputs that are biased, nonsensical, or outright harmful. Set up pre- and post-processing review pipelines to flag and filter problematic outputs, especially in tools like Microsoft Copilot Notebooks, where new “shadow data lakes” can emerge with untraceable content.

- Detect Compliance Drift and Shadow Data: Generative AI can create derivative data that bypasses normal data classification or sensitivity labeling protocols. Use automated data tagging, regular audits, and default labeling of generated outputs to prevent compliance violations down the line.

- Constrain Agent Identities and Permissions: Use Entra Agent ID, RBAC, and strong data boundary controls in Power Platform environments to stop agents from “drifting” into data they shouldn’t touch. Limit networking, connector scopes, and session persistence to slash the odds of an agent running away from its intended use.

- Regular Review and Testing of Advanced Capabilities: Schedule reviews and “red team” testing for models and agents that evolve through self-learning or have open-ended capability sets, turning up hidden risks before they scale throughout your Microsoft-powered environments.
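To make the post-processing and default-labeling ideas above a bit more concrete, here is a minimal sketch of an output review step. Everything in it is an assumption for illustration: the two regular expressions, the `DEFAULT_LABEL` value, and the `review_output` helper are hypothetical, and a real deployment would lean on its own classifiers and DLP rules rather than a couple of patterns.

```python
import re
from dataclasses import dataclass, field

# Hypothetical patterns for illustration only; real pipelines would use
# proper classifiers and data loss prevention rules.
FLAG_PATTERNS = {
    "possible_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "possible_secret": re.compile(r"(?i)\b(api[_-]?key|password)\s*[:=]\s*\S+"),
}

DEFAULT_LABEL = "Confidential"  # assumed default label for generated content


@dataclass
class ReviewedOutput:
    text: str
    label: str = DEFAULT_LABEL
    flags: list[str] = field(default_factory=list)

    @property
    def needs_human_review(self) -> bool:
        # Any matched pattern routes the output to a reviewer.
        return bool(self.flags)


def review_output(text: str) -> ReviewedOutput:
    """Apply the default label and record every pattern the text matches."""
    flags = [name for name, pattern in FLAG_PATTERNS.items() if pattern.search(text)]
    return ReviewedOutput(text=text, flags=flags)
```

The design choice worth noting is that the label is applied by default and flags are additive, so generated content never leaves the pipeline unlabeled even when nothing is flagged.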

Addressing Shadow AI, Unauthorized Use, and the AI Ecosystem

- Identify and Inventory Shadow AI: Shadow AI includes any unsanctioned bots, apps, or models running outside established governance. Use Defender for Cloud Apps, Entra ID logs, and Purview DLP boundaries to expose rogue deployments.

- Enforce Approval Workflows and DLP Boundaries: Require all new AI deployments to go through documented risk and data flow assessments before production access, with DLP and conditional access policies locking down sensitive Microsoft 365 and Azure endpoints.

- Limit App and Agent Scopes by Default: Use the principle of least privilege for AI tools and integrations, and block unapproved HTTP connectors or broad Graph permission scopes, tying governance to real system constraints, not just intentions.

- Sustain Ongoing Monitoring and Remediation: Run regular Shadow IT discovery sprints, provide staff education about risks, and set up procedures for documenting and remediating violations without breaking legitimate productivity.
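The inventory and least-privilege steps above boil down to comparing what you discover against what you approved. The sketch below assumes you have already exported a list of discovered apps and their granted scopes from your discovery tooling; the `APPROVED_APPS` allowlist, the app names, and the `triage` helper are hypothetical, and which scopes count as “too broad” is a policy decision, not something this code can settle.

```python
from dataclasses import dataclass

# Hypothetical allowlist; in practice this would come from your approval workflow.
APPROVED_APPS = {"contoso-helpdesk-bot", "finance-forecast-model"}

# Example scopes treated as overly broad here. This set is an assumption.
BROAD_SCOPES = {"Files.ReadWrite.All", "Mail.ReadWrite", "Sites.FullControl.All"}


@dataclass(frozen=True)
class DiscoveredApp:
    name: str
    scopes: frozenset[str]


def triage(apps: list[DiscoveredApp]) -> dict[str, list[str]]:
    """Split discovered apps into unsanctioned and over-permissioned findings."""
    findings: dict[str, list[str]] = {"unsanctioned": [], "over_permissioned": []}
    for app in apps:
        if app.name not in APPROVED_APPS:
            findings["unsanctioned"].append(app.name)
        if app.scopes & BROAD_SCOPES:  # any overlap with the broad set
            findings["over_permissioned"].append(app.name)
    return findings
```

Note that an approved app can still land in the over-permissioned list, which is the point: approval and scope review are separate checks.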

AI Supply Chain and Third-Party Risk Management

No enterprise AI risk program is complete without looking outside your own walls. As you ramp up your Microsoft-based AI deployments, third-party vendors and open-source models become regular guests in your digital kitchen, each one bringing its own bag of (potentially nasty) surprises.

Yet too often, these external risks go overlooked, leaving soft spots for attackers, compliance gaps, or operational surprises. Don’t let supplier risk sneak up on you. You need disciplined procedures for evaluating vendors and open-source components—checking for security flaws, legal incompatibilities, and “community model” risks—especially as your business weaves AI into every facet of operations.

In the breakout sections ahead, you’ll get a practical framework for due diligence on vendors, as well as tips for safely leveraging open-source AI in your Microsoft ecosystem. Because if you don’t know where your software comes from, you can’t know what your risks are.

Vendor Risk Assessment for Enterprise AI Solutions

- Security Posture: Audit the vendor’s cybersecurity, looking for evidence of tested access controls, encryption, and incident response capabilities.

- Compliance Credentials: Check for certifications and attestations (ISO, SOC) and for GDPR readiness, and ask for real-world proof of regulatory alignment, not just sales slides.

- Ethical AI Practices: Review the vendor’s approaches to bias mitigation, explainability, and data lineage as part of contract negotiation.

- Operational Resilience: Assess support channels, uptime guarantees, and response SLAs for when—because it’s “when,” not “if”—something goes wrong.

- Contractual Protections: Ensure contracts spell out risk allocation, liability, indemnities, and clear procedures for audits and exit scenarios.
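One way to turn the five criteria above into something comparable across vendors is a weighted score. This is only a sketch: the weights, the 1-to-5 rating scale, and the `vendor_score` helper are assumptions made up for illustration, and your own risk committee would set the real values.

```python
# Hypothetical weights that sum to 1.0 and mirror the five criteria above.
WEIGHTS = {
    "security_posture": 0.30,
    "compliance_credentials": 0.25,
    "ethical_ai_practices": 0.15,
    "operational_resilience": 0.15,
    "contractual_protections": 0.15,
}


def vendor_score(ratings: dict[str, int]) -> float:
    """Return a weighted score from per-criterion ratings of 1 (poor) to 5 (strong)."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    for name, value in ratings.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} rating must be between 1 and 5")
    # Weighted sum over the known criteria, rounded for reporting.
    return round(sum(WEIGHTS[k] * ratings[k] for k in WEIGHTS), 2)
```

Refusing to score a vendor with a missing rating is deliberate: a gap in the assessment should block the comparison rather than quietly count as zero.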

Managing Open-Source AI Model Vulnerabilities

- License Compliance: Confirm that every open-source model used has a clear, compatible license—and all dependencies are properly documented. Avoid accidental IP infringement.

- Patch and Vulnerability Management: Frequently review models for unpatched vulnerabilities or outdated dependencies. Set policies to patch and test updates before production use.

- Bias and Transparency Checks: Review training data and model documentation to expose hidden biases that may have crept in from the community or external datasets.

- Integration Security Scans: Before deploying open-source models in Microsoft systems, run rigorous security and privacy scans to ensure no backdoors or misconfigurations linger.
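Two of the checks above, license compliance and artifact integrity, are easy to automate as an admission gate. The sketch below assumes the model publisher provides a SHA-256 checksum and an SPDX license identifier; the `ALLOWED_LICENSES` set and the `admit_model` helper are hypothetical. It does not scan for backdoors or bias, which need dedicated tooling.

```python
import hashlib

# Hypothetical allowlist of SPDX license identifiers cleared by your legal team.
ALLOWED_LICENSES = {"MIT", "Apache-2.0", "BSD-3-Clause"}


def sha256_of(data: bytes) -> str:
    """Hex digest of the artifact bytes."""
    return hashlib.sha256(data).hexdigest()


def admit_model(license_id: str, artifact: bytes, expected_sha256: str) -> list[str]:
    """Return the reasons to reject a model artifact; an empty list means admit."""
    problems = []
    if license_id not in ALLOWED_LICENSES:
        problems.append(f"license {license_id!r} is not on the allowlist")
    if sha256_of(artifact) != expected_sha256.lower():
        problems.append("artifact checksum does not match the published value")
    return problems
```

Returning a list of problems rather than a boolean keeps the audit trail readable: the reviewer sees every reason a model was held back, not just the first one.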

AI Incident Response and Financial Impact Modeling

Even with the best planning, AI failures happen. The real test is what you do next. That’s where incident response plans and financial impact modeling come in handy: giving your team clear protocols for damage control, rebuilding trust, and making sure budgets (and reputations) don’t take a bigger hit than necessary.

Incident preparedness means more than filing a ticket and hoping for the best. You need stepwise playbooks for everything from model drift to data poisoning, anchored by documentation, replayability, and rigorous analytics for leadership and auditors alike. And on the financial side, it takes quantifying potential impacts, justifying budgets for insurance, and negotiating risk transfers with vendors and partners, so one bad night doesn’t sink the ship.

The next sections lay out how to structure failure response protocols and put numbers around risk exposure. With these in hand, you’ll be ready for whatever chaos tomorrow’s AI systems might throw your way—budget, boardroom, and beyond.

Establishing AI System Failure Protocols

- Incident Detection and Triage: Set up automated alerts and triage procedures for quick identification of anomalies, failures, or suspicious outputs—so you don’t lose precious response time.

- Containment and Documentation: Freeze affected systems, log all activity, and document timelines to provide a clear record for postmortem analysis and compliance reviews.

- Root Cause Analysis and Reproducibility: Use recorded data and model states to replay the failure, enabling precise diagnosis and faster root-cause discovery.

- Remediation and Communication: Deploy established fix procedures (rollback, retrain, apply patches) and engage communication leads to notify affected stakeholders and, if needed, regulators.
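The detection and documentation steps above can be sketched in a few lines. This example assumes you track a single numeric health metric (say, an error rate) per system; the z-score threshold of 3.0, the `is_anomalous` function, and the `Incident` record are hypothetical choices for illustration, not a prescribed method.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from statistics import mean, stdev


def is_anomalous(history: list[float], latest: float, z_threshold: float = 3.0) -> bool:
    """Flag `latest` when it sits more than z_threshold standard deviations from the history mean."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    sigma = stdev(history)
    if sigma == 0:
        return latest != history[0]  # flat baseline: any change is unusual
    return abs(latest - mean(history)) / sigma > z_threshold


@dataclass
class Incident:
    system: str
    severity: str
    timeline: list[tuple[str, str]] = field(default_factory=list)

    def log(self, event: str) -> None:
        # Timestamp every entry in UTC so the postmortem has one consistent clock.
        self.timeline.append((datetime.now(timezone.utc).isoformat(), event))
```

A typical flow would call `is_anomalous` on each new metric reading, open an `Incident` when it returns `True`, and `log` each containment and remediation step as it happens.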

Quantifying Financial Impact and Transferring AI Risk

- Identify and Categorize Major AI Risks: Assess the types of events—data breaches, bias incidents, regulatory infractions, operational failures—that can hit your organization in the wallet. Categorization makes it possible to assign risk values and anticipate insurance needs.

- Assign Monetary Values to Risks: Work with finance teams, risk officers, and legal advisors to estimate direct and indirect costs—regulatory fines, remediation expenses, lost revenue, and potential reputational damage. Model scenarios drawing on historical incidents, internal data, and industry benchmarks.

- Budget and Prioritize Based on Exposure: Use this quantification to guide your risk mitigation investments, budgeting for controls, audits, or team expansion in higher-risk areas.

- Transfer Risk via Insurance and Contracts: Explore cyber insurance policies tailored for AI-related losses, including liability coverage for algorithmic errors or data breaches. Extend coverage through carefully negotiated vendor contracts and risk allocation clauses, holding external partners accountable where appropriate.

- Continuous Re-Evaluation: Review your risk quantification and transfer strategies at least annually or after major incidents, ensuring coverage and controls stay relevant as your AI landscape and regulatory obligations evolve.
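A simple way to put numbers on the steps above is expected annual loss: estimated probability times estimated impact, with and without insurance. The sketch below is a rough model under stated assumptions: a single loss event per year, a policy with one deductible and one coverage limit, and made-up figures. The `RiskScenario` type and both functions are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskScenario:
    name: str
    annual_probability: float  # estimated chance of the event in a given year
    impact: float              # estimated cost if the event occurs


def expected_annual_loss(s: RiskScenario) -> float:
    """Probability-weighted cost of the scenario with no risk transfer."""
    return s.annual_probability * s.impact


def retained_loss(s: RiskScenario, deductible: float, coverage_limit: float) -> float:
    """Expected loss left with the organization after the insurer pays its share."""
    # The insurer covers the loss above the deductible, capped at the limit.
    insured = max(0.0, min(s.impact - deductible, coverage_limit))
    return s.annual_probability * (s.impact - insured)
```

For example, with an illustrative scenario of a 5% annual probability and a 2,000,000 impact, the expected annual loss is 100,000; a policy with a 250,000 deductible and a 1,000,000 limit leaves 50,000 of that expected loss retained. Comparing the difference against the premium is one input to the insurance decision.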

Enterprise AI Risk Management — FAQ

Risks Associated with AI Systems and the AI Lifecycle

What are the most common risks associated with AI systems in an enterprise?

The most common risks associated with AI systems include model bias and fairness issues, data privacy breaches, security risk from adversarial attacks, lack of explainability, compliance and regulatory violations, operational failures during deployment, and reputational harm. These arise throughout the AI lifecycle, from data collection and model training to deployment and monitoring. Effective AI risk management combines a governance structure, risk frameworks like the NIST AI RMF, and technical controls to mitigate AI risk and support trustworthy AI.

How do risks across the AI lifecycle differ from traditional IT risks?

Risks across the AI lifecycle are often dynamic and context-dependent: models change with new training data, performance can drift in production, and outputs depend on the nature of the model and its training environment. Unlike traditional IT systems, AI products may produce probabilistic outputs, lack deterministic audit trails, and require continuous validation. This demands risk and control processes tailored to the AI lifecycle, including data governance, model validation, and monitoring, to ensure responsible AI development and trustworthy AI systems.

Which stages of the AI lifecycle are highest risk for enterprise use of artificial intelligence?

High-risk stages typically include data acquisition (privacy and bias), model training (amplification of bias, insecure training), validation and testing (insufficient evaluation, poor generalization), deployment (integration failures, unexpected behavior), and maintenance/monitoring (model drift, degradation). For high-risk AI systems and financial services use cases, stricter controls and oversight, clear risk ownership, and periodic audits are critical to manage AI risk effectively.

NIST AI RMF, Risk Frameworks, and Governance and Risk Management

What is the NIST AI RMF and how does it help manage AI risk?

The NIST AI RMF (AI Risk Management Framework) is a voluntary framework providing principles and guidance to manage artificial intelligence risk throughout the AI lifecycle. It helps organizations by offering a structured approach for identifying, assessing, and mitigating risks, aligning governance and risk management, and promoting trustworthy AI. Implementing the AI RMF Core, which is organized around four functions (Govern, Map, Measure, and Manage), supports effective AI risk management, clarifies risk tolerance, and integrates technical and organizational controls.

How do risk frameworks like the NIST AI RMF support building trustworthy AI at scale?

Risk frameworks establish repeatable processes, roles, and metrics that scale across multiple AI projects. They enable consistent categorization of AI systems by risk level, define requirements for AI systems, and support the governance structure and the integration of risk and governance processes. This standardization makes it easier to deploy AI at scale while maintaining trust in AI through monitoring, validation, and documented decision-making aligned with responsible AI practices.

How should an organization set risk tolerance for AI initiatives?

Setting risk tolerance requires aligning enterprise risk appetite with the potential benefits of AI, regulatory obligations, and stakeholder expectations. Organizations should categorize AI systems (e.g., low, medium, high risk), assign clear risk ownership, and define acceptable performance, fairness, and privacy thresholds. Incorporating input from legal, compliance, business leaders, and technical teams ensures the approach to AI risk balances innovation and risk mitigation and supports effective AI risk management.

Managing AI Risk, Mitigating AI Risk, and Responsible AI Practices

What technical and non-technical controls help mitigate AI risk?

Technical controls include data lineage and provenance, robust data validation, model explainability tools, adversarial testing, differential privacy, secure model serving, and continuous monitoring for drift. Non-technical controls include a governance structure, policies for responsible AI development, staff training, clear risk ownership, incident response plans, and third-party vendor management. Combining both types creates a comprehensive approach to mitigate AI risk and maintain trustworthy AI systems.
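As one concrete instance of “continuous monitoring for drift,” the Population Stability Index (PSI) compares how a feature or score was distributed at training time against how it looks in production. This is a minimal sketch, assuming both distributions are already binned into matching proportions; the `psi` function name and the `eps` floor are choices made here for illustration, and PSI is only one of several drift measures you might pick.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions given as proportions."""
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        # Floor empty bins at eps so the logarithm stays defined.
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total
```

Identical distributions give a PSI of zero, and larger values mean a bigger shift. Teams usually pick alert thresholds empirically for each model rather than relying on a single universal cutoff.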

How can enterprises ensure responsible AI development and deployment?

Enterprises should adopt guidelines for responsible AI development that cover ethics, transparency, fairness, and privacy. Practices include bias testing, inclusive datasets, thorough documentation (model cards, data sheets), cross-functional review boards, and compliance checks before deploying AI systems. Continuous post-deployment monitoring and channels for stakeholder feedback are essential to uphold responsible AI throughout the AI lifecycle.

What role does explainability play in mitigating AI-related risks?

Explainability helps stakeholders understand model decisions, supports regulatory compliance, and uncovers potential biases or failure modes. While not always fully achievable for complex models, using interpretable models where possible, local explanation techniques, and documentation improves transparency. Explainability is a key element of trust in AI and is often required for high-risk AI systems, especially in sectors such as financial services or healthcare.

Managing AI Risks Across Industries, AI at Scale, and Major AI Risk Management

How does AI risk management differ between industries like financial services and healthcare?

Financial services and healthcare face stricter regulatory scrutiny, higher potential for harm, and more complex compliance requirements than most sectors. Financial services must manage model risk for lending, trading, and fraud detection with strong controls and auditability, while healthcare must prioritize patient safety, privacy, and clinical validation. Both require domain-specific validation, governance, and documentation, but the scale and nature of consequences shape the approach to managing AI risks in each industry.

What challenges arise when scaling AI across an enterprise, and how can they be addressed?

Challenges include inconsistent data quality, fragmented governance, disparate model development practices, operationalizing monitoring, and version control. Address these by establishing enterprise-wide standards, shared toolchains, centralized model registries, automated testing and deployment pipelines, and a governance structure that enforces risk frameworks and fosters collaboration between data science, IT, and business units to enable trustworthy AI at scale.

What constitutes major AI risk management activities for a large organization?

Major activities include risk assessment and categorization of AI systems, implementing the NIST AI RMF or equivalent frameworks, establishing a governance structure and clear risk ownership, designing controls for data and model integrity, continuous monitoring and incident management, and training staff on responsible AI practices. Regular audits, third-party assessments, and alignment with regulatory requirements are also key components of a mature approach to AI risk management.

Trustworthy AI, the AI Lifecycle, and Implementing Risk Management

How do you build trustworthy AI systems from design through retirement?

Building trustworthy AI requires integrating ethics, security, privacy, and robustness from the design phase: specifying requirements, selecting appropriate datasets, enforcing data governance, conducting rigorous testing, and ensuring explainability. During deployment, implement access controls, monitoring, and performance validation. For retirement, preserve audit trails, archive models and data securely, and manage decommissioning to avoid lingering risks. Applying these controls throughout the AI lifecycle supports trustworthy AI systems and compliance with governance and risk management expectations.

How important is clear risk ownership in effective AI risk management?

Clear risk ownership is critical: designated owners ensure accountability for risk assessments, mitigation plans, monitoring, and incident response. Ownership should be cross-functional—business leaders, data scientists, compliance, and security teams each play defined roles. Clear ownership streamlines decision-making, clarifies risk tolerance, and enables timely implementation of mitigation measures to reduce artificial intelligence risk across projects.

How should organizations approach third-party and open-source AI components?

Treat third-party and open-source components as part of the AI supply chain: conduct due diligence on provenance, licensing, and known vulnerabilities; evaluate model behavior with your data; apply vendor risk assessments; and include these components in monitoring and patching processes. Contracts should require transparency and controls supporting responsible AI development, and risk frameworks should cover the use of AI technologies from external providers to mitigate AI risk effectively.